Abstract

Drought is a significant natural hazard that severely challenges water resource management and agricultural sustainability. This study aims to propose a novel approach for predicting streamflow drought indices (SDI-3, SDI-6, and SDI-12) in humid continental (Stockholm) and semi-arid (ELdiem) climates at different time-steps. The approach utilizes a Quantile Regression Neural Network (QRNN) coupled with wavelet decomposition (WD) techniques. Six mother wavelets (haar, sym8, coif5, bior6.8, demy, and db10) were used to decompose the SDI time series into different frequency bands, helping to identify patterns and trends in drought signals. The QRNN was compared with a tree-based machine learning (ML) model and two deep learning models: Long Short-Term Memory (LSTM) and Convolutional Neural Network (CNN). Results from stand-alone models showed that the LSTM model outperformed others in predicting SDI-3, while the QRNN model performed best in predicting SDI-6 and SDI-12 in both study regions. In the Stockholm station, the hybrid models achieved acceptable accuracy with bior6.8-LSTM2 (Nash–Sutcliffe efficiency (NSE) = 0.927), bior6.8-QRNN2 (NSE = 0.962), and demy-QRNN2 (NSE = 0.984) performing best for SDI-3, SDI-6, and SDI-12 predictions during the test phase, respectively. For the ELdiem station, the db10-QRNN3 (NSE = 0.926), demy-QRNN3 (NSE = 0.934), and demy-QRNN2 (NSE = 0.981) models demonstrated superior performance during the test phase in predicting SDI-3, SDI-6, and SDI-12, highlighting the robust capability of hybrid models across two case studies. The results indicate that combining WD with ML models can produce more accurate hydrological drought predictions than traditional models.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

Streamflow is an important factor in human life and planetary stability regarding climate change, water resources management, ecosystem sustainability, agricultural productivity, drought and flood management (He et al. 2019; Latifoglu 2020; Tikhamarine et al. 2020; Zuo et al. 2020). Factors such as precipitation, evaporation, flow efficiency, catchment shape, vegetation, and human activities significantly affect streamflow values (Senthil Kumar et al. 2013; Luo et al. 2019). While the flow increases cause floods when they exceed the flow channel, long-term low flow triggers the drought event (Lehner et al. 2006; Brunner et al. 2021). Especially hydrological drought is one of the disasters that depends on the streamflows and negatively affects the ecosystem and human life. Therefore, monitoring and forecasting streamflow drought is critical for providing early warning signals, enabling effective implementation of drought risk management strategies (Aghelpour et al. 2021; Katipoğlu 2023).

Modeling hydrological droughts based on streamflows helps with planning hydroelectric power generation, effective management of water resources, and optimum sizing of water structures. While, the complex and nonlinear nature of climate systems, combined with heterogeneous and often incomplete datasets, makes it difficult to accurately model and predict drought events (Dikshit et al. 2022). Stochastic model approaches and artificial intelligence (AI) techniques are the most preferred models in drought modeling. Stochastic models are chosen for their simplicity and accessibility, while AI techniques are preferred because they can quickly and efficiently reveal nonlinear relationships (Ashrafzadeh et al. 2020; Katipoğlu et al. 2020; Aghelpour et al. 2021; Abdallah et al. 2023). Sharma and Panu (2014) employed river flow sequences to model hydrological drought in Canada. The standardized hydrological index (SHI) series for determining droughts was evaluated according to the median threshold level. To assess drought characteristics, the Markov chain provided satisfactory results. Liu et al. (2016) used a probability estimation network to evaluate hydrological droughts based on the common river flow drought indicator (JSDI) in three basins in Germany, China and the United States. According to the analysis, it has been revealed that JSDI can be used to explain hydrological droughts and to estimate the probability of the proposed network. Bae et al. (2017) evaluated a hydrological drought forecasting system based on the Bayesian method and standardized runoff index (SRI) for South Korea. According to the statistical indicators, the Bayes method has improved the drought prediction potential. It is particularly effective for drought prediction in 1- and 2-month lead times. Aghelpour et al. (2021) used stochastic models and various machine learning (ML) models for simulating hydrological drought according to the Streamflow Drought Index (SDI) in the Khalkaei and Pasikhan rivers.

Researchers have applied various AI techniques for drought prediction due to advanced capabilities of AI in handling complex data and patterns. Mohammed et al. (2022) used random forest (RF), random subspace (RSS), bagging (BG) and random tree (RT) algorithms to predict the Standardized Precipitation Index (SPI) values at 3- and 12-month time scale and analyze the agricultural and hydrological drought situation. According to the results, the most successful algorithm in drought prediction is RT, while the weakest is RSS. Rose and Chithra (2023) used RF and gradient boosting regression model (GBM) algorithms to estimate hydrological drought indices. Various meteorological indicators under climate change scenarios were used to estimate hydrological droughts. Conventional ML methods rely on feature extraction in terms of prediction; however, identifying the most effective features during the training of these algorithms can be challenging (Dargan et al. 2020).

Deep learning (DL) algorithms offer substantial advantages in hydrological predictions due to their flexibility and high accuracy (Pham et al. 2021; Sardar et al. 2022). Long Short-Term Memory (LSTM) reveals long-term dependencies with a developed integration of short-term information (Hochreiter and Schmidhuber 1997; Cho et al. 2014). The main advantage of LSTM is the availability of gates that have the potential to extract or add information (Makridakis et al. 2018). The benefits of Convolutional Neural Networks (CNNs) are that they automatically detect essential features. In addition, Quantile Regression Artificial Neural Networks, instead of modeling the mean, correspond to a specific quantile and provide an advantage by allowing detailed modeling of the general distribution (Susperreguy et al. 2018). Because of these advantages, these algorithms attract the attention of many researchers in hydrological and meteorological forecasting. Wu et al. (2022) combined dynamical models and DL to estimate in 3-month time scale SPI values over China. According to the results of the analysis, it was revealed that the D-LSTM model produced more accurate predictions than the LSTM model. Danandeh Mehr et al. (2023) employed genetic programming, artificial neural networks, CNN and LSTM to estimate Standardized Precipitation Evapotranspiration Index (SPEI) values at 3 and 6-month time scales at two stations in Ankara province, Turkey. As a result, CNN-LSTM outperformed other models. Sardar et al. (2022) combined with Barnacles Mating Optimizer (BMO) to increase the capability of the CNN model. The established model was used for drought prediction in the Kolar regions of Karnataka. The analysis revealed that the prediction accuracy of the CNN model was increased. The analysis revealed that the prediction accuracy of the CNN model was increased. Aghelpour et al. (2023) predicted oceanic signals on droughts using Autoregressive Integrated Moving Average (ARIMA), multilayer perceptron (MLP), and support vector machine (SVM). The stochastic ARIMA model produced more accurate predictions in the artificial intelligence based MLP and SVM models. The study’s outputs indicated that the predictions of ocean signals could be used to increase the success of precipitation and drought forecasting in Iran. Vo et al. (2023) combined LSTM and a climate model (LSTM-CM) and compared the drought prediction success of the hybrid model with a single LSTM. According to statistical indicators, it has been observed that the LSTM-CM model has increased the performance of the LSTM model. While DL require large amounts of labeled data, which can be difficult or costly to obtain in hydrological forecasting, they are also less interpretable than traditional models and computationally expensive, posing challenges in resource-limited environments. To address these issues, this study aims to evaluate, for the first time, the QRNN model for predicting hydrological drought under different climate conditions.

Hybrid approaches combining ML algorithms and wavelet decomposition (WD) techniques have gained popularity in hydrological predictions by enhancing signal separation, reducing noise, and improving model accuracy (Mohammadi et al. 2024). Belayneh et al. (2016) combined boosting and bootstrap methods and ML with wavelet transform to predict SPI values at 3, 12, and 24-month scales in the Awash River Basin of Ethiopia. Analysis results showed that wavelet transform improves ML performance. In addition, the Wavelet boosting SVR hybrid approach predicted meteorological droughts most accurately. Khan et al. (2020) integrated ARIMA and Artificial Neural Network (ANN) with WD to predict the SPI and the Standard Index of Annual Precipitation (SIAP) in Malaysia’s Langat River Basin. According to statistical criteria, it was revealed that the Wavelet-ARIMA-ANN hybrid model produced more accurate predictive results than the single ANN and wavelet-ANN models. Drisya et al. (2021) evaluated the potential of conventional feedforward neural network (FFNN) and wavelet-enabled ANN (WANN) techniques to estimate streamflow values. Statistical indicators showed that the WANN hybrid model was superior to FFNN in predicting streamflow. Taylan et al. (2021) estimated 3-, 6-, 9- and 12-months SPI values by combining WD with adaptive neuro fuzzy inference system (ANFIS), SVM and ANN in Çanakkale, Turkey. Statistical criteria showed that wavelet-based hybrid models gave better results than single ANFIS, ANN and SVM. In addition, the most successful results were obtained with the W-ANFIS model in the 6 months. Altunkaynak and Jalilzadnezamabad (2021) coupled Fuzzy, k-Nearest Neighbor (kNN) and SVM models with discrete wavelet transform (DWT) for accurate estimation of monthly Palmer drought severity index (PDSI) values in the Marmara region of Turkey. The analysis revealed that DWT-based hybrid ML models are superior to single ML models. Ham et al. (2023) compared the performances of wavelet long short-term memory network (WLSTMN), WANN and WSVR algorithms to estimate 6 and 12-month time scales SPI values in the west area of the Democratic People’s Republic of Korea. According to the performance indicators, the WLSTMN model was superior to other models with low error and high correlation values.

This study developed a hydrological forecasting approach based on SDI at 3-, 6-, and 12-month time scales, utilizing various ML and DL techniques in Sudan and Sweden. To enhance model performance, different mother wavelets were employed to divide input time series into subcomponents. Drought prediction accuracy was evaluated using multiple statistical and graphical indicators. The main objectives of this study are twofold: (1) to develop a novel approach for predicting the hydrological drought index using a Quantile Regression Neural Network (QRNN) coupled with wavelet decompositions. (2) To compare the performance of the proposed model with existing DL approaches commonly used in hydrological drought prediction. By achieving these objectives, the current study aims to apply and evaluate effectiveness of the proposed QRNN-wavelet model in accurately forecasting hydrological droughts.

Materials and methods

Study sites

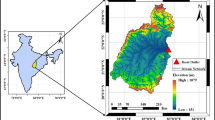

The Stockholm hydrometry station, located in eastern Sweden, serves as one of the case study sites in this research. The elevation of the Stockholm hydrometric station is approximately 110 m above sea level, and the station encompasses a diverse hydrological setting. Stockholm’s climate is influenced by its location on the eastern coast of Sweden and the surrounding water bodies. It is characterized by distinct seasons, with cold winters and relatively mild summers. Precipitation is distributed throughout the year, with varying intensity and frequency. The ELdiem Station, located in the border between Sudan and Ethiopia, serves as another case study site in this study. Situated at the outlet of the Upper Blue Nile Basin (UBNB), where the climate condition across the UBNB is classified as arid to semi-arid. It is characterized by typical climatic conditions, high temperatures and low annual precipitation. These two case study sites (Stockholm hydrometry station in Sweden and ELdiem hydrometry station in Sudan) were carefully selected to capture the variability in hydrological conditions and climate characteristics. Figure 1 shows the box-plots of average monthly streamflow data at ELdiem and Stockholm hydrometric stations.

Streamflow Drought Index (SDI)

The SDI is a standardized indicator used to characterize hydrological drought conditions based on streamflow data over specified time scales. A measure comparable to the Standardized Precipitation index (SPI) called the SDI which can be used to assess the strength and severity of hydrological drought. Nalbantis et al. (Nalbantis and Tsakiris 2009) presented the monthly standard normal flow as the foundation for this index. In the current investigation, SDI was calculated utilizing a process identical to that used to calculate SPI (McKee et al. 1993). As a result, the same algorithms were used to generate this index, with the input being discharge monthly time series rather than rainfall data.

where \({M}_{i,n}\) donates for streamflow volume for the \(n\) month in the \(i\) hydrological year whereas \({\stackrel{-}{M}}_{n}and{L}_{n}\) are represent the average and standard deviation in the \(n\) month across the study period. Monthly streamflow data from Stockholm station (1979–2021) and ELdiem station (1989–2019) were partitioned into 75% for model training and 25% for testing. Table 1 presents the basin’s drought classifications based on SDI data.

Wavelet Decompositions (WD)

WDs are unique techniques for processing signals and analyzing time series data, but in other ways, they represent a combination of prior approaches with more advanced mathematical solutions techniques. WD has the potential to be complimentary to current approaches, such as correlation and spectrum analysis. It additionally includes the benefit of allowing for flexible selection of the mother wavelet, which represents the transform function, based on the properties of the time series; in addition, the WD can be applied on a dataset though continuous (CWT) and discrete (DWT) approaches (Nejad and Nourani 2012; Özger et al. 2020). The CWT is introduced by (Adamowski and Chan 2011) and defined as:

where \(s\) and \(\tau\) denote the scale and translation parameters, \(*\) is the complex conjugate, and \({\Psi}\left(t\right)\) denotes the mother wavelet. CWT demands a great deal of equipment and calculation time; however, DWT uses less equipment and is easier to build than CWT. In DWT, the scales and positions are selected using powers of two, and these choices are referred to as dyadic scales and positions. This is done by changing the wavelet structure as described by Cannas et al. (Cannas et al. 2005):

where j and k are numbers that regulate wavelet dilation and translations. A constant dilation step is\({s}_{0}>1\), and \({\tau}_{0}\) represent the location factor. The most frequent and easiest parameter values are \({s}_{0}=2\)and \({\tau}_{0}=1\) (Nourani et al. 2009). The wavelet technique allows the time-scale domain to be gathered at discrete levels.

Mallat (Mallat 1989) proposed a fast DWT approach consisting of four filters: decomposition low and high pass, reconstruction low and high-pass (Fig. 2). The low-pass filter and high-pass filter, which are also known as scaling and wavelet functions, are utilized for the actual application of Mallat’s approach in place of father and mother wavelet transform. When used with the scaling transform functions, the low-pass filter enables the study of elements with low frequencies, whereas when used with the wavelet transform functions, the high-pass filter enables the analysis of elements with high frequencies. These filters utilized in Mallat’s approach are defined by the selection of mother wavelets (Otazu et al. 2005). A multilayer decomposition technique (Fig. 2) may be obtained, in which the original signal is partitioned into lower-resolution elements (Catalao et al. 2010). For more details Eckley et al. (Eckley et al. 2010) has comprehensive information about Mallat’s approach.

Quantile Regression Neural Network (QRNN)

A quantile regression (QR) approach was introduced by Koenker et al. (Koenker and Bassett Jr 1978). The conditional distribution structure and the variability spectrum of the dependent variables and those that are independent can impact the output of the regression. But QR can reflect these effects more precisely than the conventional mean regression. As opposed to the nonlinear relationship found in most real-world data, this technique only teaches the linear relationship among the variables that respond and the variables that were input under various quantile levels (Xu and Jiang 2011). The main equations of QRNN can be formulated as:

where the range (0, 1) can provide a variety of quantiles, The predicted weight matrix across the inputs layers and the hidden layers is represented by \({T}_{i}={\left\{{t}_{i,j,k}\right\}}_{j=\text{1,2},\dots,J;k=\text{1,2},\dots K}\). The connecting weight vectors across the hidden layers and the outcome layers is represented by \({U}_{i}={\left\{{u}_{i,k}\right\}}_{k=\text{1,2},\dots K}\). The hyperbolic-tangential sigmoid equation is used to represent \({g}_{1}(.)\) as the hidden layers of activation function \({g}_{1}\left(v\right)=\frac{1}{1+{e}^{-v}}\). The outcome of the layer function, denoted by the overall linear model as \({g}_{2}(.)\). A function that is nonlinear called \(f({x}_{i},{T}_{i}(\tau),{U}_{i}(\tau\left)\right)\) is made up of the weighted vectors \({T}_{i}and{U}_{i}\).

The QRNN model’s parameters estimate values for \(T\left(\tau\right)=\left\{{T}_{1},{T}_{2},\dots,{T}_{n}\right\}\), \(U\left(\tau\right)=\left\{{U}_{1},{U}_{2},\dots,{U}_{n}\right\}\) may be obtained by solving the following programming issue.

where n represents the sample size, and the reduction function is described by Eq. (6). The model penalized parameters \({s}_{1}and{s}_{2}\) successfully stop the model to avoid the overfitting which this will increase the accuracy of predictions. Equation (5) can be optimized to get the best estimate values for \(T\left(\tau\right)andU\left(\tau\right)\), which are subsequently stored as \(\widehat{T}\left(\tau\right)and\widehat{U}\left(\tau\right)\). The conditionally quantiles of the outcome variables are determined by entering the two quantile values into Eq. (3).

eXtreme Gradient Boosting (XGB)

Gradient Boosting (GB) is a multiplicative ensemble technique that utilizes uniform decision trees to generate a prediction equation of the form \(\widehat{y}\left(x\right)=F\left(x\right)=\sum\nolimits_{i=1}^{n}{\gamma}_{i}{h}_{i}\left(x\right)\) (Friedman 2001), where \({h}_{i}\left(x\right)\) are uniform tree models, \(i\) is the step-length coefficient affiliated to every tree, and \(n\) is the model duration. At the \(mth\) level, the GB method employs the stencil.

where \({F}_{0}\) is a constant beginning value, and \(\nu\) is donates the learning rate. To reduce the root-mean-square error \(L(.,.)\) assessed on \(N\) set, the decision tree \({h}_{i}\left(x\right)\) at the present phase is selected using the model \({F}_{i1}\) at the preceding stage (Saporetti et al. 2019).

The step-length \({\gamma}_{i}\)is also provided by

The XGB is a cost-effective usage for tree boosting (Chen and Guestrin 2016). It uses systematized learning fitness function, a number of heuristic optimizations, and computational techniques designed for ability to handle sparse data to produce an effective and reliable boosting model.

Convolutional Neural Network (CNN)

The concept of CNNs was developed by (LeCun and Bengio 1995). CNNs perform feature extraction by applying filters to input data groups to reveal the data’s underlying structure. CNNs are used in time series analysis because of their capacity to reveal sequential and spatial information. The structure of a CNN model consists of the input, convolution, pooling, and output layers. In the context of convolutional layers, the convolution operation is applied between the input data and a set of learnable filters (also known as kernels or weights). The convolutional filters are also n-dimensional tensors, typically smaller in size than the input tensor. Each filter is a set of learnable weights that are applied to local regions of the input data. The filters scan through the input tensor by sliding across its spatial dimensions, performing element-wise multiplications and summing the results.

A fully connected subnetwork, often referred to as dense layers, plays an important role in the CNN architecture and it is typically placed at the end of the network. The subnetwork receives the low-level and mid-level features capturing the spatial hierarchies and local patterns as inputs and further extracts higher-level and more abstract features, which are crucial for the final prediction. It allows also to add some non-linear transformations to the extracted features as it consists of fully connected layers, where neurons in a layer are connected to neurons in the previous layer (Aldhyani and Alzahrani 2022). This non-linear activation allows the network to model complex relationships and capture non-linear patterns in the data.

Long Short-Term Memory (LSTM) network

LSTM is a powerful recurrent neural network (RNN) variant for predicting future data with current time series data within a given time frame. However, RNNs can only remember information from the recent time period (Canizo et al. 2019). RNNs can be trained by back propagation but are difficult to use in long input sequences due to the vanishing gradient problem. The LSTM model has been proposed to solve this problem. LSTMs are a type of RNN that uses memory blocks instead of normal neurons in their hidden layers. By incorporating these memory cells and gating mechanisms, LSTM can address the limitations of traditional RNNs through capturing and retaining long-term dependencies. Memory blocks consist of three gate units called input, output and forget gates. It is possible to update and control information through these doors (Hochreiter and Schmidhuber 1997; Sainath et al. 2015). The input gate regulates the flow of information into the memory cell, the forget gate controls the retention or removal of information from the cell, and the output gate governs the output of the memory cell. Thus, progress stages of the LSTM network: (1) If the gate is active, any new input information in the request accumulates in the cell; (2) If the forget gate is active, the state of the previous cell is forgotten; (3) The propagation of the output of the last cell to the final state is evaluated by the output gate (Shi et al. 2015). The LSTM architecture has revolutionized sequence modeling by effectively addressing the vanishing and exploding gradient problems associated with traditional RNNs.

Models’ construction and scenario definition for drought prediction

In this study, drought lag times were incorporated as input variables in the ML models to capture the patterns of drought events. Drought lag times refer to the time lag between past drought conditions and their potential influence on future drought occurrences. By considering drought lag times as inputs of the ML models, then the models can consider the memory effect of drought signals. Also, the previous studies showed drought lag times can be chosen as effective input variables for ML models to predict different types of drought indices (Malik et al. 2019; Adnan et al. 2023). This is particularly important in SDI signal prediction, as the persistence of drought can vary across different time scales. Accruing to the details of Table 2, the lag times considered are t-1, t-2, and t-3, representing the previous time steps before the current prediction. This study’s lag times correspond to the SDI values at different time scales (SDI-3, SDI-6, and SDI-12). For example, the inputs in the QRNN2, XGB2, CNN2, and LSTM2 models include the SDI values at time steps t-1 and t-2. This means that the models consider the SDI values from the two previous time steps to predict the SDI value for the current time step. The three SDI timescales were selected to capture different aspects of drought phenomena and water management needs. While SDI-3 focuses on short-term water demands, SDI-6 addresses medium-term water issues, and SDI-12 captures long-term hydrological patterns essential for strategic planning. In addition, the following empirical formula was used to calculate the decomposition stage:

where k is the total amount of time series data, M is the decomposition stage, and int [] is the integer-part formula. Three decomposition stages were found in this investigation. In order to get sub-time series of 2-day mode (D1), 4-day mode (D2), 8-day mode (D3), and approximated mode (A3) throughout every input set for both training and testing phases, input SDIs signals were decomposed via various mother wavelets.

In this study, six mother wavelets were employed, including haar, sym8, coif5, bior6.8, demy, and db10, which widely used in hydrology studies (Nourani et al. 2014; Dadu and Deka 2016; Rhif et al. 2019). Several techniques have been adopted to create new inputs to time series via the wavelet elements, such as combining the effective components, combining each component for various stages, and utilizing all of the elements for various stages without combining them (Phinyomark et al. 2011; Chakrabarty et al. 2015; Silik et al. 2021; Liu et al. 2022). In this study, we applied the approach of summing each component for various stages (D1, D2, and D3).

The current study also tried to use hyperparameter tuning for DL models to use near-optimal architecture and thus using the random search method that samples randomly hyperparameters from a predefined search space. In the design of our model, we considered the most three important hyperparameters for each model. In LSTM, the search space was [32, 64, 128, 256] for the number of LSTM units, the number of layers has range of integers from 10 to 100, learning rate has possible values of [0.001, 0.005, 0.01], the near-optimal values selected were respectively 128, 25, 0.001. For CNN, the near-optimal hyperparameters were 7 convolutional layers, 5 × 5 for the kernel size in the early layers and 3 × 3 for the last 3 convolutional layers, number of filters in each layer was 32 in the early layers and 64 for the last 3 convolutional layers. The pooling used is maxpooling, the optimizer is Adam and the activation function is ReLu.

Statistical metrics

The performance of employed models in this study was evaluated by comparing the predicted and observed SDI at two study sites. Model performance was evaluated using four metrics, including the Determination Coefficient (R2), Nash-Sutcliffe efficiency (NSE), Root Mean Square Error (RMSE), and the ratio of RMSE to the standard deviation of observed SDI (RSR). These metrics were calculated using the following equations:

where M donates the observed SDI, N donates the predicted SDI with applied models at both study sites. \(\stackrel{-}{M}and\stackrel{-}{N}\) represent the average value of observed and predicted SDI, respectively, the subscript \(i\) specifies the number of samples, while the superscript \(k\) denotes the number of days in the dataset.

Precision, sensitivity, and F1-score metrics were employed to evaluate the performance of the drought phase detection via the proposed models. Precision quantifies the accuracy of the model in identifying true positives while minimizing false positives (Eq. 16). Sensitivity measures the proportion of correctly identified positive samples out of all the actual positive samples (Eq. 17). The F1-score is a combined measure of precision and sensitivity, providing a balanced assessment of the model’s performance (Eq. 18).

where TP, FP, and FN refer to the true positive, false positive, and false negative of drought class detections, respectively.

Results and discussion

Comparison the stand-alone models

The current study evaluates the performance of QRNN against XGB, LSTM, and CNN models to predict hydrological drought at the Stockholm and ELdiem stations. Table 3 provides an overview of the statistical performance of various models (XGB, QRNN, CNN, and LSTM) in predicting the SDI at different time scales (SDI-3, SDI-6, and SDI-12) for Stockholm station. For SDI-3, the XGB models (XGB1, XGB2, and XGB3) demonstrated acceptable performance, with RMSE ranging from 0.16 to 0.37, RSR ranging from 0.16 to 0.37, R2 ranging from 0.865 to 0.974, and NSE ranging from 0.864 to 0.973. The CNN and QRNN models also showed acceptable accuracy in SDI prediction. The LSTM models yielded lower in terms of RMSE, RSR, and R2, but showed acceptable accuracy in terms of NSE values. For SDI-6 simulation, the XGB-based models performed acceptably, with RMSE ranging from 0.10 to 0.25, RSR ranging from 0.10 to 0.25, R2 ranging from 0.939 to 0.991, and NSE ranging from 0.938 to 0.991. The CNN and QRNN models demonstrated similar performance as in SDI-3, while the LSTM-based models showed lower performance metrics compared to the other models. For SDI-12, the XGB-based models preserve their strong performance, with RMSE ranging from 0.07 to 0.17, RSR ranging from 0.07 to 0.16, R2 ranging from 0.974 to 0.996, and NSE ranging from 0.974 to 0.996. The XGB-based model showed the highest performance of predicting SDI-3, SDI-6, and SDI-12 than QRNN, LSTM, and CNN during the training phase across two study sites.

Table 4 presents the statistical performance of different models (XGB, QRNN, CNN, and LSTM) in predicting the drought index at the ELdiem station for various time scales (SDI-3, SDI-6, and SDI-12). In terms of testing phase, the XGB model yielded lower performance when compared to QRNN, LSTM, and CNN models in terms of SDI-3, SDI-6, and SDI-12 at both study sites. The LSTM model yielded better performance of predicting SDI-3 than other applied model at both study sites. The values of RMSE, RSR, R2, and NSE is 0.46, 0.46, 0.793, and 0.787 at the Stockholm station, while across the ELdiem station is 0.46, 0.53, 0.724, and 0.720; respectively. The QRNN model outperformed the XGB, LSTM, and CNN models in terms of predicting the SDI-6 and SDI-12 at both study sites. The values of RMSE, RSR, R2, and NSE of QRNN model is 0.35, 0.37, 0.865, and 0.864 for SDI-6, while for SDI-12 is 0.19, 0.23, 0.947, and 0.946 at the Stockholm station. The value of RMSE, RSR, R2, and NSE of QRNN model is 0.35, 0.40, 0.858, and 0.840 for SDI-6, while for SDI-12 is 0.21, 0.27, 0.929, and 0.928 at the ELdiem station.

From the result of Tables 3 and 4, the two DL models (LSTM and CNN) outperform XGB model and ranked next to QRNN model at the Stockholm and ELdiem stations. The NSE values of the XGB model reported as 0.973, 0.991, and 0.996 at Stockholm, and for ELdiem station reported as 0.995, 0.991, and 0.993 for SDI-3, SDI-6, and SDI-12, respectively. The results showed that the QRNN method is more capable of predicting hydrological drought than the tree-based ML model (XGB) and DL models (LSTM and CNN) in the humid continental (Stockholm station) and arid to semi-arid (ELdiem station) regions. When using the second combination (first- and second-month lag times as input of models) the stand-alone models indicated the highest accuracy and more capable to predict SDI-3, SDI-6, and SDI-12 at the Stockholm station. However, at the ELdiem station the third combination of SDI-3 and SDI-6 showed the best performance, and the second combination of SDI-12 reported better of all the stand-alone models.

For SDI-3, the XGB models (XGB1, XGB2, and XGB3) demonstrated acceptable performance, with RMSE ranging from 0.07 to 0.25, RSR ranging from 0.07 to 0.27, R2 ranging from 0.928 to 0.995, and NSE ranging from 0.928 to 0.995. The CNN and QRNN models also demonstrate reasonable performance, with comparable RMSE, RSR, R2, and NSE values. The LSTM models perform lower in terms of RMSE, RSR, and R2, but still achieve acceptable NSE values. Moving to SDI-6, the XGB models continue to display strong performance, with RMSE ranging from 0.09 to 0.19, RSR ranging from 0.10 to 0.21, R2 ranging from 0.958 to 0.991, and NSE ranging from 0.957 to 0.991. The CNN and QRNN models reported similar performance in SDI prediction as observed in SDI-3, while the LSTM models reported lower performance compared to the other models. For SDI-12, the XGB -based models reported excellent prediction capabilities, with RMSE ranging from 0.08 to 0.13, RSR ranging from 0.08 to 0.13, R2 ranging from 0.983 to 0.993, and NSE ranging from 0.983 to 0.993. The XGB models demonstrated strong predictive capabilities across different time scales of the SDI at the ELdiem station.

Figure 3 shows the density scatter plots of the best developed SDI-3, SDI-6, and SDI-12 predicting models during the testing phase at the Stockholm station. This plot visualizes the distribution and concentration of data points in relation to observed and predicted time series data of SDI-3, SDI-6, and SDI-12. In this plot, the relationships between the observed SDIs and predicted SDIs were shown which, yellow-colored points show high concentrations and blue-colored points show low concentrations. The R2 values of the XGB2, LSTM2, CNN2, and QRNN2 scenarios reported from 0.702 to 0.924, 0.793 to 0.944, 0.782 to 0.941, and 0.790 to 0.947, respectively. Based on R2 values, LSTM2 showed the best performance in SDI-3 prediction and QRNN2 modeled SDI-6 and SDI-12 by highest R2 of 0.865 and 0.947 in Stockholm station.

Figure 4 presents the density scatter plots for the ELdiem station, illustrating the distribution and concentration of data points related to the observed and predicted time series data of SDI-3, SDI-6, and SDI-12. During the testing period, various prediction models, namely XGB3, LSTM3, CNN3, and QRNN3 scenarios, were evaluated based on their respective R2 values. The R2 values ranged from 0.722 to 0.728 for SDI-3, 0.777 to 0.876 for SDI-6, 0.871 to 0.930 for SDI-12 during the testing phase at the ELdiem station. The QRNN3 model demonstrated the best performance in predicting SDI-3, the CNN3 model outperformed the other models in SDI-6 prediction, and LSTM2 resulted the best accuracy for SDI-12 prediction, in the ELdiem station.

Comparison of hybrid WD models

In this study, the performance of XGB, LSTM, CNN, and QRNN models for predicting hydrological drought at two study sites were evaluated. The best input combination of the above stand-alone models was selected to improve through signal transforming by using the WD. Six mothers WD were chosen in this study including haar, sym8, coif5, bior6.8, demy, and db10. Table 5 presents the statistical performance of six wavelet mothers combined with different ML models for predicting the Drought Index (SDI-3) at the Stockholm and Eldiem stations. Again, the XGB models outperform LSTM, CNN, and QRNN models across all the wavelet mothers during the training phase in both study regions. In the Stockholm station, the best models during testing phase are haar-LSTM2 (NSE = 0.872), sym8-QRNN2 (NSE = 0.925), coif5-QRNN2 (NSE = 0.924), bior6.8-LSTM2 (NSE = 0.927), demy-LSTM2 (NSE = 0.925), and db10-QRNN2 (NSE = 0.922). The best models in the ELdiem station during testing phase are haar-QRNN3 (NSE = 0.827), sym8-LSTM3 (NSE = 0.906), coif5-QRNN3 (NSE = 0.897), bior-QRNN3 (NSE = 0.905), demy-QRNN3 (NSE = 0.917), and db10-QRNN3 (NSE = 0.926). For the Stockholm station by using the “haar” WD mother, the XGB model with the second configuration (XGB2) achieved an RMSE of 0.11, RSR of 0.11, R2 of 0.988, and NSE of 0.988 during training. During testing, the XGB2 model obtained an RMSE of 0.42, RSR of 0.42, R2 of 0.822, and NSE of 0.821. Similarly, LSTM2, CNN2, and QRNN2 models were trained and tested with the “haar” wavelet mother. For the Stockholm station, other wavelet mothers such as “sym8,” “coif5,” “bior6.8,” and “demy” were also used. The performance metrics for each model and wavelet mother combination during training and testing phases are presented in Table 5. The wavelet mothers used are “haar,” “sym8,” “coif5,” “bior6.8,” and “demy.” The models include XGB3, LSTM3, CNN3, and QRNN3. The statistical performance of each model and wavelet mother combination is provided for both training and testing.

The harr wavelet mother shows lower performance than other mothers but had better performance than the stand-alone models at both study sites. The bior6.8 wavelet mother shows the best performance in Stockholm station, while the db10 wavelet mother shows the best performance in the ELdiem station. The hybrid XGB model shows lower performance during the testing phase at both study sites while the hybrid QRNN model reflects good performance in various wavelet mothers at both study sites.

Table 6 provides statistical performance of six different wavelet mothers combined with various ML models for predicting the SDI-6 at two studied stations. The wavelet mother “haar” combined with XGB2 model showed RMSE = 0.11 and R2 = 0.988, as accurate SDI predictions during the training phase in the Stockholm Station. During the testing phase, the RMSE increased to 0.45 and R2 decreased to 0.787. In the Eldiem station, the wavelet mother “haar” combined with XGB3 model resulted RMSE equal to 0.04 and R2 equal to 0.998 during the training phase. During the testing phase, the RMSE increased to 0.41 and R2 decreased to 0.801. At the Stockholm station, the wavelet mother “haar” combined with the XGB2 model reported an RMSE = 0.11 and RSR = 0.11 during the training phase. In the testing phase, the RMSE increased to 0.45, while the RSR increased to 0.47, which showed a minor decrease in performance. Similar performances reported by combinations of other wavelet mothers with ML models at the Stockholm station. The results demonstrated the potential effectiveness of these combinations in accurately predicting the SDI signals, although the performance may vary depending on the specific wavelet mother and ML model used.

Table 7 presents the statistical metrics of six wavelet mothers coupled with ML models for predicting the SDI-12 at the Stockholm and ELdiem stations. Among the models, XGB2 demonstrated high accuracy with low RMSE, RSR, and high R2 values for both training and testing phases in Stockholm station. The LSTM2 model also showed acceptable accuracy in SDI signal predictions. For the ELdiem station, combinations of wavelet mothers with ML models reported different performance. The XGB2 model resulted in excellent capability, with low RMSE, RSR, and high R2 during training and testing phases. Table 7 also highlights the high capability of the XGB2 model while coupling different wavelet mothers for predicting SDI-12 at both the Stockholm and ELdiem stations. The LSTM2, CNN2, and QRNN2 models also reported promising results in SDIs signal prediction.

Overall performance of drought time series prediction

Figure 5 represents the group of Taylor diagrams (Taylor 2001) that compare the performance of stand-alone and hybrid models with wavelet transformation for predicting SDI-3, SDI-6, and SDI-12 against the observed SDI at the Stockholm and ELdiem stations. The close point of model prediction to the observation point represents the best accuracy of prediction. For comparing the effectiveness of various model predictions, the Taylor diagram is super beneficial. It can be observed that the stand-alone (XGB, LSTM, CNN, and QRNN) models’ performances have comparatively small values, as shown by the red dashed line in Fig. 5 where the RMSE is higher than 0.4 for SDI-3 and 0.2 for SDI-6 and SDI-12 for both study sites. In general, the performances of hybrid models with six mother’s wavelet transformation were better than the stand-alone models at both study sites.

Taylor diagram groups of stand-alone and hybrid wavelet models to compare the observed and predicted SDI in different time scales based on correlation coefficient (radial black dash line), root mean square error (red line arc) and standard deviation (radial blue dot arc). For (a), (b), and (c) for the Stockholm station and (d), (e), and (f) for the ELdiem station for SDI-3, SDI-6, and SDI-12, respectively

As shown in the box-plots of Fig. 6, it is obvious that the stand-alone models are relatively different when compared with observed SDI value, while the WD type hybrid models are found to have the best distribution SDI values. Besides, both stand-alone and hybrid XGB models have the lower performance. For both regions, the hybrid QRNN model outperforms the two DL models and the tree model in predicting SDI-3, SDI-6, and SDI-12.

The best hybrid models were proposed in this study to predict SDI-3, SDI-6, and SDI-12 in Stockholm and ELdiem stations can be shown with scatter plots in Fig. 7. Areas with a higher density of data points are typically represented by lighter colors (e.g., yellow), indicating a greater concentration between observed and predicted SDI. The three mother’s wavelets including bior, db10, and demy showed highest capability in prediction than harr, sym8, and coif5 mothers at both study sites. The bior6.8-LSTM2 model showed highest accuracy to predict the SDI-3, while the bior-QRNN2 and db10-QRNN2 models provided best performance to predict SDI-6 and SDI-12 in the Stockholm station as shown in Fig. 7a-c. in terms of ELdiem station, the QRNN model with db10 and demy mothers.

Despite the promising results, several limitations of the current study should be acknowledged. The models’ dependency on high-quality, continuous streamflow data, which may not be available in many watersheds, particularly in developing regions. Moreover, while the study evaluated model performance in two different regions, the applicability of the proposed approach to other climatic zones has not been tested. Furthermore, the computational requirements for real-time operational implementation of these hybrid models, particularly for early warning systems, have not been thoroughly assessed.

Drought phase detection via proposed models

Figure 8 shows the precision (y-axis), sensitivity (x-axis), and F1-score (contour lines) of streamflow drought phase detection for different models and different SDI time-steps during the testing period. For the Eldiem station, the figure shows that precision values range from 60% to 100%, which reports the accuracy of correctly identifying streamflow drought phases. The sensitivity values vary from 73.33% to 100%, representing the models’ ability to detect actual streamflow drought phases. For the Stockholm station, precision values range from 80.65% to 100%, while sensitivity values range from 87.1% to 98.18%, and corresponding values of F1-scores range from 85.47% to 98.9%. At the Eldiem station, the models reported different accuracy in detecting drought phases. XGB3 shows moderate precision values ranging from 66.67% to 76.92% in different SDI time-steps (in Eldiem station). LSTM3 shows higher precision, ranging from 75% to 91.67% in Eldiem station. CNN3 and QRNN3 demonstrate similar precision, ranging from 66.67% to 83.33% and 64.29–83.33%, respectively in Eldiem station. The coif5-QRNN3 and db10-QRNN3 show the highest precisions in all SDI time steps, this issue proves its ability to accurately identify drought phases. The sym8-LSTM3 and coif5-QRNN3 reported sensitivity from 81.82–100% in drought phase detection of both stations and all SDI time steps.

At the Stockholm station, XGB3 and LSTM3 reported precision from 80.65% to 89.36% and from 81.69 to 91.3%, which shows their capabilities to detect drought phases accurately. CNN3 and QRNN3 resulted acceptable capabilities, with precision of 83.82–87.76% for CNN3, and 81.16–91.3% for QRNN3. The coif5-QRNN3 and db10-QRNN3 achieved the highest precisions (100%) for SDI-12 in Stockholm station. Sensitivity of sym8-LSTM3 and bior5-QRNN3 resulted from 91.94% to 96.36% and from 95.16% to 98.18%, respectively, in Stockholm station. When considering sensitivity, bior-QRNN3 and coif5-QRNN3 emerge as the top-performing models in Stockholm station. These models report a high sensitivity to drought events, effectively capturing a large number of true positive drought cases. The model like XGB3 shows lower sensitivity scores, which report a higher likelihood of false negatives drought phase detections. In addition, coif5-QRNN3 and bior-QRNN3 achieved high F1-scores, which show a balanced performance between precision and sensitivity in drought phase detection.

Table 8 presents the true class drought detection using XGB3, LSTM3, CNN3, QRNN3, haar-QRNN3, sym8-LSTM3, coif5-QRNN3, bior6.8-QRNN3, demy-QRNN3, and db10-QRNN3 models during their testing phases. The XGB3 detected drought true class with 60.71% for SDI-3, 58.33% for SDI-6, and 73.8% for SDI-12 in the Eldiem station. Also, in the Eldiem station, LSTM3 resulted true drought detection with 53.57% for SDI-3, 66.66% for SDI-6, and 84.52% for SDI-12. CNN3 and QRNN3 have similar performances, ranging from 52.38% to 83.33% for the different SDIs. The haar-QRNN3 model reported better drought true class detection (ranging from 65.47% to 90.47%). For the Stockholm station, XGB3 resulted drought true class detection of 56.41%, 64.1%, and 80.12% for SDI-3, SDI-6, and SDI-12, respectively. LSTM3 showed drought true class detection ranging from 61.53% to 85.89% in the different SDIs. CNN3 and QRNN3 results in drought true class detection ranged from 63.46% (for CNN3) and 59.61% (for QRNN3) to 87.17% (for CNN3 and QRNN3). The haar-QRNN3 and sym8-LSTM3 models detected drought phase with acceptable performances, ranging from 62.82% to 90.38% and from 73.07–88.46%, respectively. The coif5-QRNN3 had high accuracy in SDI-12 (95.51%), the bior6.8-QRNN3 and demy-QRNN3 also resulted satisfactory performances with true drought class detection ranging from 73.71% to 89.1% and 68.58–92.94%, respectively in Stockholm station.

Conclusions

Streamflow droughts significantly impact water availability, making accurate detection of drought phases essential for water resource management. This study compared stand-alone models (XGB, LSTM, CNN, and QRNN) and hybrid models with six WD mothers (haar, sym8, coif5, bior6.8, demy, and db10) in predicting streamflow drought index (SDI-3, SDI-6, and SDI-12) in humid continental (Stockholm, Sweden) and semi-arid (ELdiem, Sudan) regions. Results showed all combinations achieved good predictive accuracy, with LSTM outperforming in SDI-3 prediction and QRNN excelling in SDI-6 and SDI-12 predictions at both stations. Notably, hybrid models significantly enhanced the prediction accuracy of stand-alone models in both humid continental and semi-arid regions, underscoring their potential for improving SDI forecasting across diverse climatic conditions. In the Stockholm station, the bior6.8-LSTM2, bior6.8-QRNN2, and demy-QRNN2 models showed highest accuracy than other hybrid models during the testing stage in terms of prediction SDI-3, SDI-6, and SDI-12, respectively. In the ELdiem station, the db10-QRNN3, demy-QRNN3, and demy-QRNN2 models performed better than other hybrid models during the testing stage in terms of prediction SDI-3, SDI-6, and SDI-12, respectively. The QRNN model provided a more accurate prediction of hydrological drought than applied models in two studied regions. Further studies could investigate the potential of ensemble approaches combining multiple wavelet decomposition techniques to capture different aspects of drought signals simultaneously. Moreover, incorporating uncertainty quantification methods into the hybrid models would provide confidence intervals for drought predictions, enhancing their practical utility for water resource management decisions.

Data availability

Data will be made available on request.

References

Abdallah M, Mohammadi B, Nasiri H, Katipoğlu OM, Abdalla MAA, Ebadzadeh MM (2023) Daily global solar radiation time series prediction using variational mode decomposition combined with multi-functional recurrent fuzzy neural network and quantile regression forests algorithm. Energy Rep 10:4198–4217. https://doi.org/10.1016/j.egyr.2023.10.070

Adamowski JHF, Chan (2011) A wavelet neural network conjunction model for groundwater level forecasting. J Hydrol 407(1–4):28–40. https://doi.org/10.1016/j.jhydrol.2011.06.013

Adnan RM, Dai H-L, Kuriqi A, Kisi O, Zounemat-Kermani M (2023) Improving drought modeling based on new heuristic machine learning methods. Ain Shams Eng J 102168. https://doi.org/10.1016/j.asej.2023.102168

Aghelpour P, Bahrami-Pichaghchi H, Varshavian V (2021) Hydrological drought forecasting using multi-scalar streamflow drought index, stochastic models and machine learning approaches. North Iran Stoch Environ Res Risk Assess 35(8):1615–1635

Aghelpour P, Bahrami-Pichaghchi H, Varshavian V, Norooz-Valashedi R (2023) Evaluating the predictability of eight Atmospheric-Oceanic signals affecting Iran’s droughts, employing intelligence based and stochastic methods. Adv Space Res 71(5):2394–2415

Aldhyani THH, Alzahrani A (2022) Framework for predicting and modeling stock market prices based on deep learning algorithms. Electronics 11(19):3149. https://doi.org/10.3390/electronics11193149

Altunkaynak AA, Jalilzadnezamabad (2021) Extended lead time accurate forecasting of palmer drought severity index using hybrid wavelet-fuzzy and machine learning techniques. J Hydrol 601:126619

Ashrafzadeh A, Kişi O, Aghelpour P, Biazar SM, Masouleh MA (2020) Comparative study of time series models, support vector machines, and GMDH in forecasting long-term evapotranspiration rates in northern Iran. J Irrig Drain Eng 146(6):04020010

Bae D-H, Son K-H, So J-M (2017) Utilization of the bayesian method to improve hydrological drought prediction accuracy. Water Resour Manage 31:3527–3541

Belayneh A, Adamowski J, Khalil B, Quilty J (2016) Coupling machine learning methods with wavelet transforms and the bootstrap and boosting ensemble approaches for drought prediction. Atmos Res 172:37–47

Brunner MI, Slater L, Tallaksen LM, Clark M (2021) Challenges in modeling and predicting floods and droughts: a review. Wiley Interdisciplinary Reviews: Water 8(3):e1520

Canizo M, Triguero I, Conde A, Onieva E (2019) Multi-head CNN–RNN for multi-time series anomaly detection: an industrial case study. Neurocomputing 363:246–260. https://doi.org/10.1016/j.neucom.2019.07.034

Cannas B, Fanni A, Sias G, Tronci S, Zedda MK (2005) River flow forecasting using neural networks and wavelet analysis. Geophys Res Abstr 7: 08651

Catalao JPS, Pousinho HMI, Mendes VMF (2010) Hybrid wavelet-PSO-ANFIS approach for short-term wind power forecasting in Portugal. IEEE Trans Sustain Energy 2(1):50–59

Chakrabarty A, De A, Gunasekaran A, Dubey R (2015) Investment horizon heterogeneity and wavelet: overview and further research directions. Phys A: Stat Mech Appl 429:45–61. https://doi.org/10.1016/j.physa.2014.10.097

Chen T, Guestrin C (2016) Xgboost: a scalable tree boosting system. In: Proceedings of the 22nd ACM SIGKDD international conference on knowledge discovery and data mining. Association for Computing Machinery, pp 785–794. https://doi.org/10.1145/2939672.2939785

Cho K, Van Merriënboer B, Gulcehre C, Bahdanau D, Bougares F, Schwenk H, Bengio Y (2014) Learning phrase representations using RNN encoder-decoder for statistical machine translation. arXiv preprint arXiv:1406.1078

Dadu KS, Deka PC (2016) Applications of wavelet transform technique in hydrology—a brief review. Urban Hydrology Watershed Manage socio-economic Aspects, pp 241–253. https://doi.org/10.1007/978-3-319-40195-9_19

Danandeh Mehr A, Rikhtehgar Ghiasi A, Yaseen ZM, Sorman AU, Abualigah L (2023) A novel intelligent deep learning predictive model for meteorological drought forecasting. J Ambient Intell Humaniz Comput 14(8):10441–10455. https://doi.org/10.1007/s12652-022-03701-7

Dargan S, Kumar M, Ayyagari MR, Kumar G (2020) A survey of deep learning and its applications: a new paradigm to machine learning. Arch Comput Methods Eng 27(4):1071–1092. https://doi.org/10.1007/s11831-019-09344-w

Dikshit A, Pradhan B, Santosh M (2022) Artificial neural networks in drought prediction in the 21st century–A scientometric analysis. Appl Soft Comput 114:108080. https://doi.org/10.1016/j.asoc.2021.108080

Drisya J, Kumar DS, Roshni T (2021) Hydrological drought assessment through streamflow forecasting using wavelet enabled artificial neural networks. Environ Dev Sustain 23:3653–3672

Eckley IA, Nason GP, Treloar RL (2010) Locally stationary wavelet fields with application to the modelling and analysis of image texture. J Roy Stat Soc: Ser C (Appl Stat) 59(4):595–616

Friedman JH (2001) Greedy function approximation: a gradient boosting machine. Ann Stat 29(5): 1189–1232

Ham YS, Sonu KB, Paek US, Om KC, Jong SI, Jo KR (2023) Comparison of LSTM network, neural network and support vector regression coupled with wavelet decomposition for drought forecasting in the western area of the DPRK. Nat Hazards 116(2): 2619–2643

He X, Luo J, Zuo G, Xie J (2019) Daily runoff forecasting using a hybrid model based on variational mode decomposition and deep neural networks. Water Resour Manage 33: 1571–1590. https://doi.org/10.1007/s11269-019-2183-x

Hochreiter SJ, Schmidhuber (1997) Long short-term memory. Neural Comput 9(8):1735–1780

Hong X, Guo S, Zhou Y, Xiong L (2015) Uncertainties in assessing hydrological drought using streamflow drought index for the upper Yangtze River basin. Stoch Env Res Risk Assess 29:1235–1247. https://doi.org/10.1007/s00477-014-0949-5

Katipoğlu OM (2023) Prediction of streamflow drought index for short-term hydrological drought in the semi-arid Yesilirmak Basin using Wavelet transform and artificial intelligence techniques. Sustainability 15(2):1109

Katipoğlu OM, Acar R, Şengül S (2020) Comparison of meteorological indices for drought monitoring and evaluating: a case study from Euphrates basin. Turk J Water Clim Change 11(S1):29–43

Khan MMH, Muhammad NS, El-Shafie A (2020) Wavelet based hybrid ANN-ARIMA models for meteorological drought forecasting. J Hydrol 590:125380

Koenker R, Bassett Jr G (1978) Regres quantiles. Econometrica 46(1): 33–50

Latifoglu L (2020) Evaluating stream flow forecasting performance using adaptive network based fuzzy logic inference system, artificial neural networks with feature selection. Eurasia Proc Sci Technol Eng Math 11:125–130

LeCun Y, Bengio Y (1995) Convolutional networks for images, speech, and time series. Handb Brain Theory Neural Networks 3361(10):1995

Lehner B, Döll P, Alcamo J, Henrichs T, Kaspar F (2006) Estimating the impact of global change on flood and drought risks in Europe: a continental, integrated analysis. Clim Change 75:273–299

Liu Z, Törnros T, Menzel L (2016) A probabilistic prediction network for hydrological drought identification and environmental flow assessment. Water Resour Res 52(8):6243–6262

Liu Q, Yang J, Zhang K (2022) An improved empirical wavelet transform and sensitive components selecting method for bearing fault. Measurement 187:110348

Luo X, Yuan X, Zhu S, Xu Z, Meng L, Peng J (2019) A hybrid support vector regression framework for streamflow forecast. J Hydrol 568:184–193

Makridakis S, Spiliotis E, Assimakopoulos V (2018) Statistical and machine learning forecasting methods: concerns and ways forward. PLoS ONE 13(3):e0194889

Malik A, Kumar A, Singh RP (2019) Application of heuristic approaches for prediction of hydrological drought using multi-scalar streamflow drought index. Water Resour Manage 33:3985–4006. https://doi.org/10.1007/s11269-019-02350-4

Mallat SG (1989) A theory for multiresolution signal decomposition: the wavelet representation. IEEE Trans Pattern Anal Mach Intell 11(7):674–693

McKee TB, Doesken NJ, Kleist J (1993) The relationship of drought frequency and duration to time scales. Proceedings of the 8th Conference on Applied Climatology, Boston

Mohammadi B, Vazifehkhah S, Duan Z (2024) A conceptual metaheuristic-based framework for improving runoff time series simulation in glacierized catchments. Eng Appl Artif Intell 127:107302. https://doi.org/10.1016/j.engappai.2023.107302

Mohammed S, Elbeltagi A, Bashir B, Alsafadi K, Alsilibe F, Alsalman A, Zeraatpisheh M, Széles A, Harsányi E (2022) A comparative analysis of data mining techniques for agricultural and hydrological drought prediction in the eastern Mediterranean. Comput Electron Agric 197:106925

Nalbantis IG, Tsakiris (2009) Assessment of hydrological drought revisited. Water Resour Manage 23:881–897

Nejad FHV, Nourani (2012) Elevation of wavelet denoising performance via an ANN-based streamflow forecasting model. Int J Comput Sci Manage Res 1(4):764–770

Nourani V, Alami MT, Aminfar MH (2009) A combined neural-wavelet model for prediction of Ligvanchai watershed precipitation. Eng Appl Artif Intell 22(3):466–472

Nourani V, Baghanam AH, Adamowski J, Kisi O (2014) Applications of hybrid wavelet–artificial intelligence models in hydrology: a review. J Hydrol 514:358–377. https://doi.org/10.1016/j.jhydrol.2014.03.057

Otazu X, González-Audícana M, Fors O, Núñez J (2005) Introduction of sensor spectral response into image fusion methods. Application to wavelet-based methods. IEEE Trans Geosci Remote Sens 43(10):2376–2385

Özger M, Başakın EE, Ekmekcioğlu Ö, Hacısüleyman V (2020) Comparison of wavelet and empirical mode decomposition hybrid models in drought prediction. Comput Electron Agric 179:105851. https://doi.org/10.1016/j.compag.2020.105851

Pham BT, Luu C, Van Phong T, Trinh PT, Shirzadi A, Renoud S, Asadi S, Van Le H, von Meding J, Clague JJ (2021) Can deep learning algorithms outperform benchmark machine learning algorithms in flood susceptibility modeling? J Hydrol 592:125615

Phinyomark A, Limsakul C, Phukpattaranont P (2011) Application of wavelet analysis in EMG feature extraction for pattern classification. Meas Sci Rev 11(2):45–52

Rhif M, Ben Abbes A, Farah IR, Martínez B, Sang Y (2019) Wavelet transform application for/in non-stationary time-series analysis: a review. Appl Sci 9(7):1345. https://doi.org/10.3390/app9071345

Rose MJ, Chithra N (2023) Tree-based ensemble model prediction for hydrological drought in a tropical river basin of India. Int J Environ Sci Technol 20(5): 4973–4990

Sainath TN, Vinyals O, Senior A, Sak H (2015) Convolutional, long short-term memory, fully connected deep neural networks. 2015 IEEE international conference on acoustics, speech and signal processing (ICASSP), IEEE

Saporetti CM, da Fonseca LG, Pereira E (2019) A lithology identification approach based on machine learning with evolutionary parameter tuning. IEEE Geosci Remote Sens Lett 16(12):1819–1823. https://doi.org/10.1109/LGRS.2019.2911473

Sardar V, Chaudhari S, Anchalia A, Kakati A, Paudel A, Bhavana B (2022) Ensemble learning with CNN and BMO for Drought Prediction. 2022 IEEE 3rd Global Conference for Advancement in Technology (GCAT), IEEE

Senthil Kumar A, Goyal MK, Ojha C, Singh R, Swamee P (2013) Application of artificial neural network, fuzzy logic and decision tree algorithms for modelling of streamflow at Kasol in India. Water Sci Technol 68(12):2521–2526

Sharma TCUS, Panu (2014) Modeling of hydrological drought durations and magnitudes: experiences on Canadian streamflows. J Hydrology: Reg Stud 1:92–106

Shi X, Chen Z, Wang H, Yeung DY, Wong WK, Woo WC (2015) Convolutional LSTM network: A machine learning approach for precipitation nowcasting. Advances in neural information processing systems, 28. https://proceedings.neurips.cc/paper_files/paper/2015/file/07563a3fe3bbe7e3ba84431ad9d055af-Paper.pdf

Silik A, Noori M, Altabey WA, Ghiasi R (2021) Selecting optimum levels of wavelet multi-resolution analysis for time‐varying signals in structural health monitoring. Struct Control Health Monit 28(8):e2762. https://doi.org/10.1002/stc.2762

Susperreguy MI, Davis-Kean PE, Duckworth K, Chen M (2018) Self‐concept predicts academic achievement across levels of the achievement distribution: domain specificity for math and reading. Child Dev 89(6):2196–2214

Taylan ED, Terzi Ö, Baykal T (2021) Hybrid wavelet–artificial intelligence models in meteorological drought estimation. J Earth Syst Sci 130:1–13

Taylor KE (2001) Summarizing multiple aspects of model performance in a single diagram. J Geophys Research: Atmos 106(D7):7183–7192

Tikhamarine Y, Souag-Gamane D, Ahmed AN, Kisi O, El-Shafie A (2020) Improving artificial intelligence models accuracy for monthly streamflow forecasting using grey Wolf optimization (GWO) algorithm. J Hydrol 582:124435

Vo TQ, Kim S-H, Nguyen DH, Bae D-H (2023) LSTM-CM: a hybrid approach for natural drought prediction based on deep learning and climate models. Stoch Enviro Res Risk Assess 37(6): 2035–2051

Wu Z, Yin H, He H, Li Y (2022) Dynamic-LSTM hybrid models to improve seasonal drought predictions over China. J Hydrol 615:128706

Xu QC, Jiang (2011) Quantile partial adjustment model and its application. J Quant Tech Econ 8:115–133

Zuo G, Luo J, Wang N, Lian Y, He X (2020) Decomposition ensemble model based on variational mode decomposition and long short-term memory for streamflow forecasting. J Hydrol 585:124776. https://doi.org/10.1016/j.jhydrol.2020.124776

Funding

Open access funding provided by Swedish Meteorological and Hydrological Institute. This research received no external funding.

Author information

Authors and Affiliations

Contributions

Babak Mohammadi: Conceptualization, Methodology, Software, Validation, Formal analysis, Data Curation, Writing - Original Draft, Writing - Review & Editing, Visualization, Project administration. Mohammed Abdallah: Conceptualization, Methodology, Software, Formal analysis, Investigation, Data Curation, Writing - Original Draft, Visualization. Rachid Oucheikh: Methodology, Software, Investigation, Writing - Original Draft. Okan Mert Katipoğlu: Investigation, Writing - Original Draft. Majid Cheraghalizadeh: Software, Investigation, Visualization.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Communicated by: Hassan Babaie

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Mohammadi, B., Abdallah, M., Oucheikh, R. et al. Enhancing streamflow drought prediction: integrating wavelet decomposition with deep learning and quantile regression neural network models. Earth Sci Inform 18, 232 (2025). https://doi.org/10.1007/s12145-025-01736-w

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s12145-025-01736-w