Abstract

When a person uses a single modality to obtain information from multiple sources, those sources compete for the modality’s resources, which affects perception. To avoid such resource conflicts, we investigated whether a person being led by a robot could multitask efficiently by recognizing the robot through tactile perception instead of visual perception. A wheeled mobile robot that we developed was used for the investigation. Each participant’s gaze was measured using a wearable eye-tracker to analyze the allocation of visual resources. As a subtask, participants performed a one-back calculation task on numbers placed on the wall of the experimental environment. The participants’ workload on the subtask was evaluated using the NASA Task Load Index (NASA-TLX). The experimental results showed that the participants looked at their surroundings longer when walking hand in hand with the robot than when walking alongside it. However, there was no significant difference in the subjective evaluation of mental workload between the two conditions. This study provides new insights into how hand-holding navigation by a robot affects human gaze behavior and mental workload, and represents a new way for service robots to use physical interaction.

Data Availability

The datasets generated and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Wickens CD (2008) Multiple resources and mental workload. Hum Factors 50(3):449–455. https://doi.org/10.1518/001872008X288394

Bremner P, Pipe A, Melhuish C, Fraser M, Subramanian S (2009) Conversational gestures in human-robot interaction. In: 2009 IEEE international conference on systems, man and cybernetics. pp 1645–1649. https://doi.org/10.1109/ICSMC.2009.5346903

Kondo Y, Takemura K, Takamatsu J, Ogasawara T (2010) Smooth human-robot interaction by interruptible gesture planning. In: 2010 IEEE/ASME international conference on advanced intelligent mechatronics. pp 213–218. https://doi.org/10.1109/AIM.2010.5695883

Salem M, Kopp S, Wachsmuth I, Rohlfing K, Joublin F (2012) Generation and evaluation of communicative robot gesture. Int J Soc Robot 4(2):201–217. https://doi.org/10.1007/s12369-011-0124-9

Kanda T, Ishiguro H, Ono T, Imai M, Nakatsu R (2002) Development and evaluation of an interactive humanoid robot robovie. In: Proceedings 2002 IEEE international conference on robotics and automation (Cat. No.02CH37292), vol 2. pp 1848–1855. https://doi.org/10.1109/ROBOT.2002.1014810

Kato S, Ohshiro S, Itoh H, Kimura K (2004) Development of a communication robot ifbot. In: IEEE international conference on robotics and automation, 2004. Proceedings. ICRA ’04. 2004, vol 1. pp 697–702 . https://doi.org/10.1109/ROBOT.2004.1307230

Hieida C, Abe K, Nagai T, Omori T (2020) Walking hand-in-hand helps relationship building between child and robot. J Robot Mechatron 32(1):8–20. https://doi.org/10.20965/jrm.2020.p0008

Nosaka T, Fukamachi K, Takeda Y, De Silva PRS, Okada M (2015) The effective communication between “mako no te’’ and human through the patterns of grasping. J Hum Interface Soc 17(2):191–200. https://doi.org/10.11184/his.17.2_191 (in Japanese)

Kochigami K, Okada K, Inaba M (2018) Effect of walking with a robot on child-child interactions. In: 2018 27th IEEE international symposium on robot and human interactive communication (RO-MAN). IEEE, pp 468–471. https://doi.org/10.1109/ROMAN.2018.8525506

Atsumi Y, Yokoya M, Yamada K, Morita J, Hirayama T, Enokibori Y, Mase K (2017) Consideration of task load evaluation in cognitive and physical multitask situations. In: The 31st annual conference of the Japanese society for artificial intelligence, 2017, pp. 1K33in1–1K33in1. The Japanese society for artificial intelligence . https://doi.org/10.11517/pjsai.JSAI2017.0_1K33in1. (in Japanese)

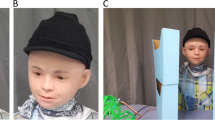

Nakata Y, Yagi S, Yu S, Wang Y, Ise N, Nakamura Y, Ishiguro H (2022) Development of ‘ibuki’ an electrically actuated childlike android with mobility and its potential in the future society. Robotica 40(4):933–950. https://doi.org/10.1017/S0263574721000898

Yu S, Nishimura Y, Yagi S, Ise N, Wang Y, Nakata Y, Nakamura Y, Ishiguro H (2020) A software framework to create behaviors for androids and its implementation on the mobile android“ ibuki”. In: Companion of the 2020 ACM/IEEE international conference on human-robot interaction. pp 535–537. https://doi.org/10.1145/3371382.3378245

ROS Navigation stack. http://wiki.ros.org/ja/navigation. Accessed 30 June 2022

ROS gmapping package. http://wiki.ros.org/gmapping. Accessed 30 June 2022

CSRT Tracker. https://docs.opencv.org/3.4/d2/da2/classcv_1_1TrackerCSRT.html. Accessed 30 June 2022

Hart SG, Staveland LE (1988) Development of nasa-tlx (task load index): results of empirical and theoretical research. In: Hancock PA, Meshkati N (eds.) Human mental workload, advances in psychology, vol 52. North-Holland, pp 139–183. https://doi.org/10.1016/S0166-4115(08)62386-9

Funding

This study was funded by the Japan Science and Technology Agency Exploratory Research for Advanced Technology (JST ERATO; Grant No. JPMJER1401).

Ethics declarations

Conflict of interest

The authors declare that they have no conflicts of interest.

About this article

Cite this article

Ise, N., Nakata, Y., Nakamura, Y. et al. Gaze Motion and Subjective Workload Assessment While Performing a Task Walking Hand in Hand with a Mobile Robot. Int J of Soc Robotics 14, 1875–1882 (2022). https://doi.org/10.1007/s12369-022-00919-5

DOI: https://doi.org/10.1007/s12369-022-00919-5