Abstract

Most existing results on attribute reduction, particularly on strategies for searching reducts, consider one and only one granularity. How to derive reducts from multiple granularities has rarely been addressed. A key advantage of multi-granularity attribute reduction is that it makes it possible to investigate how the performance of reducts varies across granularities. From this point of view, this paper systematically studies the concept of Sequential Granularity Attribute Reduction (SGAR). Unlike previous attribute reduction approaches, SGAR aims to find multiple reducts derived from a family of ordered granularities. Assuming that the reduct obtained at the previous granularity can guide the computation of the reduct at the current granularity, the idea of three-way decision is introduced into the search for sequential granularity reducts. The three cases in this process are: (1) the reduct at the previous granularity is exactly the reduct at the current granularity; (2) the reduct at the previous granularity is not the reduct at the current granularity; (3) the reduct at the previous granularity may be the reduct at the current granularity. Accordingly, a three-way based forward greedy search is designed to compute the sequential granularity reduct. The main advantage of this strategy is that it reduces the number of times candidate attributes must be evaluated. Experimental results on 12 UCI data sets demonstrate that: (1) the three-way based search derives reducts in less time than several state-of-the-art acceleration algorithms; (2) the sequential granularity reducts obtained by the proposed search provide comparable classification performance. This study suggests new directions for the problem of attribute selection.
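The abstract does not specify the measure, the reduct constraint, or the rule that separates the three cases, so the following Python sketch fills those gaps with assumptions and is not the authors' algorithm. It assumes that each granularity is a neighborhood radius, that the measure is the neighborhood dependency degree |POS_A(D)|/|U|, and that a subset qualifies when it reaches the dependency of the full attribute set. The threshold `alpha` and all function names (`dependency`, `forward_greedy`, `prune`, `sequential_three_way`) are hypothetical. Case (1) reuses the previous reduct directly, case (3) warm-starts the forward greedy search from it, and case (2) restarts the search from the empty set.

```python
import numpy as np


def dependency(X, y, attrs, radius):
    """Neighborhood dependency |POS_A(D)| / |U| for attribute subset A (assumed measure)."""
    idx = np.asarray(sorted(attrs), dtype=int)  # sorted: the same subset gives identical arithmetic
    sub = X[:, idx]
    dist = np.sqrt(((sub[:, None, :] - sub[None, :, :]) ** 2).sum(axis=2))
    neigh = dist <= radius                      # delta-neighborhood relation under A
    same = y[:, None] == y[None, :]
    # A sample is in the positive region if every sample in its neighborhood shares its label.
    return float(np.all(same | ~neigh, axis=1).mean())


def forward_greedy(X, y, radius, target, start=()):
    """Add the best candidate attribute until the target dependency is met."""
    red, evals = list(start), 0
    g = dependency(X, y, red, radius)
    while g < target:
        cands = [a for a in range(X.shape[1]) if a not in red]
        if not cands:
            break
        scores = [dependency(X, y, red + [a], radius) for a in cands]
        evals += len(cands)                     # one evaluation per candidate attribute
        best = int(np.argmax(scores))
        red.append(cands[best])
        g = scores[best]
    return red, evals


def prune(X, y, red, radius, target):
    """Backward check: drop any attribute whose removal keeps the target met."""
    red, evals = list(red), 0
    for a in list(red):
        trial = [b for b in red if b != a]
        evals += 1
        if dependency(X, y, trial, radius) >= target:
            red = trial
    return red, evals


def sequential_three_way(X, y, radii, alpha=0.9):
    """Hypothetical three-way reuse of the previous granularity's reduct."""
    all_attrs = range(X.shape[1])
    reducts, prev, total = [], None, 0
    for r in radii:                                 # granularities in a fixed order
        target = dependency(X, y, all_attrs, r)     # constraint: match the full attribute set
        if prev is None:
            red, n = forward_greedy(X, y, r, target)
        else:
            g_prev = dependency(X, y, prev, r)
            total += 1
            if g_prev >= target:                    # case (1): previous reduct still qualifies
                red, n = prev, 0
            elif g_prev >= alpha * target:          # case (3): possibly a reduct, warm start
                red, n = forward_greedy(X, y, r, target, start=prev)
            else:                                   # case (2): not a reduct, search from scratch
                red, n = forward_greedy(X, y, r, target)
        red, m = prune(X, y, red, r, target)        # remove attributes that became redundant
        total += n + m
        reducts.append(red)
        prev = red
    return reducts, total
```

The counter `total` tracks subset evaluations, which is the cost the abstract says the three-way strategy reduces: an accepted granularity costs one check plus a backward pass instead of a full forward scan. For example, with `X = np.array([[0.0, 0.3], [0.1, 0.9], [0.9, 0.2], [1.0, 0.8]])`, `y = np.array([0, 0, 1, 1])` and radii `[0.2, 0.3]`, attribute 0 alone separates the classes at both radii, so the second granularity falls into case (1) and `sequential_three_way(X, y, [0.2, 0.3])` returns `([[0], [0]], 5)`.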


References

Chen DG, Zhao SY (2010) Local reduction of decision system with fuzzy rough sets. Fuzzy Sets Syst 161:1871–1883

Chen DG, Zhao SY, Zhang L, Yang YP, Zhang X (2012) Sample pair selection for attribute reduction with rough set. IEEE Trans Knowl Data Eng 24:2080–2093

Chen HM, Li TR, Luo C, Horng SJ, Wang GY (2015) A decision-theoretic rough set approach for dynamic data mining. IEEE Trans Fuzzy Syst 23:1958–1970

Chen Y, Liu KY, Song JJ, Fujita H, Yang XB, Qian YH (2020) Attribute group for attribute reduction. Inf Sci 535:64–80

Chen Y, Song JJ, Liu KY, Lin YJ, Yang XB (2020) Combined accelerator for attribute reduction: a sample perspective. Mathematical Problems in Engineering, Article: 2350627

Dai JH, Xu Q (2013) Attribute selection based on information gain ratio in fuzzy rough set theory with application to tumor classification. Appl Soft Comput 13:211–221

Hu XG, Yang HG (2020) DRU-net: a novel U-net for biomedical image segmentation. IET Image Proc 14:192–200

Hu QH, Pedrycz W, Yu DR, Lang J (2010) Selecting discrete and continuous features based on neighborhood decision error minimization. IEEE Trans Syst Man Cybern Part B 40:137–150

Hu QH, An S, Yu X, Yu DR (2011) Robust fuzzy rough classifiers. Fuzzy Sets Syst 183:26–43

Jiang ZH, Yang XB, Yu HL, Liu D, Wang PX, Qian YH (2019) Accelerator for multi-granularity attribute reduction. Knowl-Based Syst 177:145–158

Jiang ZH, Liu KY, Yang XB, Yu HL, Fujita H, Qian YH (2020) Accelerator for supervised neighborhood based attribute reduction. Int J Approx Reason 119:122–150

Ju HR, Yang XB, Song XN, Qi YS (2014) Dynamic updating multigranulation fuzzy rough set: approximations and reducts. Int J Mach Learn Cybern 5:981–990

Ju HR, Yang XB, Yu HL, Li TJ, Yu DJ, Yang JY (2016) Cost-sensitive rough set approach. Inf Sci 355–356:282–298

Ju HR, Pedrycz W, Li HX, Ding WP, Yang XB, Zhou XZ (2019) Sequential three-way classifier with justifiable granularity. Knowl-Based Syst 163:103–119

Kong QZ, Zhang XW, Xu WH, Xie ST (2020) Attribute reducts of multi-granulation information system. Artif Intell Rev 53:1353–1371

Kuncheva LI, Whitaker CJ (2003) Measures of diversity in classifier ensembles and their relationship with the ensemble accuracy. Mach Learn 51:181–207

Lang GM, Li QG, Cai MJ, Yang T, Xiao QM (2014) Incremental approaches to knowledge reduction based on characteristic matrices. Int J Mach Learn Cybern 5:1–20

Li SQ, Harner EJ, Adjeroh DA (2011) Random KNN feature selection-A fast and stable alternative to Random Forests. BMC Bioinform 12:450

Li Y, Si J, Zhou GJ, Huang SS, Chen SC (2014) FREL: a stable feature selection algorithm. IEEE Trans Neural Netw Learn Syst 26:1388–1402

Li HX, Zhang LB, Huang B, Zhou XZ (2020) Cost-sensitive dual-bidirectional linear discriminant analysis. Inf Sci 510:283–303

Liang JY, Wang F, Dang CY, Qian YH (2012) An efficient rough feature selection algorithm with a multi-granulation view. Int J Approx Reason 53:912–926

Lin YJ, Hu QH, Liu JH, Li JJ, Wu XD (2017) Streaming feature selection for multi-label learning based on fuzzy mutual information. IEEE Trans Fuzzy Syst 6:1491–1507

Liu Y, Huang WL, Jiang YL, Zeng ZY (2014) Quick attribute reduct algorithm for neighborhood rough set model. Inf Sci 271:65–81

Liu JB, Li HX, Zhou XZ, Huang B, Wang TX (2019) An optimization-based formulation for three-way decisions. Inf Sci 495:185–214

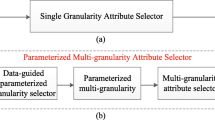

Liu KY, Yang XB, Fujita H, Liu D, Yang X, Qian YH (2019) An efficient selector for multi-granularity attribute reduction. Inf Sci 505:457–472

Liu KY, Yang XB, Yu HL, Mi JS, Wang PX, Chen XJ (2019) Rough set based semi-supervised feature selection via ensemble selector. Knowl-Based Syst 165:282–296

Liu KY, Yang XB, Yu HL, Fujita H, Chen XJ, Liu D (2020) Supervised information granulation strategy for attribute reduction. Int J Mach Learn Cybern. https://doi.org/10.1007/s13042-020-01107-5

Min F, He HP, Qian YH, Zhu W (2011) Test-cost-sensitive attribute reduction. Inf Sci 181:4928–4942

Niu JJ, Huang CC, Li JH, Fan M (2018) Parallel computing techniques for concept-cognitive learning based on granular computing. Int J Mach Learn Cybern 9:1785–1805

Pawlak Z (1991) Rough sets: theoretical aspects of reasoning about data. Kluwer, Dordrecht

Qian YH, Liang JY, Pedrycz W, Dang CY (2010) Positive approximation: an accelerator for attribute reduction in rough set theory. Artif Intell 174:597–618

Qian YH, Xu H, Liang JY, Liu B, Wang JT (2015) Fusing monotonic decision trees. IEEE Trans Knowl Data Eng 27:2717–2728

Qian YH, Wang Q, Cheng HH, Liang JY, Dang CY (2015) Fuzzy-rough feature selection accelerator. Fuzzy Sets Syst 258:61–78

Qian YH, Liang XY, Wang Q, Liang JY, Liu B, Skowron A, Yao YY, Ma JM, Dang CY (2018) Local rough set: a solution to rough data analysis in big data. Int J Approx Reason 97:38–63

Rao XS, Yang XB, Yang X, Chen XJ, Liu D, Qian YH (2020) Quickly calculating reduct: an attribute relationship based approach. Knowl Based Syst 200:106014

Sarkar C, Cooley S, Srivastava J (2014) Robust feature selection technique using rank aggregation. Appl Artif Intell 28:243–257

Shi Y, Mi YL, Li JH, Liu WQ (2019) Concurrent concept-cognitive learning model for classification. Inf Sci 496:65–81

Ślȩzak D (2002) Approximate entropy reducts. Fund Inf 53:365–390

Sun BZ, Ma WM, Zhao HY (2016) An approach to emergency decision making based on decision-theoretic rough set over two universes. Soft Comput 20:3617–3628

Tsang Eric CC, Sun BZ, Ma WM (2015) General relation-based variable precision rough fuzzy set. Int J Mach Learn Cybern 6:1–11

Tsang Eric CC, Hu QH, Chen DG (2016) Feature and instance reduction for PNN classifiers based on fuzzy rough sets. Int J Mach Learn Cybern 7:1–11

Wang YB, Chen XJ, Dong K (2019) Attribute reduction via local conditional entropy. Int J Mach Learn Cybern 10:3619–3634

Wang TX, Li HX, Zhou XZ, Huang B, Zhu HB (2020) A prospect theory-based three-way decision model. Knowl Based Syst. https://doi.org/10.1016/j.knosys.2020.106129

Wang TX, Li HX, Zhang LB, Zhou XZ, Huang B (2020) A three-way decision model based on cumulative prospect theory. Inf Sci 519:74–92

Wei W, Liang JY, Wang JH, Qian YH (2013) Decision-relative discernibility matrices in the sense of entropies. Int J Gen Syst 42:721–738

Wu WZ, Leung Y (2013) Optimal scale selection for multi-scale decision tables. Int J Approx Reason 54:1107–1129

Xu, SP., Yang, XB., Song, XN., Yu, HL.: Prediction of protein structural classes by decreasing nearest neighbor error rate. In: 2015 International conference on machine learning & cybernetics, Guangzhou, China, July 12–15, pp 7–13 (2015)

Xu WH, Li WT (2016) Granular computing approach to two-way learning based on formal concept analysis in fuzzy datasets. IEEE Trans Cybern 46:366–379

Xu SP, Yang XB, Yu HL, Yu DJ, Yang JY, Tsang Eric CC (2016) Multi-label learning with label-specific feature reduction. Knowl-Based Syst 104:52–61

Yang XB, Yao YY (2018) Ensemble selector for attribute reduction. Appl Soft Comput 70:1–11

Yang XB, Qi YS, Song XN, Yang JY (2013) Test cost sensitive multigranulation rough set: model and minimal cost selection. Inf Sci 250:184–199

Yang XB, Qi Y, Yu HL, Song XN, Yang JY (2014) Updating multigranulation rough approximations with increasing of granular structures. Knowl-Based Syst 64:59–69

Yang XB, Xu SP, Dou HL, Song XN, Yu HL, Yang JY (2017) Multigranulation rough set: a multiset based strategy. Int J Comput Intell Syst 10:277–292

Yang L, Xu WH, Zhang XY, Sang BB (2020) Multi-granulation method for information fusion in multi-source decision information system. Int J Approx Reason 122:47–65

Yao YY (2016) A triarchic theory of granular computing. Granul Comput 1:145–157

Yao YY (2016) Three-way decisions and cognitive computing. Cogn Comput 8:543–554

Yao YY, Zhao Y (2009) Discernibility matrix simplification for constructing attribute reducts. Inf Sci 179:867–882

Yao YY, Zhao LQ (2012) A measurement theory view on the granularity of partitions. Inf Sci 213:1–13

Yao YY, Zhao Y, Wang J (2008) On reduct construction algorithms, Transactions on Computational. Science 2:100–117

Yu L, Ding C, Loscalzo S (2008) Stable feature selection via dense feature groups, In: ACM international conference on knowledge discovery and data mining, Las Vegas, Nevada, USA, August, pp 803–811

Zhang ML, Wu L (2015) LIFT: multi-label learning with label-specific features. IEEE Trans Pattern Anal Mach Intell 37:107–120

Zhang X, Mei CL, Chen DG, Li JH (2016) Feature selection in mixed data: a method using a novel fuzzy rough set-based information entropy. Pattern Recogn 56:1–15

Zhao Y, Yao YY, Luo F (2007) Data analysis based on discernibility and indiscernibility. Inf Sci 177:4959–4976

Zhao SY, Chen H, Li CP, Du XY, Sun H (2015) A novel approach to building a robust fuzzy rough classifier. IEEE Trans Fuzzy Syst 23:769–786

Zhou J, Miao DQ (2011) \(\beta\)-Interval attribute reduction in variable precision rough set model. Soft Comput 15:1643–1656

Acknowledgements

This work is supported by the Natural Science Foundation of China (nos. 62076111, 62006099, 62006128, 61906078), the Natural Science Foundation of Jiangsu, China (no. BK20191457), the Open Project Foundation of Intelligent Information Processing Key Laboratory of Shanxi Province (no. CICIP2020004) and the Key Laboratory of Oceanographic Big Data Mining & Application of Zhejiang Province (no. OBDMA202002).

About this article

Cite this article

Wang, X., Wang, P., Yang, X. et al. Attribution reduction based on sequential three-way search of granularity. Int. J. Mach. Learn. & Cyber. 12, 1439–1458 (2021). https://doi.org/10.1007/s13042-020-01244-x

DOI: https://doi.org/10.1007/s13042-020-01244-x