Abstract

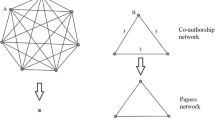

What do the social graphs of the Technical Program Committees of conferences look like, and how can studying these graphs help us decide whether there is a bias in the paper selection process? This work empirically studies the structure and characteristics of program committees’ social graphs and examines whether there is a bias in favor of the collaborators of program committee members. Specifically, it checks whether a paper written by a past collaborator of a program committee member is more likely to be accepted to the conference, which may be interpreted as indicating a bias on the part of the program committee members. Twelve ACM/IEEE conferences were studied over several years, and the social network of each annual meeting of a conference was constructed. The vertex set of such a network consists of the program committee members and the authors of the papers accepted to the meeting, and two researchers are neighbors in the network if they were collaborators (namely, co-authored a paper) prior to the meeting. For each meeting network, the coverage of the program committee was calculated, namely, the ratio between the number of authors who are collaborators of program committee members and the total number of author vertices of the meeting. The coverage of the real meetings’ social networks was compared to the coverage in artificial meetings. A program committee is viewed as coverage-biased if its coverage is significantly higher than that of the corresponding artificial meetings of the conference. Our findings show that, although some program committees are coverage-biased, for most conferences the coverage in the real meetings is the same as, and sometimes lower than, that of the artificial ones. This indicates that, on average, there is probably no bias in favor of papers written by collaborators of the program committee members for these high-quality conferences.
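
The coverage ratio defined above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function name `coverage`, the representation of prior collaborations as name pairs, and the treatment of an author who also sits on the committee (counted as covered only through a different committee member) are all assumptions made for illustration.

```python
from typing import Iterable, Set, Tuple


def coverage(pc_members: Set[str],
             authors: Set[str],
             prior_coauthorships: Iterable[Tuple[str, str]]) -> float:
    """Fraction of author vertices with at least one prior collaborator on the PC.

    Assumption: each pair (a, b) is one co-authorship dated before the meeting.
    """
    if not authors:
        raise ValueError("meeting has no authors")
    covered = set()
    for a, b in prior_coauthorships:
        if a == b:
            continue  # ignore self-loops
        # An edge covers an author when its other endpoint is a PC member.
        if a in authors and b in pc_members:
            covered.add(a)
        if b in authors and a in pc_members:
            covered.add(b)
    return len(covered) / len(authors)


# Hypothetical toy meeting: two PC members, four authors.
pc = {"pc1", "pc2"}
authors = {"a1", "a2", "a3", "a4"}
edges = [("a1", "pc1"), ("a2", "a3"), ("pc2", "a3")]
print(coverage(pc, authors, edges))  # 0.5
```

In the toy meeting, `a1` and `a3` each have a prior collaborator on the committee, so two of the four authors are covered.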

Notes

Our thanks to Bernard Rous and Craig Rodkin from ACM for providing additional data that are not used in the current work.

Our data are available upon request.

In fact, because name disambiguation is a challenging task for the DBLP database, errors are likely to exist, including duplicate users and other inaccuracies. One may observe cases where two different authors have the same name, or cases where the same author appears as more than one DBLP user, possibly with different variations of their name. This problem is intensified when researchers, usually women, change their name significantly (for example, after marriage). We handle the latter by our own means, with a high rate of success. Consequently, we assume that, mainly after our own corrections, these errors are negligible.

It may be advantageous to consider an extended weighted version of our model, where each edge has a weight depending on the number of papers that the two researchers wrote together and/or on how far in the past those papers were written (e.g., a paper written in the year of the meeting may present a stronger connection between the researchers than one written ten years earlier). The study of such an extended weighted model is beyond the scope of the current work, and is left for future work.
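
As a purely hypothetical illustration of such a weighting (the source does not define one), the sketch below adds up the joint papers of two researchers and discounts each paper geometrically by its age. The function name `edge_weight` and the `decay` parameter are assumptions, not part of the original model.

```python
from typing import Iterable


def edge_weight(joint_paper_years: Iterable[int],
                meeting_year: int,
                decay: float = 0.9) -> float:
    """Hypothetical edge weight: one unit per joint paper, discounted by age.

    A paper from the meeting year contributes 1; a paper k years older
    contributes decay ** k. Papers dated after the meeting are ignored.
    """
    return sum(decay ** (meeting_year - year)
               for year in joint_paper_years
               if year <= meeting_year)


# Two joint papers, one from the meeting year and one from the year before.
print(edge_weight([2015, 2014], meeting_year=2015, decay=0.5))  # 1.5
```

With `decay=1.0` the weight reduces to the plain count of joint papers, so the sketch covers both ingredients mentioned in the note.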

The coverage parameter \(\kappa\) is explained later in the text and discussed in detail in Sect. 4.

The ‘experience’ measure for seniority is based on the indication in the DBLP database of the first year in which the researcher had published a paper. This year is defined as the researcher’s ‘start’ year and the experience is simply the difference between the year of the meeting and the researcher’s start year.
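
A minimal sketch of this measure, assuming the start year has already been extracted from DBLP (the function and parameter names are illustrative):

```python
def experience(meeting_year: int, start_year: int) -> int:
    """Years between a researcher's first recorded publication and the meeting."""
    return meeting_year - start_year


# A researcher whose first DBLP paper appeared in 2003, at a 2015 meeting.
print(experience(2015, 2003))  # 12
```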

As implemented in the Mathematica program.

Note that in the PA graph the chairs (the first three nodes) form a clique, which is less favorable for the starness measure. However, since the starness measure allows some closure of triangles, the PA model still exhibits stars, though somewhat fewer than the ER model.

We also compared to the ER model, but this comparison is uninformative: since edges in this model are chosen uniformly, the relative betweenness of the chairs is close to 1.

Using the PA model for the cliqueness measure is irrelevant, since the first three nodes in this model, which we choose to be the chairs, form a clique by definition.

Using the PA model is irrelevant here, since in the PA model all nodes are connected by definition.

References

ACM Digital Library: http://dl.acm.org/

Aiken A (2011) Advice for program chairs. SIGPLAN Not 46(4):19

Albert R, Barabási A (2002) Statistical mechanics of complex networks. Rev Mod Phys 74(1):47–97

Anderson T (2009) Conference reviewing considered harmful. ACM SIGOPS Oper Syst Rev 43(2):108–116

Avin C, Lotker Z, Peleg D, Turkel I (2015) Social network analysis of program committees and paper acceptance fairness. In: Proceedings of the 2015 IEEE/ACM international conference on advances in social networks analysis and mining 2015, pp 488–495. ACM

Bowen DD, Perloff R, Jacoby J (1972) Improving manuscript evaluation procedures. Am Psychol 27(3):221

Erdős P, Rényi A (1960) On the evolution of random graphs. Publ Math Inst Hung Acad 5:17–61

Fisher RA (1935) The design of experiments (1960, Hafner: New York)

Freeman LC (1977) A set of measures of centrality based on betweenness. Sociometry 40:35–41

Freeman LC (1979) Centrality in social networks conceptual clarification. Soc Netw 1(3):215–239

Laband DN, Piette MJ (1994) Favoritism versus search for good papers: empirical evidence regarding the behavior of journal editors. J Political Econ 102:194–203

Ley M (2009) DBLP: some lessons learned. Proc VLDB Endow 2:1493–1500

Martens B, Cerovsek T (2004) Experiences with web-based scientific collaboration: managing the submission and review process of scientific conferences. In: ELPUB

Newman MEJ (2001) The structure of scientific collaboration networks. Proc Natl Acad Sci 98(2):404–409. doi:10.1073/pnas.98.2.404

Oswald AJ (2008) Can we test for bias in scientific peer-review?

Papagelis M, Plexousakis D, Nikolaou PN (2005) Confious: managing the electronic submission and reviewing process of scientific conferences. In: Kitsuregawa M et al (eds) Web information systems engineering. Springer, Heidelberg, pp 711–720

Pesenhofer A, Mayer R, Rauber A (2006) Improving scientific conferences by enhancing conference management systems with information mining capabilities. In: 1st international conference on digital information management, pp 359–366. IEEE

Pesenhofer A, Mayer R, Rauber A et al (2008) Automating the management of scientific conferences using information mining techniques. J Digit Inf Manag 6(1):3

Schulzrinne H (2009) Double-blind reviewing: more placebo than miracle cure? ACM SIGCOMM Comput Commun Rev 39(2):56–59

Stehbens W (1999) Basic philosophy and concepts underlying scientific peer review. Med Hypotheses 52(1):31–36

Vardi MY (2009) Conferences vs. journals in computing research. Commun ACM 52(5):5

Watts DJ, Strogatz SH (1998) Collective dynamics of ‘small-world’ networks. Nature 393(6684):440–442

Acknowledgments

All authors were supported in part by the Israel Science Foundation (Grant 1549/13). The second author was also supported in part by the Fondation des Sciences Mathématiques de Paris, by the Ministry of Science, Technology and Space, Israel, by the French–Israeli project MAIMONIDE 31768XL, and by the French–Israeli Laboratory FILOFOCS.

Additional information

This work is part of the Ph.D. Thesis of Itzik Turkel.

About this article

Cite this article

Avin, C., Lotker, Z., Peleg, D. et al. On social networks of program committees. Soc. Netw. Anal. Min. 6, 18 (2016). https://doi.org/10.1007/s13278-016-0328-y

DOI: https://doi.org/10.1007/s13278-016-0328-y