Abstract

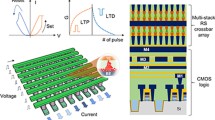

Although deep neural networks (DNNs) have achieved significant breakthroughs and show impressive potential as a general solution in artificial intelligence (AI), DNN workloads typically require billions of floating-point multiply-and-accumulate (MAC) operations, which places heavy demands on hardware resources, power consumption, and communication bandwidth. Computing-in-memory (CIM) architectures, which integrate memory and computing, are promising for DNNs, and those based on spintronic memories are especially attractive because of their high energy efficiency and good endurance. In this work, we leveraged coupled magnetic tunnel junctions (MTJs), driven by the interplay of field-free spin-orbit torque (SOT) and spin-transfer torque (STT) effects, to realize two stateful CIM paradigms for ternary MAC operations. Based on both paradigms, we further demonstrated highly parallel array structures that can function both as memory and as CIM for ternary neural networks (TNNs). Our results showed that the area overhead for CIM is only about 0.8% of the memory array. A comparison with a CPU, a GPU, and other state-of-the-art works illustrated the power-consumption advantage of our design.
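To make the term "ternary MAC" concrete, the following is a minimal software sketch, not the circuit-level scheme of this work. It assumes that both inputs and weights are restricted to the values -1, 0, and +1; the function name `ternary_mac` is hypothetical and chosen only for illustration. Under that assumption, each product is itself -1, 0, or +1, so the accumulation needs no floating-point multiplier.

```python
from typing import Sequence


def ternary_mac(inputs: Sequence[int], weights: Sequence[int]) -> int:
    """Return sum(x * w) for operands restricted to {-1, 0, +1}.

    Illustrative assumption: inputs and weights are both ternary.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")

    acc = 0
    for x, w in zip(inputs, weights):
        if x not in (-1, 0, 1) or w not in (-1, 0, 1):
            raise ValueError("ternary operands must be -1, 0, or +1")
        if x == 0 or w == 0:
            continue  # a zero operand gives a zero product, so skip it
        # Both operands are nonzero: equal signs give +1, opposite signs give -1.
        acc += 1 if x == w else -1
    return acc


if __name__ == "__main__":
    print(ternary_mac([1, -1, 0, 1], [1, 1, -1, -1]))
```

In this sketch the multiplication reduces to a sign comparison and a skip on zero, which is the property that makes ternary networks attractive for logic-style in-memory evaluation.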

Acknowledgements

This work was supported by the Beijing Nova Program of the Beijing Municipal Science and Technology Commission (Nos. Z201100006820042 and Z211100002121014) and by the National Natural Science Foundation of China (Grant No. 61871008). Lichuan Luo and He Zhang contributed equally to this work. On behalf of all authors, the corresponding author (Wang Kang) states that there is no conflict of interest.

Cite this article

Luo, L., Liu, D., Zhang, H. et al. SpinCIM: spin orbit torque memory for ternary neural networks based on the computing-in-memory architecture. CCF Trans. HPC 4, 421–434 (2022). https://doi.org/10.1007/s42514-022-00108-w

DOI: https://doi.org/10.1007/s42514-022-00108-w