Abstract

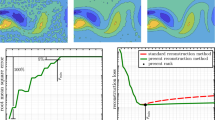

Kernel adaptive filtering (KAF) maps input samples into a reproducing kernel Hilbert space and uses a stochastic gradient approximation to address learning problems. However, the growth of the weighted network of a KAF based on existing kernel functions leads to high computational complexity. This paper introduces a reduced Gaussian kernel that is a finite-order Taylor expansion of a decomposed Gaussian kernel, and derives the corresponding reduced Gaussian kernel least-mean-square (RGKLMS) algorithm. Via an implicit feature map, the proposed algorithm avoids the sustained growth of the weighted network in a nonstationary environment. To verify its performance, extensive simulations are conducted on time-series prediction and nonlinear channel equalization; the results support the claim that the RGKLMS algorithm is a universal approximator under suitable conditions. The simulation results also demonstrate that the RGKLMS algorithm achieves a steady-state mean-square error comparable to that of other algorithms at a much lower computational complexity.

References

B. Chen, S. Zhao, P. Zhu, J.C. Principe, Quantized kernel least mean square algorithm. IEEE Trans. Neural Netw. Learn. Syst. 23(1), 22–32 (2012)

B. Chen, N. Zheng, J.C. Principe, Sparse kernel recursive least squares using l1 regularization and a fixed-point sub-iteration, in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5257–5261 (2014)

J. Choi, A.C.C. Lima, S. Haykin, Kalman filter-trained recurrent neural equalizers for time-varying channels. IEEE Trans. Commun. 53(3), 472–480 (2005)

N. Cristianini, J. Shawe-Taylor, An Introduction to Support Vector Machines and Other Kernel-Based Learning Methods (Cambridge University Press, Cambridge, 2000)

Y. Engel, S. Mannor, R. Meir, The kernel recursive least-squares algorithm. IEEE Trans. Signal Process. 52(8), 2275–2285 (2004)

S. Fine, K. Scheinberg, Efficient SVM training using low-rank kernel representations. J. Mach. Learn. Res. 2(2), 243–264 (2002)

W. Gao, J. Chen, C. Richard, J. Huang, Convergence analysis of the augmented complex KLMS algorithm with pre-tuned dictionary, in IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 2006–2010 (2015)

W. Gao, J. Chen, C. Richard, J.C.M. Bermudez, J. Huang, Online dictionary learning for kernel LMS. IEEE Trans. Signal Process. 62(11), 2765–2777 (2014)

Z. Hu, M. Lin, C. Zhang, Dependent online kernel learning with constant number of random Fourier features. IEEE Trans. Neural Netw. Learn. Syst. 26(10), 2464 (2015)

J. Platt, A resource-allocating network for function interpolation. Neural Comput. 3, 213–225 (1991)

G. Kechriotis, E. Zervas, E.S. Manolakos, Using recurrent neural networks for adaptive communication channel equalization. IEEE Trans. Neural Netw. 5(2), 267–278 (1994)

K. Li, J.C. Principe, Transfer learning in adaptive filters: the nearest-instance-centroid-estimation kernel least-mean-square algorithm. IEEE Trans. Signal Process. PP(99), 1–1 (2017)

Q. Liang, J.M. Mendel, Equalization of nonlinear time-varying channels using type-2 fuzzy adaptive filters. IEEE Trans. Fuzzy Syst. 8(5), 551–563 (2000)

W. Liu, I. Park, J.C. Principe, An information theoretic approach of designing sparse kernel adaptive filters. IEEE Trans. Neural Netw. 20(12), 1950–1961 (2009)

W. Liu, I. Park, Y. Wang, J.C. Principe, Extended kernel recursive least squares algorithm. IEEE Trans. Signal Process. 57(10), 3801–3814 (2009)

W. Liu, P.P. Pokharel, J.C. Principe, The kernel least-mean-square algorithm. IEEE Trans. Signal Process. 56(2), 543–554 (2008)

W. Liu, J.C. Principe, Kernel affine projection algorithms. EURASIP J. Adv. Signal Process. 2008(1), 784292 (2008)

W. Liu, J.C. Principe, S. Haykin, Kernel Adaptive Filtering: A Comprehensive Introduction (Wiley, Hoboken, 2010)

S. Maji, A.C. Berg, Max-margin additive classifiers for detection, in IEEE International Conference on Computer Vision, pp. 40–47 (2010)

K.R. Muller, S. Mika, G. Ratsch, K. Tsuda, B. Scholkopf, An introduction to kernel-based learning algorithms. IEEE Trans. Neural Netw. 12(2), 181 (2001)

J.C. Patra, P.K. Meher, G. Chakraborty, Nonlinear channel equalization for wireless communication systems using Legendre neural networks. Signal Process. 89(11), 2251–2262 (2009)

T.K. Paul, T. Ogunfunmi, A kernel adaptive algorithm for quaternion-valued inputs. IEEE Trans. Neural Netw. Learn. Syst. 26(10), 2422–2439 (2015)

F. Porikli, Constant time O(1) bilateral filtering, in IEEE Conference on Computer Vision and Pattern Recognition, pp. 1–8 (2008)

A. Rahimi, B. Recht, Random features for large-scale kernel machines, in International Conference on Neural Information Processing Systems, pp. 1177–1184 (2007)

C. Richard, J.C.M. Bermudez, P. Honeine, Online prediction of time series data with kernels. IEEE Trans. Signal Process. 57(3), 1058–1067 (2009)

M. Ring, B.M. Eskofier, An approximation of the Gaussian RBF kernel for efficient classification with SVMs. Pattern Recogn. Lett. 84, 107–113 (2016)

M.A. Takizawa, M. Yukawa, Efficient dictionary-refining kernel adaptive filter with fundamental insights. IEEE Trans. Signal Process. 64(16), 4337–4350 (2016)

S. Wang, Y. Zheng, C. Ling, Regularized kernel least mean square algorithm with multiple-delay feedback. IEEE Signal Process. Lett. 23(1), 98–101 (2015)

C.K.I. Williams, M. Seeger, Using the Nyström method to speed up kernel machines, in International Conference on Neural Information Processing Systems, pp. 661–667 (2000)

L. Xu, D. Huang, Y. Guo, Robust blind learning algorithm for nonlinear equalization using input decision information. IEEE Trans. Neural Netw. Learn. Syst. 26(12), 3009–3020 (2015)

S. Zhao, B. Chen, P. Zhu, J.C. Principe, Fixed budget quantized kernel least-mean-square algorithm. Signal Process. 93(9), 2759–2770 (2013)

Y. Zheng, S. Wang, J. Feng, C.K. Tse, A modified quantized kernel least mean square algorithm for prediction of chaotic time series. Digital Signal Process. 48(C), 130–136 (2016)

Appendix

To prove that the reduced Gaussian kernel function expressed in Eq. (7) is a kernel, the closure properties of kernels are used. The following propositions are given in [4]. Let \(K_1\) and \(K_2\) be kernels over \(X\times X\), where \(X\subseteq R\); let \(\alpha \in R^{+}\); let \(f(\cdot )\) be a real-valued function on the space \(X\); and let \(\varPhi : X \rightarrow R^m\). Then, the following propositions can be established:

Proposition 1

\(K=K_1+K_2\) is a kernel if \(K_1\) and \(K_2\) are kernels.

Proposition 2

\(K=\alpha K_1\) with \(\alpha >0\) is a kernel.

Proposition 3

\(K(x_i,x_j)=f(x_i)f(x_j)\) is a kernel.
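The three closure properties can be sanity-checked numerically. The following is a minimal sketch, not part of the original proof: it builds Gram matrices on random scalar samples and checks that their smallest eigenvalue is nonnegative up to round-off. The helper names `gram` and `min_eig`, the choice of `k1`, `k2` and `f`, and the tolerance `tol` are all illustrative assumptions.

```python
import numpy as np

def gram(kernel, xs):
    """Gram matrix G[i, j] = kernel(xs[i], xs[j]) for scalar samples xs."""
    return kernel(xs[:, None], xs[None, :])

def min_eig(G):
    """Smallest eigenvalue of a symmetric matrix (symmetrized for safety)."""
    return np.linalg.eigvalsh((G + G.T) / 2.0).min()

rng = np.random.default_rng(0)
xs = rng.uniform(-1.0, 1.0, size=20)

# Illustrative choices (assumptions, not taken from the paper).
k1 = lambda x, y: np.exp(-(x - y) ** 2)    # Gaussian kernel
k2 = lambda x, y: x * y                    # linear kernel
f = lambda x: x ** 2 * np.exp(-x ** 2)     # arbitrary real-valued function

G_sum = gram(k1, xs) + gram(k2, xs)        # Proposition 1: K1 + K2
G_scaled = 3.0 * gram(k1, xs)              # Proposition 2: alpha * K1, alpha > 0
G_prod = np.outer(f(xs), f(xs))            # Proposition 3: f(x_i) f(x_j)

tol = 1e-10  # allowance for floating-point round-off
results = {
    "sum": min_eig(G_sum) >= -tol,
    "scaled": min_eig(G_scaled) >= -tol,
    "product": min_eig(G_prod) >= -tol,
}
print(results)
```

A nonnegative spectrum on one sample set does not prove positive semidefiniteness in general; it only fails to contradict the propositions.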

Hypothesis I Each order term of Eq. (7) is a kernel. Based on Proposition 1, Eq. (7) is a kernel if Hypothesis I is satisfied, because a sum of kernels is itself a kernel. That is, the function \(\sum _{p=1}^{P}\frac{(2a)^p}{p!}x_i^p x_j^p \hbox {exp}(-a(x_i^2+x_j^2))\) is a kernel provided that, for every \(p\in \{1,2,\ldots ,P\}\), the pth-order term \(\frac{(2a)^p}{p!}x_i^p x_j^p \hbox {exp}(-a(x_i^2+x_j^2))\) is a kernel. Therefore, we need only show that each pth-order term is a kernel.

Hypothesis II The kernel parameter a is assumed to be larger than 0.

If Hypothesis II is satisfied, then the coefficient \(\frac{(2a)^p}{p!}\) of each order is larger than 0. Based on Proposition 2, Hypothesis I is then satisfied if the function \(x_i^p x_j^p \hbox {exp}(-a(x_i^2+x_j^2))\) is a kernel for every \(p\in \{1,2,\ldots ,P\}\). Clearly, \(x_i^p x_j^p \hbox {exp}(-a(x_i^2+x_j^2))=x_i^p \hbox {exp}(-ax_i^2)\, x_j^p \hbox {exp}(-ax_j^2)=f(x_i)f(x_j)\) holds with \(f(x)=x^p \hbox {exp}(-ax^2)\), so this function is a kernel by Proposition 3. Therefore, the reduced Gaussian kernel function given in Eq. (7) is a kernel.
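The argument above amounts to saying that the reduced kernel is an inner product of finite-dimensional feature vectors whose pth component is \(\sqrt{(2a)^p/p!}\,x^p \hbox {exp}(-ax^2)\). The sketch below illustrates this numerically under stated assumptions: Eq. (7) is not reproduced in this section, so the kernel is taken in the form of the sum written in Hypothesis I; the function names `reduced_features` and `reduced_kernel` and the parameter `p_min` are hypothetical. The default `p_min=1` follows the index range used in this appendix, while `p_min=0` adds the zeroth-order term of the Taylor series of the Gaussian kernel \(\hbox {exp}(-a(x_i-x_j)^2)\).

```python
import math
import numpy as np

def reduced_features(x, a, P, p_min=1):
    """Feature matrix with columns sqrt((2a)^p / p!) * x^p * exp(-a x^2).

    p runs from p_min to P; p_min is an illustrative parameter (assumption).
    """
    x = np.asarray(x, dtype=float)
    orders = np.arange(p_min, P + 1)
    coeff = np.array([math.sqrt((2.0 * a) ** p / math.factorial(p))
                      for p in range(p_min, P + 1)])
    return coeff * x[:, None] ** orders * np.exp(-a * x[:, None] ** 2)

def reduced_kernel(xi, xj, a, P, p_min=1):
    """Sum over p of (2a)^p / p! * xi^p * xj^p * exp(-a (xi^2 + xj^2))."""
    series = sum((2.0 * a) ** p / math.factorial(p) * (xi * xj) ** p
                 for p in range(p_min, P + 1))
    return series * np.exp(-a * (xi ** 2 + xj ** 2))

rng = np.random.default_rng(1)
xs = rng.uniform(-1.0, 1.0, size=15)
a, P = 0.5, 6

# Gram matrix from the kernel and from the explicit feature vectors.
K = reduced_kernel(xs[:, None], xs[None, :], a, P)
Phi = reduced_features(xs, a, P)
matches_features = np.allclose(Phi @ Phi.T, K)
is_psd = np.linalg.eigvalsh((K + K.T) / 2.0).min() >= -1e-10

# With the zeroth-order term and a high order, the series approaches
# the Gaussian kernel exp(-a (xi - xj)^2) on this bounded input range.
K_full = reduced_kernel(xs[:, None], xs[None, :], a, 20, p_min=0)
K_gauss = np.exp(-a * (xs[:, None] - xs[None, :]) ** 2)
close_to_gaussian = np.allclose(K_full, K_gauss)

print(matches_features, is_psd, close_to_gaussian)
```

Because the Gram matrix factors as `Phi @ Phi.T`, it is positive semidefinite by construction, which is the numerical counterpart of combining Propositions 1 to 3. The feature dimension is fixed by `P` and does not grow with the number of samples.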

Cite this article

Liu, Y., Sun, C. & Jiang, S. A Reduced Gaussian Kernel Least-Mean-Square Algorithm for Nonlinear Adaptive Signal Processing. Circuits Syst Signal Process 38, 371–394 (2019). https://doi.org/10.1007/s00034-018-0862-0

DOI: https://doi.org/10.1007/s00034-018-0862-0