Abstract

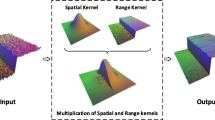

Depth image super-resolution (DISR) is an important yet challenging task. In this paper, we propose a novel edge-guided framework for color-guided DISR that aims to reduce the artifacts introduced by the color guidance image. Because texture regions and smooth regions of a depth image behave differently in view synthesis, we argue that the edge and smooth regions of a depth map should be reconstructed in different ways. Our framework first builds a novel joint trilateral filter with two modes: one for pixels on edges and the other for pixels in smooth regions. Then, in each filtering iteration of the upsampling process, an edge map updated from the current upsampled depth map guides the decision of when to switch the filter mode. With this strategy, the reconstructed high-resolution depth map exhibits less texture copying and contains sharp, smooth edges. Experimental results demonstrate the effectiveness of our approach compared with prior depth map upsampling methods.
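The two-mode idea can be illustrated with a rough Python sketch. It is not the filter defined in the paper: the function names (`edge_map`, `two_mode_filter`, `upsample_edge_guided`), the gradient-threshold edge detector, the Gaussian kernels, and all parameter values are assumptions made for illustration. In particular, using the color term only on edge pixels and dropping it in smooth regions is one plausible way to limit texture copying, not necessarily the authors' choice.

```python
import numpy as np


def edge_map(depth, thresh):
    """Hypothetical edge detector: threshold the depth gradient magnitude."""
    gy, gx = np.gradient(depth)
    return np.hypot(gx, gy) > thresh


def two_mode_filter(depth, guide, radius=2, sigma_s=2.0, sigma_c=0.1,
                    sigma_d=0.1, edge_thresh=0.05):
    """One filtering pass whose kernel depends on the current edge map."""
    depth = np.asarray(depth, dtype=np.float64)
    guide = np.asarray(guide, dtype=np.float64)
    h, w = depth.shape
    edges = edge_map(depth, edge_thresh)  # recomputed from the current depth
    out = np.empty_like(depth)
    for y in range(h):
        for x in range(w):
            y0, y1 = max(0, y - radius), min(h, y + radius + 1)
            x0, x1 = max(0, x - radius), min(w, x + radius + 1)
            d = depth[y0:y1, x0:x1]
            yy, xx = np.mgrid[y0:y1, x0:x1]
            # Spatial and depth-range terms are used in both modes.
            w_s = np.exp(-((yy - y) ** 2 + (xx - x) ** 2) / (2 * sigma_s ** 2))
            w_d = np.exp(-((d - depth[y, x]) ** 2) / (2 * sigma_d ** 2))
            weights = w_s * w_d
            if edges[y, x]:
                # Edge mode (assumed): add the color term from the guide image.
                c = guide[y0:y1, x0:x1]
                weights = weights * np.exp(
                    -((c - guide[y, x]) ** 2) / (2 * sigma_c ** 2))
            # Smooth mode (assumed): color is ignored to avoid texture copying.
            out[y, x] = (weights * d).sum() / weights.sum()
    return out


def upsample_edge_guided(depth_lr, guide_hr, factor, iterations=3, **kwargs):
    """Nearest-neighbor upsampling followed by iterated two-mode filtering."""
    depth = np.repeat(np.repeat(depth_lr, factor, axis=0), factor, axis=1)
    depth = depth.astype(np.float64)
    if depth.shape != np.shape(guide_hr):
        raise ValueError("guide image must match the upsampled depth size")
    for _ in range(iterations):
        # The edge map is refreshed inside each pass from the latest depth.
        depth = two_mode_filter(depth, guide_hr, **kwargs)
    return depth
```

Here `guide_hr` is assumed to be a single-channel (grayscale) guidance image scaled to roughly the same range as the depth values, so that the default kernel widths are meaningful. The per-pixel loops are written for readability rather than speed.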

Availability of data and materials

The data that support the findings of this study are openly available at https://vision.middlebury.edu/stereo/data/scenes2014/, reference number [35].

References

Alexiadis, D.S., Zarpalas, D., Daras, P.: Real-time, realistic full-body 3D reconstruction and texture mapping from multiple Kinects. In: IVMSP 2013, Seoul, pp. 1–4 (2013)

Zhang, Z., Wei, S., Song, Y., Zhang, Y.: Gesture recognition using enhanced depth motion map and static pose map. In: 2017 12th IEEE International Conference on Automatic Face and Gesture Recognition (FG 2017), Washington, DC, pp. 238–244 (2017)

Chiang, M., Feng, J., Zeng, W., Fang, C., Chen, S.: A vision-based human action recognition system for companion robots and human interaction. In: 2018 IEEE 4th International Conference on Computer and Communications (ICCC), Chengdu, China, pp. 1445–1452 (2018)

Kopf, J., Cohen, M., Lischinski, D., Uyttendaele, M.: Joint bilateral upsampling. ACM Trans. Graph. 26(3), 96 (2007)

Diebel, J., Thrun, S.: An application of Markov random fields to range sensing. In: Proceedings of Conference on Neural Information Processing Systems (NIPS), Vancouver, BC, Canada, pp. 291–298 (2005)

Ferstl, D., Reinbacher, C., Ranftl, R., Ruether, M., Bischof, H.: Image guided depth upsampling using anisotropic total generalized variation. In: 2013 IEEE International Conference on Computer Vision, Sydney, NSW, pp. 993–1000 (2013)

Yang, J., Ye, X., Li, K., Hou, C., Wang, Y.: Color-guided depth recovery from RGB-D data using an adaptive autoregressive model. IEEE Trans. Image Process. 23, 3443–3458 (2014)

Liu, X., Zhai, D., Chen, R., Ji, X., Zhao, D., Gao, W.: Depth super-resolution via joint color-guided internal and external regularizations. IEEE Trans. Image Process. 28(4), 1636–1645 (2019)

Yang, Q., Yang, R., Davis, J., Nister, D.: Spatial-depth super resolution for range images. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1–8 (2007)

Riemens, A.K., Gangwal, O.P., Barenbrug, B., Berretty, R.-P.M.: Multistep joint bilateral depth upsampling. In: Proceedings of SPIE 7257, Visual Communications and Image Processing (2009)

Chan, D., Buisman, H., Theobalt, C., Thrun, S.: A noise-aware filter for real-time depth upsampling. In: Proceedings of Workshop Multi-Camera Multi-Modal Sensor Fusion Algorithms Application, pp. 1–12 (2008)

Lo, K., Wang, Y.F., Hua, K.: Edge-preserving depth map upsampling by joint trilateral filter. IEEE Trans. Cybernet. 48(1), 371–384 (2018)

Ren, Y., Liu, J., Yuan, H., Xiao, Y.: Depth up-sampling via pixel-classifying and joint bilateral filtering. KSII Trans. Internet Inf. Syst. 12, 3217–3238 (2018)

Xiang, X., Yan, Z., Nan, C., Xu, W., Zhang, L.: A modified joint trilateral filter based depth map refinement method. In: 2016 12th World Congress on Intelligent Control and Automation (WCICA), Guilin, pp. 1403–1407 (2016)

Lu, X., Guo, Y., Liu, N., et al.: Non-convex joint bilateral guided depth upsampling. Multimed. Tools Appl. 77, 15521–15544 (2018)

Yuan, L., Jin, X., Li, Y., Yuan, C.: Depth map super-resolution via low-resolution depth guided joint trilateral up-sampling. J. Vis. Commun. Image Represent. 46, 280–291 (2017)

Yang, H., Zhang, Z.: Depth image upsampling based on guided filter with low gradient minimization. Vis. Comput. 36, 1411–1422 (2020)

He, K., Sun, J., Tang, X.: Guided image filtering. IEEE Trans. Pattern Anal. Mach. Intell. 35(6), 1397–1409 (2013)

Min, D., Lu, J., Do, M.N.: Depth video enhancement based on weighted mode filtering. IEEE Trans. Image Process. 21(3), 1176–1190 (2012)

Ma, Z., He, K., Wei, Y., Sun, J., Wu, E.: Constant time weighted median filtering for stereo matching and beyond. In: 2013 IEEE International Conference on Computer Vision, Sydney, NSW, pp. 49–56 (2013)

Yang, Q.: Stereo matching using tree filtering. IEEE Trans. Pattern Anal. Mach. Intell. 37(4), 834–846 (2015)

Liu, M., Tuzel, O., Taguchi, Y.: Joint geodesic upsampling of depth images. In: 2013 IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, pp. 169–176 (2013)

Eichhardt, I., Chetverikov, D., Jankó, Z.: Image-guided ToF depth upsampling: a survey. Mach. Vis. Appl. 28, 267 (2017)

Dong, C., Loy, C.C., He, K., Tang, X.: Learning a deep convolutional network for image super-resolution. In: Proceedings of 13th European Conference on Computer Vision (ECCV), pp. 184–199 (2014)

Lim, B., Son, S., Kim, H., Nah, S., Lee, K.M.: Enhanced deep residual networks for single image super-resolution. In: 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, pp. 1132–1140 (2017)

Wen, Y., Sheng, B., Li, P., Lin, W., Feng, D.D.: Deep color guided coarse-to-fine convolutional network cascade for depth image super-resolution. IEEE Trans. Image Process. 28(2), 994–1006 (2019)

Tomasi, C., Manduchi, R.: Bilateral filtering for gray and color images. In: Sixth International Conference on Computer Vision (IEEE Cat. No.98CH36271), Bombay, India, pp. 839–846 (1998)

Eisemann, E., Durand, F.: Flash photography enhancement via intrinsic relighting. ACM Trans. Graph. 23(3), 673–678 (2004)

Petschnigg, G., et al.: Digital photography with flash and no-flash image pairs. ACM Trans. Graph. (TOG) 23, 664–672 (2004)

Ding, K., Chen, W., Wu, X.: Optimum inpainting for depth map based on L0 total variation. Vis. Comput. 30, 1311–1320 (2014)

Ferstl, D., Rüther, M., Bischof, H.: Variational depth superresolution using example-based edge representations. In: IEEE International Conference on Computer Vision (ICCV), Santiago, pp. 513–521 (2015)

Mac Aodha, O., Campbell, N.D.F., Nair, A., Brostow, G.J.: Patch based synthesis for single depth image super-resolution. In: Proceedings of 12th European Conference on Computer Vision (ECCV), pp. 71–84 (2012)

Xie, J., Feris, R.S., Sun, M.: Edge-guided single depth image super resolution. IEEE Trans. Image Process. 25(1), 428–438 (2016)

Zhou, D., Wang, R., Yang, X., Zhang, Q., Wei, X.: Depth image superresolution reconstruction based on a modified joint trilateral filter. R. Soc. Open Sci. 6, 181074 (2019)

Scharstein, D., et al.: High-resolution stereo datasets with subpixel accurate ground truth. In: Proceedings of German Conference on Pattern Recognition, pp. 31–42 (2014)

Gilboa, G., Sochen, N., Zeevi, Y.Y.: Image enhancement and denoising by complex diffusion processes. IEEE Trans. Pattern Anal. Mach. Intell. 26(8), 1020–1036 (2004)

Funding

This study was funded by the National Natural Science Foundation of China under Grant No. 41830110.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Yang, S., Cao, N., Guo, B. et al. Depth map super-resolution based on edge-guided joint trilateral upsampling. Vis Comput 38, 883–895 (2022). https://doi.org/10.1007/s00371-021-02057-x

DOI: https://doi.org/10.1007/s00371-021-02057-x