Abstract

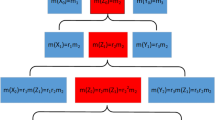

This paper proposes an information volume of the mass function based on extropy. Although the information volume of a probability distribution can be calculated with Shannon entropy, how to calculate the information volume of a mass function is still being explored. The concept of extropy was recently proposed by Lad et al. as the complementary dual of entropy, and the information volume proposed here is built on it. For a basic probability assignment (BPA), if all focal elements are single elements of the frame of discernment (FOD), the proposed information volume equals the corresponding extropy; otherwise, it is greater than the corresponding extropy. Moreover, for a fixed cardinality of the FOD, the total uncertainty case and the mass function with maximum extropy have the same information volume. More precisely, the latter distribution can be regarded as the former after its BPA has been decomposed once. Finally, experiments show that the maximum information volume increases with the cardinality of the FOD and has the same limit value, \(\log_2 e\), as the maximum extropy. Numerical examples are given to illustrate the properties of the information volume.
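The limit value \(\log_2 e\) mentioned above can be checked numerically for the extropy of Lad et al., \(J(p) = -\sum_i (1 - p_i)\log_2(1 - p_i)\), which is maximized by the uniform distribution. The following is a minimal sketch for ordinary probability distributions only; it does not implement the information volume of a mass function, whose definition is not given in this section, and the function names `extropy` and `max_extropy` are illustrative choices rather than names from the paper.

```python
import math


def extropy(p):
    """Extropy of a discrete distribution: J(p) = -sum((1 - p_i) * log2(1 - p_i))."""
    if abs(sum(p) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # A term with p_i == 1 contributes 0 (the limit of x * log2(x) as x -> 0),
    # so it is skipped to avoid evaluating log2(0).
    return -sum((1 - pi) * math.log2(1 - pi) for pi in p if pi < 1)


def max_extropy(n):
    """Extropy of the uniform distribution on n outcomes, i.e. (n - 1) * log2(n / (n - 1))."""
    return extropy([1.0 / n] * n)


# The maximum extropy grows with n and approaches log2(e) from below.
for n in (2, 4, 16, 256, 4096):
    print(n, round(max_extropy(n), 6))
print("log2(e) =", round(math.log2(math.e), 6))
```

When every focal element is a single element, the abstract states that the information volume coincides with this extropy, so the sketch covers only that special case.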

Availability of data and materials

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.

Code Availability Statement

Not applicable.

References

Al-Labadi L, Berry S (2020) Bayesian estimation of extropy and goodness of fit tests. J Appl Stat. https://doi.org/10.1080/02664763.2020.1812545

Almarashi AM, Algarni A, Abdel-Khalek S, Abd-Elmougod GA, Raqab MZ (2020) Quantum extropy and statistical properties of the radiation field for photonic binomial and even binomial distributions. J Russ Laser Res 41(4):334–343

Becerra A, Ismael de la Rosa J, Gonzalez E, David A, Iracemi Escalante N (2018) Training deep neural networks with non-uniform frame-level cost function for automatic speech recognition. Multimed Tools Appl 77(20):27231–27267

Becerra A, Ismael de la Rosa J, Gonzalez E, Pedroza AD, Iracemi Escalante N, Santos E (2020) A comparative case study of neural network training by using frame-level cost functions for automatic speech recognition purposes in Spanish. Multimed Tools Appl 79(27–28):19669–19715

Bortot S, Pereira RAM, Stamatopoulou A (2020) Shapley and super Shapley aggregation emerging from consensus dynamics in the multicriteria Choquet framework. Decis Econ Finance 43(2):583–611

Buono F, Longobardi M (2020) A dual measure of uncertainty: the Deng extropy. Entropy 22(5):582

Cao Z, Ding W, Wang Y, Hussain FK, Al-Jumaily A, Lin CT (2020) Effects of repetitive SSVEPS on EEG complexity using multiscale inherent fuzzy entropy. Neurocomputing 389:198–206

Chen L, Deng Y, Cheong KH (2021) Probability transformation of mass function: a weighted network method based on the ordered visibility graph. Eng Appl Artif Intell 105:104438. https://doi.org/10.1016/j.engappai.2021.104438

Dempster AP (1967) Upper and lower probabilities induced by a multivalued mapping. Ann Math Stat 38(2):325–339

Deng Y (2020a) Information volume of mass function. Int J Comput Commun Control 15(6):3983

Deng Y (2020b) Uncertainty measure in evidence theory. Sci China Inf Sci 63(11):210201

Deng J, Deng Y (2021) Information volume of fuzzy membership function. Int J Comput Commun Control. https://doi.org/10.15837/ijccc.2021.1.4106

Deng X, Jiang W (2019) A total uncertainty measure for D numbers based on belief intervals. Int J Intell Syst 34(12):3302–3316

Gao X, Su X, Qian H, Pan X (2021) Dependence assessment in human reliability analysis under uncertain and dynamic situations. Nuclear Eng Technol (accepted)

Gilio A, Sanfilippo G (2019) Generalized logical operations among conditional events. Appl Intell 49(1):79–102

Hohle U (1987) A general theory of fuzzy plausibility measures. J Math Anal Appl 127(2):346–364

James RG, Crutchfield JP (2017) Multivariate dependence beyond Shannon information. Entropy 19(10):531

Jahanshahi SMA, Zarei H, Khammar AH (2020) On cumulative residual extropy. Probab Eng Inf Sci 34(4):605–625

Jose J, Sathar EIA (2019) Residual extropy of k-record values. Stat Probab Lett 146:1–6

Jousselme A-L, Liu C, Grenier D, Bosse E (2005) Measuring ambiguity in the evidence theory. IEEE Trans Syst Man Cybern A Syst Hum 36(5):890–903

Kamari O, Buono F (2020) On extropy of past lifetime distribution. Ricerche di Matematica. https://doi.org/10.1007/s11587-020-00488-7

Krishnan AS, Sunoj SM, Nair N (2020) Some reliability properties of extropy for residual and past lifetime random variables. J Korean Stat Soc 49(2):457–474

Lad F, Sanfilippo G, Agrò G (2015) Extropy: complementary dual of entropy. Stat Sci 30(1):40–58

Lad F, Sanfilippo G, Agrò G (2018) The duality of entropy/extropy, and completion of the Kullback information complex. Entropy 20(8). https://doi.org/10.3390/e20080593

Maya R, Irshad MR (2019) Kernel estimation of residual extropy function under alpha-mixing dependence condition. S Afr Stat J 53(2):65–72

Noughabi HA, Jarrahiferiz J (2019) On the estimation of extropy. J Nonparametr Stat 31(1):88–99

Noughabi HA, Jarrahiferiz J (2020) Extropy of order statistics applied to testing symmetry. Commun Stat Simul Comput 1:1–11. https://doi.org/10.1080/03610918.2020.1714660

Qiu G (2017) The extropy of order statistics and record values. Stat Probab Lett 120:52–60

Qiu G, Jia K (2018) The residual extropy of order statistics. Stat Probab Lett 133:15–22

Raqab MZ, Qiu G (2019) On extropy properties of ranked set sampling. Statistics 53(1–3):210–226

Rényi A (1961) On measures of entropy and information. In: Proceedings IV Berkeley symposium on mathematical statistics and probability, vol 1, pp 547–561

Sanfilippo G, Gilio A, Over DE, Pfeifer N (2020) Probabilities of conditionals and previsions of iterated conditionals. Int J Approx Reason 121:150–173

Sathar EIA, Nair DR (2021) On dynamic survival extropy. Commun Stat Theory Methods 50(6):1295–1313

Shannon CE (1948) Mathematical theory of communication. Bell Syst Tech J 27(3):379–423

Singh S, Lalotra S, Sharma S (2019) Dual concepts in fuzzy theory: entropy and knowledge measure. Int J Intell Syst 34(5):1034–1059

Song Y, Deng Y (2021) Entropic explanation of power set. Int J Comput Commun Control 16(4):4413

Srivastava A, Kaur L (2019) Uncertainty and negation-information theoretic applications. Int J Intell Syst 34(6):1248–1260

Tahmasebi S, Toomaj A (2020) On negative cumulative extropy with applications. Commun Stat Theory Methods 5:1–23. https://doi.org/10.1080/03610926.2020.1831541

Tsallis C (1988) Possible generalization of Boltzmann–Gibbs statistics. J Stat Phys 52(1–2):479–487

Weiss CH (2019) Measures of dispersion and serial dependence in categorical time series. Econometrics 7(2):17

Xiao F (2020) On the maximum entropy negation of a complex-valued distribution. IEEE Trans Fuzzy Syst. https://doi.org/10.1109/TFUZZ.2020.3016723

Xiao F (2021a) CEQD: a complex mass function to predict interference effects. IEEE Trans Cybern. https://doi.org/10.1109/TCYB.2020.3040770

Xiao F (2021b) CaFtR: a fuzzy complex event processing method. Int J Fuzzy Syst. https://doi.org/10.1007/s40815-021-01118-6

Xiao F (2021c) GIQ: a generalized intelligent quality-based approach for fusing multi-source information. IEEE Trans Fuzzy Syst 29(7):2018–2031

Xiong L, Su X, Qian H (2021) Conflicting evidence combination from the perspective of networks. Inf Sci 580:408–418. https://doi.org/10.1016/j.ins.2021.08.088

Xue Y, Deng Y (2021a) Tsallis eXtropy. Commun Stat Theory Methods. https://doi.org/10.1080/03610926.2021.1921804

Xue Y, Deng Y (2021b) Interval-valued belief entropies for Dempster–Shafer structures. Soft Comput 25:8063–8071

Yager RR (1983) Entropy and specificity in a mathematical theory of evidence. Int J Gen Syst 9(4):249–260

Yang J, Xia W, Hu T (2019) Bounds on extropy with variational distance constraint. Probab Eng Inf Sci 33(2):186–204

Zadeh LA (1965) Fuzzy sets. Inf Control 8(3):338–353

Funding

This research is supported by the National Natural Science Foundation of China (No. 62003280). The authors greatly appreciate the reviewers’ suggestions and the editor’s encouragement.

Author information

Contributions

All authors contributed to the study conception and design. Material preparation, data collection and analysis were performed by FX. The first draft of the manuscript was written by JL and all authors commented on previous versions of the manuscript. All authors read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that there is no conflict of interest.

Ethics approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Liu, J., Xiao, F. Information volume of mass function based on extropy. Soft Comput 26, 2409–2418 (2022). https://doi.org/10.1007/s00500-021-06410-z

DOI: https://doi.org/10.1007/s00500-021-06410-z