Summary

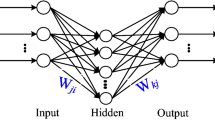

As a generalization of the multi-layer perceptron (MLP), the circular back-propagation neural network (CBP) possesses better adaptability. This paper presents an improved version of the CBP, the ICBP. Despite having fewer adjustable weights, the ICBP adapts better than the CBP, which accords with Occam’s razor principle for model selection. For time series applications, to account for both structural changes and correlations in the series itself, we introduce the principle of discounted least squares (DLS) into CBP and ICBP, respectively, and further investigate their predictive capacity. Introducing DLS improves the predictive performance of both networks on a benchmark time series data set. Finally, a comparison of the experimental results shows that ICBP with DLS (DLS-ICBP) predicts better than DLS-CBP.
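The two ingredients named in the summary can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes the usual CBP construction, in which one extra input equal to the sum of the squared inputs is appended to an MLP, and a sigmoidal discount schedule for DLS that weights recent observations more heavily. The function names `cbp_augment`, `dls_weights`, and `dls_loss`, and the discount parameter `a`, are hypothetical choices made for this illustration. The ICBP-specific treatment of the extra node's weights is not reproduced here.

```python
import math


def cbp_augment(x):
    """Append the sum of squared inputs as one extra input (the CBP idea)."""
    return list(x) + [sum(v * v for v in x)]


def dls_weights(n, a=3.0):
    """Discount weights for samples t = 1..n, oldest first.

    Assumed schedule: w(t) = 1 / (1 + exp(a - b*t)) with b = 2a/n,
    so the weights increase monotonically toward the most recent sample.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    b = 2.0 * a / n
    return [1.0 / (1.0 + math.exp(a - b * t)) for t in range(1, n + 1)]


def dls_loss(targets, preds, a=3.0):
    """Discounted least squares cost: squared errors scaled by recency."""
    n = len(targets)
    w = dls_weights(n, a)
    return sum(wi * (y - p) ** 2 for wi, y, p in zip(w, targets, preds)) / (2.0 * n)
```

With this weighting, an error of the same size costs more on a recent sample than on an old one, which is how a DLS-trained network tracks structural change in a series. Setting all weights to 1 would recover the ordinary least squares cost.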

Acknowledgements

We are very grateful for the reviewers’ constructive comments which have improved the presentation of this paper.

Additional information

Supported by the Natural Science Foundation (grant No. BK2002092) and the “QingLan” Project Foundation of Jiangsu Province, and by the Returnee Foundation of China.

About this article

Cite this article

Chen, S., Dai, Q. Discounted least squares-improved circular back-propogation neural networks with applications in time series prediction. Neural Comput & Applic 14, 250–255 (2005). https://doi.org/10.1007/s00521-004-0461-9

DOI: https://doi.org/10.1007/s00521-004-0461-9