Abstract

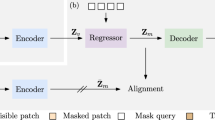

The original generative adversarial network (GAN) model has three problems: (1) the generator is not robust to the input random noise; (2) the discriminating ability of the discriminator gradually declines in the later stages of training; and (3) the theoretical Nash equilibrium is difficult to reach during training. To address these problems, this paper proposes a GAN model with a denoising penalty and sample augmentation. First, a denoising constraint is designed as a penalty term for the generator: it minimizes the Frobenius norm (F-norm) between the input noise and the encoding of the image generated from the corresponding perturbed noise, which forces the generator to learn more robust, invariant characteristics. Second, we put forward a sample augmentation discriminator, trained on mixtures of generated and real images, to improve the ability of the discriminator. Third, to approach the theoretical optimum as closely as possible, our model combines the denoising penalty with the sample augmentation discriminator. The denoising penalty and the sample augmentation discriminator are then applied to five different GAN models whose loss functions include the original GAN, hinge, and least squares losses. Finally, experimental results on the LSUN and CelebA datasets show that the proposed method helps the baseline models improve the quality of generated images.
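The two ingredients named in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract does not specify the encoder, the form of the noise perturbation, or the mixing rule, so the linear toy `generator` and `encoder`, the Gaussian perturbation scale `sigma`, and the pixel-wise convex combination with coefficient `lam` are all assumptions made here for illustration.

```python
import numpy as np


def denoising_penalty(z, generator, encoder, sigma=0.1, rng=None):
    """Squared Frobenius norm between z and the encoding of G(z + noise).

    Assumption: the perturbation is additive Gaussian noise with scale sigma.
    """
    rng = np.random.default_rng() if rng is None else rng
    z_perturbed = z + sigma * rng.standard_normal(z.shape)
    z_recovered = encoder(generator(z_perturbed))
    return np.linalg.norm(z - z_recovered, ord="fro") ** 2


def mix_samples(real, fake, lam):
    """Assumed mixing rule: pixel-wise convex combination of real and fake."""
    return lam * real + (1.0 - lam) * fake


# Toy setup (hypothetical): a linear "generator" mapping 8-d noise to a
# 16-d "image", and an "encoder" that inverts it via the pseudo-inverse.
rng = np.random.default_rng(0)
W = rng.standard_normal((8, 16))
W_pinv = np.linalg.pinv(W)


def generator(z):
    return z @ W


def encoder(x):
    return x @ W_pinv


z = rng.standard_normal((4, 8))  # batch of 4 noise vectors

# Without perturbation the encoder recovers z, so the penalty is ~0.
penalty_clean = denoising_penalty(z, generator, encoder, sigma=0.0, rng=rng)
# With perturbation the penalty measures how far the encoding drifts from z.
penalty_noisy = denoising_penalty(z, generator, encoder, sigma=0.5, rng=rng)

# A mixed training sample for the discriminator under the assumed rule.
mixed = mix_samples(np.ones((2, 2)), np.zeros((2, 2)), lam=0.25)
```

In an actual GAN the penalty would be added to the generator loss with some weight and minimized jointly with the adversarial term; the weight and the labels assigned to mixed samples are not given in the abstract and are left out of this sketch.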

Acknowledgements

This work was supported in part by the National Key R&D Program of China (2018YFE0203903), National Natural Science Foundation of China (61773093), Important Science and Technology Innovation Projects in Chengdu (2018-YF08-00039-GX) and Research Programs of Sichuan Science and Technology Department (17ZDYF3184).

Ethics declarations

Conflict of interest

We declare that we have no financial or personal relationships with other people or organizations that could inappropriately influence our work, and that there is no professional or other personal interest of any nature or kind in any product, service, and/or company that could be construed as influencing the position presented in, or the review of, the manuscript entitled "Generative Adversarial Networks with Denoising Penalty and Sample Augmentation".

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Gan, Y., Liu, K., Ye, M. et al. Generative adversarial networks with denoising penalty and sample augmentation. Neural Comput & Applic 32, 9995–10005 (2020). https://doi.org/10.1007/s00521-019-04526-w

DOI: https://doi.org/10.1007/s00521-019-04526-w