Abstract

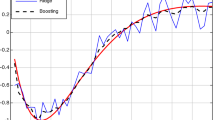

Additive modeling techniques have been used successfully in many data mining and machine learning applications. Despite this success, boosting algorithms, which are simple yet powerful additive modeling methods, still have open problems that require closer investigation. The efficiency of any additive modeling technique depends significantly on the choice of weak learners and the form of the loss function. In this paper, we propose a novel multi-resolution approach for choosing the weak learners during additive modeling. Our method applies insights from multi-resolution analysis and chooses the optimal learners at multiple resolutions during different iterations of the boosting algorithm. We demonstrate the advantages of this framework on both classification and regression problems, with results on synthetic datasets and on real-world datasets taken from the UCI machine learning repository. Although demonstrated in the context of boosting algorithms, the framework can be readily incorporated into general additive modeling techniques. We also discuss the similarities and differences between the proposed algorithm and widely used methods such as radial basis function networks.
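To make the idea of choosing weak learners at multiple resolutions concrete, the following is a minimal illustrative sketch and not the algorithm proposed in the paper. It assumes one-dimensional regression with squared-error loss, Gaussian bumps as weak learners, and a greedy rule that picks, in each boosting round, the (center, width) pair that most reduces the residual error. The function names, the candidate `widths`, and the `shrinkage` parameter are all hypothetical choices made for this illustration.

```python
import math


def gaussian(x, center, width):
    """Gaussian bump used as a weak learner; `width` sets its resolution."""
    return math.exp(-((x - center) ** 2) / (2.0 * width ** 2))


def fit_multires_boost(xs, ys, widths=(2.0, 1.0, 0.5, 0.25),
                       rounds=30, shrinkage=0.5):
    """Greedy L2 boosting over Gaussian learners at several widths.

    Assumption for illustration only: every round searches all candidate
    widths and all training points as centers, then keeps the single
    learner that gives the largest drop in squared residual error.
    """
    residuals = list(ys)
    model = []  # list of (coefficient, center, width)
    for _ in range(rounds):
        best = None  # (gain, coefficient, center, width)
        for width in widths:
            for center in xs:
                g = [gaussian(x, center, width) for x in xs]
                norm = sum(v * v for v in g)  # >= 1 since center is in xs
                corr = sum(r * v for r, v in zip(residuals, g))
                gain = corr * corr / norm  # error drop of the exact LS fit
                if best is None or gain > best[0]:
                    best = (gain, corr / norm, center, width)
        _, coef, center, width = best
        coef *= shrinkage  # damp each step, as is common in boosting
        model.append((coef, center, width))
        residuals = [r - coef * gaussian(x, center, width)
                     for r, x in zip(residuals, xs)]
    return model


def predict(model, x):
    """Additive prediction: sum of all selected weak learners."""
    return sum(c * gaussian(x, m, w) for c, m, w in model)


def mse(model, xs, ys):
    """Mean squared error of the additive model on (xs, ys)."""
    return sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


if __name__ == "__main__":
    # Synthetic target mixing a slow and a fast oscillation.
    xs = [i / 10.0 for i in range(60)]
    ys = [math.sin(x) + 0.5 * math.sin(5.0 * x) for x in xs]
    model = fit_multires_boost(xs, ys)
    print("training MSE:", mse(model, xs, ys))
    print("widths chosen per round:", [w for _, _, w in model])
```

Printing the widths chosen per round shows which resolutions the greedy rule selects on a given dataset. A different selection schedule, for example moving from coarse to fine widths across rounds, would fit the same additive form.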

About this article

Cite this article

Reddy, C.K., Park, JH. Multi-resolution boosting for classification and regression problems. Knowl Inf Syst 29, 435–456 (2011). https://doi.org/10.1007/s10115-010-0358-0

DOI: https://doi.org/10.1007/s10115-010-0358-0