Abstract

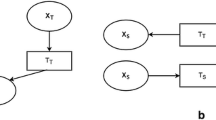

Transductive transfer learning is a special type of transfer learning problem in which abundant labeled examples are available in the source domain and only unlabeled examples are available in the target domain. It arises naturally in applications such as spam filtering and microblog mining. In this paper, we propose a general framework that solves the problem by mapping the input features of both the source and target domains into a shared latent space while simultaneously minimizing the feature reconstruction loss and the prediction loss. We develop one specific instance of the framework, the latent large-margin transductive transfer learning algorithm, and analyze the theoretical bound on its classification loss via Rademacher complexity. We also provide a unified view of several popular transfer learning algorithms under our framework. Experimental results on one synthetic dataset and three application datasets demonstrate the advantages of the proposed algorithm over other state-of-the-art algorithms.
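
To make the idea of a joint objective concrete, the following is a minimal sketch and not the algorithm developed in the paper. It assumes a linear map into the latent space with tied encoder and decoder weights, a squared-error reconstruction loss over both domains, and a hinge (large-margin) prediction loss over the labeled source examples only. The function name `joint_objective`, the arguments `B`, `w`, and `lam`, and these specific loss choices are all illustrative assumptions.

```python
import numpy as np

def joint_objective(X_src, y_src, X_tgt, B, w, lam=1.0):
    """Illustrative joint loss: reconstruction on both domains + hinge on source.

    X_src : (n_s, d) labeled source features
    y_src : (n_s,)   source labels in {-1, +1}
    X_tgt : (n_t, d) unlabeled target features
    B     : (d, k)   hypothetical linear map into the shared latent space
    w     : (k,)     hypothetical linear classifier in the latent space
    lam   : weight of the prediction loss relative to reconstruction
    """
    # Encode source and target examples with the same map (shared latent space).
    X_all = np.vstack([X_src, X_tgt])
    Z_all = X_all @ B

    # Reconstruction loss: mean squared error after decoding with B^T (tied weights).
    recon = np.mean(np.sum((X_all - Z_all @ B.T) ** 2, axis=1))

    # Prediction loss: mean hinge loss, computed on labeled source examples only.
    margins = y_src * ((X_src @ B) @ w)
    hinge = np.mean(np.maximum(0.0, 1.0 - margins))

    return recon + lam * hinge
```

In this sketch the target domain influences the learned map only through the reconstruction term, since no target labels are available; the weight `lam` trades off the two losses.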

Acknowledgments

The work in this paper is sponsored by the US Defense Advanced Research Projects Agency (DARPA) under the Anomaly Detection at Multiple Scales (ADAMS) program, Agreement Number W911NF-11-C-0200. The views and conclusions contained in this document are those of the author(s) and should not be interpreted as representing the official policies, either expressed or implied, of the US Defense Advanced Research Projects Agency or the US Government. The US Government is authorized to reproduce and distribute reprints for Government purposes notwithstanding any copyright notation hereon.

About this article

Cite this article

Bahadori, M.T., Liu, Y. & Zhang, D. A general framework for scalable transductive transfer learning. Knowl Inf Syst 38, 61–83 (2014). https://doi.org/10.1007/s10115-013-0647-5

DOI: https://doi.org/10.1007/s10115-013-0647-5