Abstract

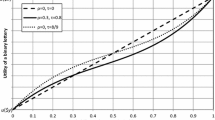

Risk attitude reflects an intelligent agent’s preferences among uncertain rewards. Cost is the amount of a resource consumed; wealth is the total amount of that resource the agent holds. In a risk-aware system whose risk attitude depends on wealth, utility is a function of wealth. When the utility function is one-switch, reasoning with cost-based utility functions alone does not yield the optimal preference at every decision step unless the initial wealth is zero. A bridge algorithm between cost and wealth solves this problem. We provide a framework for such a bridge algorithm in risk-aware Markov decision processes, and we present an example based on the block-world problem to explain the algorithm.

An effective bridge algorithm helps planners make reasonable decisions according to their risk attitudes without changing the structure of the Markov domain. A bridge between cost and wealth lets us handle planning domains with the powerful backward induction approach instead of decision trees. This could have significant theoretical and practical impact on artificial intelligence, economics, health care, and other areas concerned with risk.
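The claim that cost-based reasoning matches wealth-based reasoning only when the initial wealth is zero can be illustrated with a small numerical sketch. It is not the paper's bridge algorithm. It assumes a linear-plus-exponential utility, U(w) = w - D·γ^w, which is one commonly used one-switch form, and the parameters `GAMMA` and `D`, the two actions, and their costs are all hypothetical values chosen for illustration.

```python
# Illustrative sketch only: the utility form, parameters, and actions below
# are assumptions made for this example, not values taken from the article.
GAMMA = 0.9  # hypothetical decay of the exponential term, 0 < GAMMA < 1
D = 1.0      # hypothetical weight of the exponential term, D > 0


def utility(wealth):
    """Assumed one-switch utility: U(w) = w - D * GAMMA**w."""
    return wealth - D * GAMMA ** wealth


# Each action is a lottery over costs: a list of (probability, cost) pairs.
ACTIONS = {
    "safe": [(1.0, 10.0)],                # certain cost of 10
    "risky": [(0.5, 0.0), (0.5, 19.0)],   # expected cost 9.5, higher variance
}


def expected_utility(lottery, initial_wealth):
    """Expected utility of final wealth = initial wealth minus cost."""
    return sum(p * utility(initial_wealth - cost) for p, cost in lottery)


def best_action(initial_wealth):
    """Name of the action that maximizes expected utility of final wealth."""
    return max(
        ACTIONS,
        key=lambda name: expected_utility(ACTIONS[name], initial_wealth),
    )


if __name__ == "__main__":
    # Initial wealth 0 corresponds to reasoning on cost alone.
    for w0 in (0.0, 20.0):
        print(f"initial wealth {w0:5.1f} -> preferred action: {best_action(w0)}")
```

Under these assumed numbers, the agent prefers the safe action at an initial wealth of 0 and the risky action at an initial wealth of 20. A planner that evaluates costs as if wealth were zero would therefore pick the wrong action for the wealthier agent.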

Cite this article

Lin, Y., Makedon, F. & Ding, C. Towards a bridge between cost and wealth in risk-aware planning. Appl Intell 36, 605–616 (2012). https://doi.org/10.1007/s10489-011-0279-y

DOI: https://doi.org/10.1007/s10489-011-0279-y