Abstract

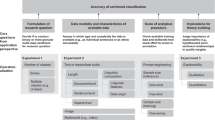

Aspect term extraction (ATE), a fundamental subtask of aspect-based sentiment analysis, aims to extract explicit aspect terms from reviewers’ expressed opinions. However, the distribution of samples containing different numbers of aspect terms is long-tailed. Because long-tailed samples are scarce and each sample may contain multiple variable-length aspect terms, most ATE models have difficulty capturing features and converge to an inferior state. Popular data augmentation techniques for this problem, such as synonym replacement and back translation, do not produce substantial improvements when used with pretrained language models. In this paper, we present a novel span-level aspect term extraction (SATE) framework with three main components: a simple and effective tag data augmentation (TaDA) module, an original pretrained language model, and an optimized heuristic decoding algorithm module. TaDA is based on a span-level tagging scheme and generates new pseudo training samples for long-tailed multiaspect samples. The pretrained model serves as a general feature extractor and yields contextual token representations. The decoding algorithm then uses an adjustment factor to extract the variable-length aspect terms simultaneously. These techniques are integrated into the stacked SATE framework to pinpoint the aspect terms. In experiments on SemEval benchmark datasets from multiple domains, the framework achieves F1-scores of 86.92% and 86.28% for laptops and restaurants, respectively, outperforming well-known baseline models.
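To make the idea of extracting several variable-length terms at once more concrete, the following is a minimal sketch of a generic span-level decoder. It is not the paper's algorithm: the abstract does not define the adjustment factor, so a hypothetical `length_penalty` stands in for it, and the function name `decode_spans`, the `threshold` and `max_len` parameters, and the toy scores are all assumptions made for illustration.

```python
from typing import List, Tuple


def decode_spans(
    start_scores: List[float],
    end_scores: List[float],
    max_len: int = 4,
    threshold: float = 1.0,
    length_penalty: float = 0.1,  # hypothetical stand-in for the adjustment factor
) -> List[Tuple[int, int]]:
    """Greedy, non-overlapping span decoding (illustrative only)."""
    n = len(start_scores)
    candidates = []
    for i in range(n):
        for j in range(i, min(i + max_len, n)):
            # Score a span by its boundary scores, slightly penalizing length.
            score = start_scores[i] + end_scores[j] - length_penalty * (j - i)
            if score >= threshold:
                candidates.append((score, i, j))

    # Consider the highest-scoring candidates first.
    candidates.sort(key=lambda c: c[0], reverse=True)

    chosen: List[Tuple[int, int]] = []
    for _, i, j in candidates:
        # Keep a span only if it overlaps none of the spans already chosen.
        if all(j < s or i > e for s, e in chosen):
            chosen.append((i, j))
    return sorted(chosen)


# Toy per-token boundary scores (invented values, not model output).
tokens = ["The", "battery", "life", "and", "screen", "are", "great"]
start = [0.0, 0.9, 0.1, 0.0, 0.8, 0.0, 0.0]
end = [0.0, 0.2, 0.9, 0.0, 0.8, 0.0, 0.0]

spans = decode_spans(start, end)
terms = [" ".join(tokens[i:j + 1]) for i, j in spans]
print(terms)
```

In this toy input the two-token span "battery life" and the one-token span "screen" are returned together, which is the behavior a span-level scheme is meant to support for multiaspect sentences. A real system would take the boundary scores from the pretrained model's token representations.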

Cite this article

Liu, B., Lin, T. & Li, M. Improving aspect term extraction via span-level tag data augmentation. Appl Intell 53, 3207–3220 (2023). https://doi.org/10.1007/s10489-022-03558-5

DOI: https://doi.org/10.1007/s10489-022-03558-5