Abstract

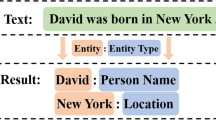

Entity and relation extraction is an essential task in knowledge acquisition and representation. Because novel relations continue to emerge in practice, extracting novel relational triplets is urgently needed but understudied. Existing work relies heavily on large-scale, human-constructed external knowledge, rather than on the corpus itself, to predict novel relations, which requires costly human effort. In this paper, we propose a semantic-consistent learning method (SCL) for one-shot joint entity and relation extraction, which bootstraps novel relational triplets from only a single instance of each class. The key is to take full advantage of semantic consistency. Specifically, we estimate semantic consistency at two levels: between relations and triplets, using entropy to refine the candidate triplets generated by a joint model; and between triplets and plain text, using template-based contrastive learning to rank the candidate triplets. Experimental results on benchmarks demonstrate that our method generalizes to novel relational triplets with strong robustness and achieves promising performance, with micro F1-scores of 45.12% and 67.02% on two common datasets, respectively.
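The abstract does not spell out how the entropy-based refinement is computed, so the following is only a minimal sketch under stated assumptions: each candidate triplet is assumed to carry a probability distribution over relation classes, and a candidate is kept when the Shannon entropy of that distribution falls below a threshold. The names `entropy`, `refine_candidates`, `relation_probs`, and `max_entropy`, as well as the toy data, are hypothetical and are not taken from the paper.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def refine_candidates(candidates, max_entropy):
    """Keep candidates whose relation distribution has entropy <= max_entropy.

    Assumption (not from the paper): low entropy is read as a sign that the
    candidate triplet is consistent with a single relation.
    """
    return [c for c in candidates if entropy(c["relation_probs"]) <= max_entropy]


# Hypothetical candidates with made-up relation probabilities.
candidates = [
    {"triplet": ("Paris", "capital_of", "France"), "relation_probs": [0.9, 0.05, 0.05]},
    {"triplet": ("Paris", "capital_of", "Seine"), "relation_probs": [0.4, 0.3, 0.3]},
]

kept = refine_candidates(candidates, max_entropy=0.6)
for c in kept:
    print(c["triplet"], round(entropy(c["relation_probs"]), 3))
```

With these toy numbers the peaked distribution passes the filter and the near-uniform one is discarded. The threshold value is arbitrary here and would need to be tuned on held-out data in any real setting.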

Cite this article

Li, J., Xu, Y., Lin, H. et al. Semantic-consistent learning for one-shot joint entity and relation extraction. Appl Intell 53, 5963–5976 (2023). https://doi.org/10.1007/s10489-022-03812-w

DOI: https://doi.org/10.1007/s10489-022-03812-w