Abstract

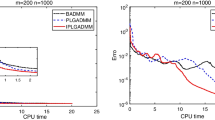

We propose two diagonal BFGS-type updates. The first is the diagonal part of the ordinary BFGS update applied to a diagonal matrix; the second is its inverse counterpart. Like the ordinary BFGS update, both diagonal updates preserve positive definiteness. The resulting diagonal BFGS methods can be regarded as extensions of the well-known Barzilai-Borwein method. Under appropriate conditions, we prove that both diagonal BFGS methods are globally convergent when applied to minimizing a convex or non-convex function. Moreover, the diagonal quasi-Newton method with the inverse diagonal BFGS update can even be superlinearly convergent if the function to be minimized is uniformly convex and completely separable. We also apply the proposed diagonal BFGS updates to the limited memory BFGS (L-BFGS) method, using the diagonal BFGS matrix as the initial matrix. Our numerical results show the efficiency of the L-BFGS methods with diagonal BFGS updates.
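To make the abstract's description concrete, the following is a minimal Python sketch of what "the diagonal part of the BFGS update on a diagonal matrix" could look like, together with an L-BFGS two-loop recursion that accepts a diagonal initial matrix. The formulas are derived here from the textbook BFGS and inverse BFGS updates, not copied from the paper's body, so the authors' exact updates (including any safeguards) may differ. The function names `diag_bfgs_direct`, `diag_bfgs_inverse`, and `lbfgs_direction`, and the rule of skipping the update when the curvature condition fails, are assumptions made for illustration only.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def diag_bfgs_direct(b: Vector, s: Vector, y: Vector) -> List[float]:
    """Diagonal of the textbook BFGS update applied to B = diag(b).

    Assumed formula: b_i - (b_i s_i)^2 / (s^T B s) + y_i^2 / (y^T s).
    """
    ys = dot(y, s)
    if ys <= 0.0:
        return list(b)  # assumption: skip the update if y^T s <= 0
    sBs = sum(bi * si * si for bi, si in zip(b, s))
    return [bi - (bi * si) ** 2 / sBs + yi * yi / ys
            for bi, si, yi in zip(b, s, y)]


def diag_bfgs_inverse(h: Vector, s: Vector, y: Vector) -> List[float]:
    """Diagonal of the textbook inverse BFGS update applied to H = diag(h).

    Assumed formula:
    h_i - 2 h_i s_i y_i / (y^T s) + (1 + y^T H y / y^T s) s_i^2 / (y^T s).
    """
    ys = dot(y, s)
    if ys <= 0.0:
        return list(h)  # assumption: skip the update if y^T s <= 0
    yHy = sum(hi * yi * yi for hi, yi in zip(h, y))
    scale = (1.0 + yHy / ys) / ys
    return [hi - 2.0 * hi * si * yi / ys + scale * si * si
            for hi, si, yi in zip(h, s, y)]


def lbfgs_direction(grad: Vector,
                    pairs: Sequence[Tuple[Vector, Vector]],
                    h0: Vector) -> List[float]:
    """Standard L-BFGS two-loop recursion with diag(h0) as initial matrix.

    `pairs` holds (s, y) pairs ordered from oldest to newest.
    Returns the search direction -H * grad.
    """
    q = list(grad)
    alphas = []
    for s, y in reversed(pairs):          # newest pair first
        a = dot(s, q) / dot(y, s)
        alphas.append(a)
        q = [qi - a * yi for qi, yi in zip(q, y)]
    r = [hi * qi for hi, qi in zip(h0, q)]  # apply the diagonal initial matrix
    for (s, y), a in zip(pairs, reversed(alphas)):  # oldest pair first
        beta = dot(y, r) / dot(y, s)
        r = [ri + (a - beta) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]


if __name__ == "__main__":
    s, y = [1.0, 1.0], [2.0, 1.0]
    print(diag_bfgs_direct([1.0, 2.0], s, y))
    print(diag_bfgs_inverse([1.0, 0.5], s, y))
    print(lbfgs_direction([2.0, 1.0], [(s, y)], [1.0, 0.5]))
```

Because each function returns the diagonal of a matrix that is positive definite whenever the input diagonal is positive and y^T s > 0, the entries stay positive in this sketch, which is consistent with the positive definiteness property stated above. In the L-BFGS setting, the vector passed as `h0` would be the current diagonal approximation of the inverse Hessian instead of the usual scalar multiple of the identity.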

Data availability

The CUTEst dataset and the L-BFGS code used in this paper are openly available at https://github.com/ralna/CUTEst and https://github.com/ganguli-lab/minFunc, respectively. All other data supporting the findings of this study are available within the article.

Acknowledgements

Supported by the Chinese NSF grant 11771157. The authors would like to thank the editor and anonymous referees for their very helpful comments.

Cite this article

Li, D., Wang, X. & Huang, J. Diagonal BFGS updates and applications to the limited memory BFGS method. Comput Optim Appl 81, 829–856 (2022). https://doi.org/10.1007/s10589-022-00353-3

DOI: https://doi.org/10.1007/s10589-022-00353-3