Abstract

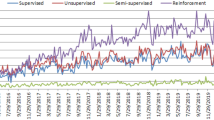

We investigate a powerful nonconvex optimization approach based on Difference of Convex functions (DC) programming and the DC Algorithm (DCA) for reinforcement learning, a general class of machine learning techniques that aim to estimate the optimal learning policy in a dynamic environment, typically formulated as a Markov decision process (with an incomplete model). The problem is tackled by finding a zero of the so-called optimal Bellman residual via linear value-function approximation, for which two optimization models are proposed: minimizing the \(\ell _{p}\)-norm of a vector-valued convex function, and minimizing a concave function under linear constraints. Both models are formulated as DC programs for which attractive DCA schemes are developed. Numerical experiments on various examples of two benchmark Markov decision process problems, Garnet and Gridworld, show the efficiency of our approaches in comparison with two existing DCA-based algorithms and two state-of-the-art reinforcement learning algorithms.
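To make the quantity being driven to zero concrete, the following is a minimal sketch of the optimal Bellman residual under a linear value-function approximation, together with its \(\ell _{p}\)-norm. It assumes the standard textbook definition \(T^{*}Q(s,a) = R(s,a) + \gamma \sum_{s'} P(s'|s,a)\max_{a'} Q(s',a')\) with a fully known model; the abstract itself does not state the formula. The function names, the array layout, and the tiny random MDP are illustrative assumptions, not the authors' implementation, and no DCA step is shown.

```python
import numpy as np

def optimal_bellman_residual(theta, phi, P, R, gamma):
    """Return T*Q_theta - Q_theta as an (S, A) array.

    Assumed (hypothetical) layout:
      phi : (S, A, d) feature tensor, Q_theta(s, a) = phi[s, a] @ theta
      P   : (S, A, S) transition probabilities
      R   : (S, A)    expected rewards
    """
    q = phi @ theta                   # linear approximation of Q, shape (S, A)
    v = q.max(axis=1)                 # greedy state values, shape (S,)
    return R + gamma * (P @ v) - q    # each entry is convex in theta

def residual_norm(theta, phi, P, R, gamma, p=2):
    """l_p norm of the flattened optimal Bellman residual."""
    res = optimal_bellman_residual(theta, phi, P, R, gamma)
    return np.linalg.norm(res.ravel(), ord=p)

# Tiny illustrative MDP with one-hot (tabular) features, so that the
# exact optimal Q-function is representable and the residual can vanish.
rng = np.random.default_rng(0)
S, A, gamma = 3, 2, 0.9
P = rng.random((S, A, S))
P /= P.sum(axis=2, keepdims=True)     # normalize rows to probabilities
R = rng.random((S, A))
phi = np.eye(S * A).reshape(S, A, S * A)

# Reference solution by plain value iteration on Q (not DCA).
Q = np.zeros((S, A))
for _ in range(2000):
    Q = R + gamma * (P @ Q.max(axis=1))
theta_star = Q.ravel()

norm_at_zero = residual_norm(np.zeros(S * A), phi, P, R, gamma)
norm_at_star = residual_norm(theta_star, phi, P, R, gamma)
```

With tabular features the residual norm at `theta_star` is numerically zero, while at `theta = 0` it equals the norm of the rewards. Each residual entry is a maximum of linear functions of `theta` minus a linear function, which is why the first model in the abstract is described as the norm of a vector-valued convex function.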

Cite this article

Le Thi, H., Ho, V. & Pham Dinh, T. A unified DC programming framework and efficient DCA based approaches for large scale batch reinforcement learning. J Glob Optim 73, 279–310 (2019). https://doi.org/10.1007/s10898-018-0698-y

DOI: https://doi.org/10.1007/s10898-018-0698-y