Abstract

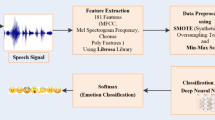

This study presents a new speech emotion recognition (SER) technique using temporal feature stacking and pooling (TFSP). First, Mel-frequency cepstral coefficients (MFCCs), Mel-spectrograms, and the emotional silence factor (ESF) are extracted from segmented audio samples. The normalized features are fed into a shallow neural network for training. The learned features are then passed through the proposed TFSP framework to obtain the final feature representation, and a linear support vector machine classifier is employed for emotion classification. The confusion matrices show that the proposed method extracts emotional content from speech signals efficiently and captures more distinctive emotional cues for commonly confused emotions. According to this study, a shallow neural network can perform as well as existing deep learning architectures such as CNNs, RNNs, and attention networks. Moreover, these highly complex networks employ millions of trainable parameters, resulting in a longer convergence time. The proposed method also uses data augmentation, artificially increasing the number of speakers by perturbing the vocal tract length.
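The abstract does not spell out how stacking and pooling are performed, so the following is only a minimal sketch of one plausible reading: frame-level features are pooled over a small temporal pyramid and the pooled statistics are concatenated into a fixed-length vector suitable for a linear classifier. The function name `temporal_stack_and_pool`, the `levels` parameter, and the choice of mean and max pooling are assumptions made for illustration, not the authors' published procedure.

```python
from statistics import mean


def temporal_stack_and_pool(frames, levels=(1, 2, 4)):
    """Pool frame-level features over temporal segments and stack the results.

    frames: list of per-frame feature vectors (equal-length lists of floats).
    levels: number of equal temporal segments per pyramid level (assumed values).
    Returns a flat list of length sum(levels) * feature_dim * 2.
    """
    if not frames:
        raise ValueError("need at least one frame")
    n_frames, dim = len(frames), len(frames[0])
    pooled = []
    for n_seg in levels:
        for s in range(n_seg):
            # Integer segment boundaries; every segment keeps at least one frame.
            start = (s * n_frames) // n_seg
            end = max(start + 1, ((s + 1) * n_frames) // n_seg)
            segment = frames[start:end]
            for d in range(dim):
                column = [frame[d] for frame in segment]
                # Hypothetical pooling choice: mean and max per feature dimension.
                pooled.append(mean(column))
                pooled.append(max(column))
    return pooled


# Toy input: 4 frames with 2 features each (illustrative values only).
toy_frames = [[0.0, 1.0], [2.0, 3.0], [4.0, 5.0], [6.0, 7.0]]
toy_vector = temporal_stack_and_pool(toy_frames)
```

Under these assumptions the output length depends only on `levels` and the feature dimension, not on the utterance duration, which is what lets utterances of different lengths share one linear SVM.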

The experiments are carried out on four emotional speech datasets covering different languages: the Berlin emotional speech dataset (EmoDB) in German, the Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS) in North American English, the Surrey Audio-Visual Expressed Emotion Database (SAVEE) in British English, and a newly constructed MNITJ-Simulated Emotional Hindi speech Database (MNITJ-SEHSD) in Hindi. The proposed framework achieved overall accuracies of 95.09%, 90.20%, 95.50% and 94.67% on EmoDB, RAVDESS, SAVEE and MNITJ-SEHSD, respectively, at much lower computational complexity. These findings are compared against three existing baseline architectures on the same databases. Classification accuracy, precision, recall and F1-score are used to validate the developed method.
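As a reminder of how the four validation metrics relate to a confusion matrix, the sketch below computes accuracy together with per-class and macro-averaged precision, recall and F1-score. The function name `classification_metrics` and the toy matrix are illustrative assumptions; the abstract does not say whether the reported scores use macro or weighted averaging, and the numbers here are not taken from the paper.

```python
def classification_metrics(confusion):
    """Compute accuracy and per-class precision, recall and F1.

    confusion[i][j] is the number of samples of true class i predicted as class j.
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[k][k] for k in range(n))
    per_class = []
    for k in range(n):
        tp = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                          # row sum
        precision = tp / predicted_k if predicted_k else 0.0
        recall = tp / actual_k if actual_k else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        per_class.append((precision, recall, f1))
    # Macro average: unweighted mean over classes (an assumed averaging scheme).
    macro = tuple(sum(m[i] for m in per_class) / n for i in range(3))
    return {
        "accuracy": correct / total if total else 0.0,
        "per_class": per_class,
        "macro": macro,
    }


# Toy two-class confusion matrix (made-up counts, not results from the paper).
toy_report = classification_metrics([[8, 2], [1, 9]])
```

Macro averaging weights every emotion class equally, which matters when a dataset has unequal numbers of utterances per emotion.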

Acknowledgements

The authors would like to acknowledge the support of the ADSIP Laboratory at MNIT, Jaipur, for providing all the facilities for carrying out the work.

Funding

The authors received no financial support for their research, writing, or publication of this paper.

Author information

Contributions

Krishna Chauhan: Conceptualization, Methodology, Python implementation, Investigation, Validation, Writing - original draft, Writing - review & editing. Kamalesh Kumar Sharma: Supervision, Project administration, Investigation, Validation, Review & editing. Tarun Varma: Supervision, Project administration, Investigation, Validation, Review & editing.

Ethics declarations

In research involving human participants, all procedures were carried out in compliance with ethical guidelines.

Conflict of Interests

The authors declare that they have no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Chauhan, K., Sharma, K.K. & Varma, T. A method for simplifying the spoken emotion recognition system using a shallow neural network and temporal feature stacking & pooling (TFSP). Multimed Tools Appl 82, 11265–11283 (2023). https://doi.org/10.1007/s11042-022-13463-1

DOI: https://doi.org/10.1007/s11042-022-13463-1