Abstract

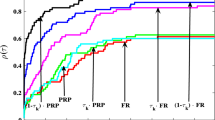

In this paper, we use Gram-Schmidt orthogonalization to propose a class of modified conjugate gradient methods. The methods are modifications of well-known conjugate gradient methods, including the Polak-Ribière-Polyak (PRP), Hestenes-Stiefel (HS), Fletcher-Reeves (FR) and Dai-Yuan (DY) methods. A common property of the modified methods is that the direction generated by any member of the class satisfies \(g_{k}^{T}d_k=-\|g_k\|^2\), independently of the line search. Moreover, if the line search is exact, each modified method reduces to the corresponding standard conjugate gradient method. In particular, we study the modified YT and YT+ methods and, under suitable conditions, prove the global convergence of these two methods. Extensive numerical experiments show that the proposed methods are efficient on test problems from the CUTE library.
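The two properties stated above can be illustrated with a minimal sketch. It assumes the modified direction takes the form \(d_k = -g_k + \beta_k\bigl(d_{k-1} - \frac{g_k^{T}d_{k-1}}{\|g_k\|^2}\,g_k\bigr)\), that is, one Gram-Schmidt step removing the component of \(d_{k-1}\) along \(g_k\). The abstract does not give the formula, so this form is an assumption chosen to be consistent with the stated properties; the helper names `dot`, `beta_prp` and `modified_direction` are hypothetical, and the PRP parameter is used only as an example choice of \(\beta_k\).

```python
def dot(u, v):
    """Euclidean inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def beta_prp(g, g_prev):
    """Standard PRP parameter: g^T (g - g_prev) / ||g_prev||^2."""
    y = [a - b for a, b in zip(g, g_prev)]
    return dot(g, y) / dot(g_prev, g_prev)


def modified_direction(g, d_prev, beta):
    """Assumed form: d = -g + beta * (d_prev - (g^T d_prev / ||g||^2) * g).

    The bracketed term is d_prev with its component along g removed
    (one Gram-Schmidt step), so g^T d = -||g||^2 for any beta.
    """
    gg = dot(g, g)
    if gg == 0.0:
        # Stationary point: no descent direction to build.
        return [0.0] * len(g)
    coef = dot(g, d_prev) / gg
    return [-gi + beta * (di - coef * gi) for gi, di in zip(g, d_prev)]


# Arbitrary illustrative data (not taken from the paper).
g_prev = [1.0, -2.0, 0.5]
g = [0.5, 1.0, -1.5]
d_prev = [-1.0, 2.0, -0.5]

d = modified_direction(g, d_prev, beta_prp(g, g_prev))
# Should vanish up to rounding: g^T d + ||g||^2.
descent_gap = dot(g, d) + dot(g, g)

# Exact line search case: g is orthogonal to d_prev, so the correction
# term vanishes and the direction equals the standard -g + beta * d_prev.
g_exact = [1.0, 0.0]
d_prev_exact = [0.0, 2.0]
d_exact = modified_direction(g_exact, d_prev_exact, 0.5)
d_standard = [-gi + 0.5 * di for gi, di in zip(g_exact, d_prev_exact)]
```

Under this assumed form, the identity \(g_k^{T}d_k=-\|g_k\|^2\) holds for any choice of \(\beta_k\), which is why the same construction can be applied to each of the PRP, HS, FR and DY parameters.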

References

Bongartz, I., Conn, A.R., Gould, N.I.M., Toint, P.L.: CUTE: constrained and unconstrained testing environments. ACM Trans. Math. Softw. 21, 123–160 (1995)

Birgin, E.G., Martínez, J.M.: A spectral conjugate gradient method for unconstrained optimization. Appl. Math. Optim. 43, 117–128 (2001)

Dai, Y.H., Liao, L.Z.: New conjugacy conditions and related nonlinear conjugate gradient methods. Appl. Math. Optim. 43, 87–101 (2001)

Dai, Y.H., Yuan, Y.: A nonlinear conjugate gradient method with a strong global convergence property. SIAM J. Optim. 10, 177–182 (2000)

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91, 201–213 (2002)

Gilbert, J.C., Nocedal, J.: Global convergence properties of conjugate gradient methods for optimization. SIAM J. Optim. 2, 21–42 (1992)

Hager, W.W., Zhang, H.: A survey of nonlinear conjugate gradient methods. Pacific J. Optim. 2, 35–58 (2006)

Hager, W.W., Zhang, H.: A new conjugate gradient method with guaranteed descent and an efficient line search. SIAM J. Optim. 16, 170–192 (2005)

Hestenes, M.R., Stiefel, E.L.: Methods of conjugate gradients for solving linear systems. J. Res. Nat. Bur. Stand. 49, 409–436 (1952)

Liu, D.C., Nocedal, J.: On the limited memory BFGS method for large scale optimization. Math. Program. 45, 503–528 (1989)

Wei, Z., Li, G., Qi, L.: New nonlinear conjugate gradient formulas for large-scale unconstrained optimization problems. Appl. Math. Comput. 179, 407–430 (2006)

Yabe, H., Takano, M.: Global convergence properties of nonlinear conjugate gradient methods with modified secant condition. Comput. Optim. Appl. 28, 203–225 (2004)

Zhang, L., Zhou, W.J., Li, D.H.: A descent modified Polak-Ribiere-Polyak conjugate gradient method and its global convergence. IMA J. Numer. Anal. 26, 629–640 (2006)

Zhang, L., Zhou, W.J., Li, D.H.: Global convergence of a modified Fletcher-Reeves conjugate gradient method with Armijo-type line search. Numer. Math. 104, 561–572 (2006)

Zhang, J.Z., Deng, N.Y., Chen, L.H.: New quasi-Newton equation and related methods for unconstrained optimization. J. Optim. Theory Appl. 102, 147–167 (1999)

Zhang, J.Z., Xu, C.X.: Properties and numerical performance of quasi-Newton methods with modified quasi-Newton equations. J. Comput. Appl. Math. 137, 269–278 (2001)

Additional information

Supported by the NSF of China via grant 10771057.

About this article

Cite this article

Cheng, W., Liu, Q. Sufficient descent nonlinear conjugate gradient methods with conjugacy condition. Numer Algor 53, 113–131 (2010). https://doi.org/10.1007/s11075-009-9318-8

DOI: https://doi.org/10.1007/s11075-009-9318-8