Abstract

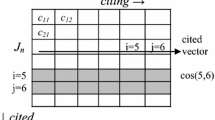

In this paper, we propose a new means of quantifying the interdisciplinarity of journals by exploiting the bipartite relation between scholars and the journals in which they publish. Our approach is entirely data-driven (i.e., unsupervised): we rely only on the spectral properties of the bipartite bibliometric network, without requiring any a priori classification or labeling of scholars or journals. The approach consists of two successive steps. First, the structure of the bipartite graph is used to co-cluster journals and scholars in the same low-dimensional space. Then, we measure a journal’s interdisciplinarity by computing several diversity metrics (Shannon entropy, Simpson diversity, Rao-Stirling index) over the journal’s distances from these clusters. The proposed approach is evaluated on a dataset comprising 1258 journals and 2570 scholars in the information and communication technology (ICT) field.
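As a rough illustration of the first step, the sketch below embeds journals and scholars in a common low-dimensional space using the bipartite spectral co-clustering scheme of Dhillon (2001), then groups both kinds of nodes with k-means. It is a sketch under stated assumptions, not the authors' implementation: the journal × scholar count matrix `A`, the rule of keeping ceil(log2 K) non-trivial singular vectors, the deterministic k-means initialisation and all function names are illustrative choices.

```python
import numpy as np


def kmeans(X, k, n_iter=100):
    """Lloyd's k-means with a deterministic farthest-point initialisation."""
    centers = [X[0]]
    for _ in range(1, k):
        # squared distance from every row to its nearest already-chosen center
        d = np.min([((X - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(X[np.argmax(d)])
    centers = np.array(centers, dtype=float)
    for _ in range(n_iter):
        dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        # recompute centroids; keep the old center if a cluster becomes empty
        new = np.array([X[labels == c].mean(axis=0) if np.any(labels == c) else centers[c]
                        for c in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels


def spectral_cocluster(A, k):
    """Co-cluster the rows (journals) and columns (scholars) of a nonnegative matrix A.

    Bipartite spectral scheme after Dhillon (2001); assumes A has no all-zero rows or columns.
    """
    A = np.asarray(A, dtype=float)
    r1 = np.sqrt(A.sum(axis=1))            # square roots of journal degrees
    r2 = np.sqrt(A.sum(axis=0))            # square roots of scholar degrees
    An = A / r1[:, None] / r2[None, :]     # D1^{-1/2} A D2^{-1/2}
    U, _, Vt = np.linalg.svd(An, full_matrices=False)
    l = max(1, int(np.ceil(np.log2(k))))   # number of non-trivial singular vectors kept
    # drop the trivial first singular pair and map back with D^{-1/2}
    Z = np.vstack([U[:, 1:l + 1] / r1[:, None], Vt[1:l + 1, :].T / r2[:, None]])
    labels = kmeans(Z, k)
    m = A.shape[0]
    return labels[:m], labels[m:], Z


# Toy example: two journal/scholar groups joined by one weak link (journal 2, scholar 3).
A = np.array([[3, 3, 3, 0, 0, 0],
              [3, 3, 3, 0, 0, 0],
              [3, 3, 3, 1, 0, 0],
              [0, 0, 0, 3, 3, 3],
              [0, 0, 0, 3, 3, 3],
              [0, 0, 0, 3, 3, 3]])
journal_labels, scholar_labels, Z = spectral_cocluster(A, k=2)
```

With this toy matrix, journals 0-2 and scholars 0-2 end up in one co-cluster and the remaining journals and scholars in the other, because journals and scholars share the same embedding `Z` and are clustered jointly.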


Notes

The Cineca database is freely accessible at http://cercauniversita.cineca.it/php5/docenti/cerca.php.

In Carusi and Bianchi (2019), the final pre-processing step discarded journals with papers published by fewer than five different scholars, and then discarded scholars with fewer than five publications in the remaining set of journals. In the present work, before applying this final step, we also excluded journals with fewer than five distinct publications authored by the scholars in the dataset.
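The filtering described in this note can be sketched as three successive passes over a list of (scholar, journal, paper) records. The record layout, the function name `filter_records` and the `min_count` parameter are hypothetical; the sketch applies each pass once, in the order given above, since the note does not say whether the passes were repeated until nothing more was removed.

```python
from collections import defaultdict


def filter_records(records, min_count=5):
    """Apply the three pruning passes described in the note, once each, in order.

    `records` is an iterable of (scholar, journal, paper) tuples: a hypothetical layout
    with one tuple per scholar-authored paper (co-authored papers appear once per author).
    """
    records = list(records)

    # Pass 1 (present work): keep journals with >= min_count distinct papers by dataset scholars.
    papers_per_journal = defaultdict(set)
    for scholar, journal, paper in records:
        papers_per_journal[journal].add(paper)
    records = [r for r in records if len(papers_per_journal[r[1]]) >= min_count]

    # Pass 2: keep journals whose papers come from >= min_count distinct scholars.
    scholars_per_journal = defaultdict(set)
    for scholar, journal, paper in records:
        scholars_per_journal[journal].add(scholar)
    records = [r for r in records if len(scholars_per_journal[r[1]]) >= min_count]

    # Pass 3: keep scholars with >= min_count distinct papers on the surviving journals.
    papers_per_scholar = defaultdict(set)
    for scholar, journal, paper in records:
        papers_per_scholar[scholar].add(paper)
    return [r for r in records if len(papers_per_scholar[r[0]]) >= min_count]


# Toy usage with a threshold of 2 instead of 5.
toy = [("s1", "J1", "p1"), ("s2", "J1", "p2"), ("s4", "J1", "p8"),
       ("s1", "J2", "p3"), ("s2", "J2", "p3"),
       ("s3", "J3", "p4"), ("s3", "J3", "p5"),
       ("s1", "J4", "p6"), ("s2", "J4", "p7")]
kept = filter_records(toy, min_count=2)
```

In the toy data, J2 is removed by the first pass (one distinct paper), J3 by the second (a single scholar) and s4 by the third (one remaining paper), leaving journals J1 and J4 and scholars s1 and s2.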

Since it has one entry for each individual scholar (\(n=2570\) in the ICT case), \(\mathbf{a}_l\) is generally a high-dimensional, sparse vector.

We borrow only the term “concept vectors” from Dhillon and Modha (2001), because we use the same formulation in terms of normalized centroids after K-Means clustering. Note, however, that our concept vectors are not defined on the original high-dimensional dataset, as in Dhillon and Modha (2001), but in the reduced SVD space. The properties of concept vectors discussed in Dhillon and Modha (2001) therefore do not apply to our case.
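A minimal sketch of concept vectors as unit-norm centroids computed in the reduced space, assuming `Z` holds the SVD coordinates of the clustered items (one row each) and `labels` their K-Means cluster indices; the function and argument names are illustrative, not taken from the paper.

```python
import numpy as np


def concept_vectors(Z, labels, k):
    """Return a k x l array whose c-th row is the unit-norm centroid of cluster c.

    Z: points in the reduced SVD space (one row per journal or scholar).
    labels: K-Means cluster index of each row; every cluster is assumed non-empty
    with a non-zero centroid.
    """
    Z = np.asarray(Z, dtype=float)
    labels = np.asarray(labels)
    C = np.empty((k, Z.shape[1]))
    for c in range(k):
        centroid = Z[labels == c].mean(axis=0)
        C[c] = centroid / np.linalg.norm(centroid)   # normalize to unit length
    return C


C = concept_vectors([[2, 0], [4, 0], [0, 3], [0, 5]], [0, 0, 1, 1], k=2)
```

Here the two centroids, (3, 0) and (0, 4), are rescaled to the unit vectors (1, 0) and (0, 1); only their direction in the reduced space is retained.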

For the choice of the number of clusters, see the discussion in Carusi and Bianchi (2019).

In factor analysis, inspecting the factor loadings is common practice to aid the interpretation of results. Sidorova et al. (2008) provide a similar example for document-word analysis.

The Simpson index is bounded between 0 and 1 by definition. To keep all diversity metrics within the same range [0, 1], we set the logarithm base of the Shannon entropy equal to the total number of communities K. For the Rao-Stirling index, since there is no finite maximum possible distance d, we expressed all distances as a proportion of the maximum pairwise distance \(\max_{i,j} d(\mathcal{C}_i, \mathcal{C}_j)\).
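The normalizations in this note can be sketched as follows, assuming each journal has already been summarized by proportions p_1, ..., p_K over the K communities (how these are derived from the journal's distances to the clusters is not restated here) and that `D` is a K × K matrix of inter-community distances. The Simpson function uses the Gini-Simpson form 1 - Σ p_i², one common reading of “Simpson diversity”, and the Rao-Stirling sum runs over ordered pairs i ≠ j; both are assumptions of this sketch, and the function names are hypothetical.

```python
import math


def shannon(p, K):
    """Shannon entropy with logarithm base K (K >= 2), so the value lies in [0, 1]."""
    return -sum(pi * math.log(pi, K) for pi in p if pi > 0)


def simpson(p):
    """Gini-Simpson diversity 1 - sum(p_i^2), which lies in [0, 1)."""
    return 1.0 - sum(pi * pi for pi in p)


def rao_stirling(p, D):
    """Rao-Stirling diversity with distances rescaled by the maximum pairwise distance.

    D is a K x K symmetric matrix of inter-community distances d(C_i, C_j).
    """
    d_max = max(max(row) for row in D)
    K = len(p)
    return sum((D[i][j] / d_max) * p[i] * p[j]
               for i in range(K) for j in range(K) if i != j)


p = [0.5, 0.5]                 # a journal split evenly between two communities
D = [[0.0, 2.0], [2.0, 0.0]]   # hypothetical distance between the two communities
scores = (shannon(p, K=2), simpson(p), rao_stirling(p, D))
```

Since every rescaled distance is at most 1, the Rao-Stirling value never exceeds the Gini-Simpson value, so all three scores share the [0, 1] range described in this note.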

Although not evident from the 5-community classification, lower-level communities emerge when we increase the number of co-clusters to 6, 7, 8 and 9, corresponding respectively to research on propagation and antennas, pattern recognition, bioinformatics, and pervasive computing. We refer the reader to Carusi and Bianchi (2019) for a full discussion of the results obtained with different values of the input parameter K of the co-clustering algorithm.

The SCImago Journal Rank is publicly available at https://www.scimagojr.com/journalrank.php.

Of the 1258 journals in the ICT dataset, 133 had no SJR score retrieved from the SCImago database, mainly because of differences in title wording.

References

Bache, K., Newman, D., & Smyth, P. (2013). Text-based measures of document diversity. In: Proceedings of the 19th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 23–31). ACM.

Barjak, F. (2006). Team diversity and research collaboration in life sciences teams: Does a combination of research cultures pay off? Series A: Discussion Paper 2006-W02, University of Applied Sciences, Switzerland.

Bordons, M., Zulueta, M. A., Romero, F., & Barrigón, S. (1999). Measuring interdisciplinary collaboration within a university: The effects of the multidisciplinary research programme. Scientometrics, 46(3), 383–398.

Boyack, K. W., & Klavans, R. (2011). Multiple dimensions of journal specificity: Why journals can’t be assigned to disciplines. In: The 13th conference of the international society for scientometrics and informetrics, ISSI, Leiden University and the University of Zululand Durban, South Africa (vol 1, pp. 123–133).

Boyack, K. W., Klavans, R., & Börner, K. (2005). Mapping the backbone of science. Scientometrics, 64(3), 351–374.

Carusi, C., & Bianchi, G. (2019). Scientific community detection via bipartite scholar/journal graph co-clustering. Journal of Informetrics, 13(1), 354–386.

Chen, S., Arsenault, C., Gingras, Y., & Larivière, V. (2015). Exploring the interdisciplinary evolution of a discipline: the case of biochemistry and molecular biology. Scientometrics, 102(2), 1307–1323.

Dhillon, I. S. (2001). Co-clustering documents and words using bipartite spectral graph partitioning. In: Proceedings of the seventh ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 269–274). ACM.

Dhillon, I. S., & Modha, D. S. (2001). Concept decompositions for large sparse text data using clustering. Machine Learning, 42(1–2), 143–175.

Glänzel, W., & Schubert, A. (2003). A new classification scheme of science fields and subfields designed for scientometric evaluation purposes. Scientometrics, 56(3), 357–367.

Huutoniemi, K., Klein, J. T., Bruun, H., & Hukkinen, J. (2010). Analyzing interdisciplinarity: Typology and indicators. Research Policy, 39(1), 79–88.

Klavans, R., & Boyack, K. W. (2009). Toward a consensus map of science. Journal of the American Society for Information Science and Technology, 60(3), 455–476.

Lattanzi, S., & Sivakumar, D. (2009). Affiliation networks. In: Proceedings of the forty-first annual ACM symposium on Theory of computing (pp. 427–434). ACM.

Leinster, T., & Cobbold, C. A. (2012). Measuring diversity: The importance of species similarity. Ecology, 93(3), 477–489.

Leydesdorff, L. (2007). Betweenness centrality as an indicator of the interdisciplinarity of scientific journals. Journal of the American Society for Information Science and Technology, 58(9), 1303–1319.

Leydesdorff, L., & Rafols, I. (2009). A global map of science based on the ISI subject categories. Journal of the American Society for Information Science and Technology, 60(2), 348–362.

Leydesdorff, L., & Rafols, I. (2011). Indicators of the interdisciplinarity of journals: Diversity, centrality, and citations. Journal of Informetrics, 5(1), 87–100.

Leydesdorff, L., de Moya-Anegón, F., & Guerrero-Bote, V. P. (2015). Journal maps, interactive overlays, and the measurement of interdisciplinarity on the basis of Scopus data (1996–2012). Journal of the Association for Information Science and Technology, 66(5), 1001–1016.

Minguillo, D. (2010). Toward a new way of mapping scientific fields: Authors’ competence for publishing in scholarly journals. Journal of the American Society for Information Science and Technology, 61(4), 772–786.

Mitesser, O., Heinz, M., Havemann, F., & Gläser, J. (2008). Measuring diversity of research by extracting latent themes from bipartite networks of papers and references. In: 4th International conference on webometrics, informetrics and scientometrics (WIS’08), Gesellschaft für Wissenschaftsforschung Berlin.

Morillo, F., Bordons, M., & Gómez, I. (2003). Interdisciplinarity in science: A tentative typology of disciplines and research areas. Journal of the American Society for Information Science and Technology, 54(13), 1237–1249.

Ni, C., Sugimoto, C. R., & Jiang, J. (2013). Venue-author-coupling: A measure for identifying disciplines through author communities. Journal of the American Society for Information Science and Technology, 64(2), 265–279.

Park, H., Jeon, M., & Rosen, J. B. (2003). Lower dimensional representation of text data based on centroids and least squares. BIT Numerical Mathematics, 43(2), 427–448.

Porter, A., & Rafols, I. (2009). Is science becoming more interdisciplinary? Measuring and mapping six research fields over time. Scientometrics, 81(3), 719–745.

Porter, A., Cohen, A., Roessner, J. D., & Perreault, M. (2007). Measuring researcher interdisciplinarity. Scientometrics, 72(1), 117–147.

Rafols, I., & Meyer, M. (2009). Diversity and network coherence as indicators of interdisciplinarity: Case studies in bionanoscience. Scientometrics, 82(2), 263–287.

Rafols, I., Porter, A. L., & Leydesdorff, L. (2010). Science overlay maps: A new tool for research policy and library management. Journal of the American Society for Information Science and Technology, 61(9), 1871–1887.

Rao, C. R. (1982). Diversity and dissimilarity coefficients: A unified approach. Theoretical Population Biology, 21(1), 24–43.

Rinia, E., Van Leeuwen, T. N., Van Vuren, H., & Van Raan, A. (2001). Influence of interdisciplinarity on peer-review and bibliometric evaluations in physics research. Research Policy, 30(3), 357–361.

Schmidt, M., Gläser, J., Havemann, F., & Heinz, M. (2006). A methodological study for measuring the diversity of science. In: International workshop on webometrics, informetrics and scientometrics.

Shannon, C. E. (1948). A mathematical theory of communication. Bell System Technical Journal, 27(3), 379–423.

Sidorova, A., Evangelopoulos, N., Valacich, J. S., & Ramakrishnan, T. (2008). Uncovering the intellectual core of the information systems discipline. MIS Quarterly, 32, 467–482.

Silva, F. N., Rodrigues, F. A., Oliveira, O. N., Jr., & Costa, L. da F. (2013). Quantifying the interdisciplinarity of scientific journals and fields. Journal of Informetrics, 7(2), 469–477.

Simpson, E. H. (1949). Measurement of diversity. Nature, 163(4148), 688.

Stirling, A. (1998). On the economics and analysis of diversity. Science Policy Research Unit (SPRU), Electronic Working Papers Series, Paper 28:1–156.

Stirling, A. (2007). A general framework for analysing diversity in science, technology and society. Journal of the Royal Society Interface, 4(15), 707–719.

Šubelj, L., van Eck, N. J., & Waltman, L. (2016). Clustering scientific publications based on citation relations: A systematic comparison of different methods. PLoS ONE, 11(4), e0154404.

Van den Besselaar, P., Heimeriks, G., et al. (2001). Disciplinary, multidisciplinary, interdisciplinary: Concepts and indicators. In: ISSI (pp. 705–716).

van Eck, N. J., & Waltman, L. (2017). Citation-based clustering of publications using CitNetExplorer and VOSviewer. Scientometrics, 111(2), 1053–1070.

Van Leeuwen, T., & Tijssen, R. (2000). Interdisciplinary dynamics of modern science: Analysis of cross-disciplinary citation flows. Research Evaluation, 9(3), 183–187.

Van Raan, A. F., & Van Leeuwen, T. N. (2002). Assessment of the scientific basis of interdisciplinary, applied research: Application of bibliometric methods in nutrition and food research. Research Policy, 31(4), 611–632.

Wagner, C. S., Roessner, J. D., Bobb, K., Klein, J. T., Boyack, K. W., Keyton, J., et al. (2011). Approaches to understanding and measuring interdisciplinary scientific research (IDR): A review of the literature. Journal of Informetrics, 5(1), 14–26.

Waltman, L., & van Eck, N. J. (2012). A new methodology for constructing a publication-level classification system of science. Journal of the American Society for Information Science and Technology, 63(12), 2378–2392.

Wasserman, S., & Faust, K. (1994). Social network analysis: Methods and applications (Vol. 8). Cambridge: Cambridge University Press.

Zitt, M. (2005). Facing diversity of science: A challenge for bibliometric indicators. Measurement: Interdisciplinary Research and Perspectives, 3(1), 38–49.


About this article

Cite this article

Carusi, C., Bianchi, G. A look at interdisciplinarity using bipartite scholar/journal networks. Scientometrics 122, 867–894 (2020). https://doi.org/10.1007/s11192-019-03309-3


DOI: https://doi.org/10.1007/s11192-019-03309-3