Abstract

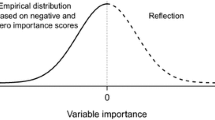

Random forests are widely used in many research fields for prediction and interpretation purposes. Their popularity is rooted in several appealing characteristics, such as their ability to deal with high-dimensional data, complex interactions and correlations between variables. Another important feature is that random forests provide variable importance measures that can be used to identify the most important predictor variables. However, existing methods for computing such measures cannot be applied straightforwardly when the data contain missing values, although workarounds such as complete case analysis and imputation exist. This paper presents a solution to this pitfall by introducing a new variable importance measure that is applicable to any kind of data, whether or not it contains missing values. An extensive simulation study shows that the new measure meets sensible requirements and has good variable ranking properties. An application to two real data sets also indicates that the new approach may provide a more sensible variable ranking than the widespread complete case analysis. Because it takes the occurrence of missing values into account, its results also differ from those obtained under multiple imputation.

Cite this article

Hapfelmeier, A., Hothorn, T., Ulm, K. et al. A new variable importance measure for random forests with missing data. Stat Comput 24, 21–34 (2014). https://doi.org/10.1007/s11222-012-9349-1

DOI: https://doi.org/10.1007/s11222-012-9349-1