Abstract

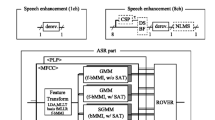

We propose a novel speaker-dependent (SD) multi-condition (MC) training approach for jointly learning deep neural network (DNN) acoustic models and an explicit speech separation structure, aimed at recognizing multi-talker mixed speech in a single-channel setting. First, an MC acoustic modeling framework is established to train an SD-DNN model in multi-talker scenarios. Such a recognizer significantly reduces decoding complexity and improves recognition accuracy over systems that use speaker-independent DNN models with a complicated joint decoding structure, assuming the speaker identities in the mixed speech are known. In addition, an SD regression DNN that maps the acoustic features of mixed speech to the speech features of a target speaker is jointly trained with the SD-DNN based acoustic models. Experimental results on the Speech Separation Challenge (SSC) small-vocabulary recognition task show that the proposed approach under multi-condition training achieves an average word error rate (WER) of 3.8%, a relative WER reduction of 65.1% from the top-performing, DNN-based, pre-processing-only approach we proposed earlier under clean-condition training (Tu et al. 2016). Furthermore, the proposed joint-training DNN framework yields a relative WER reduction of 13.2% from state-of-the-art systems under multi-condition training. Finally, the effectiveness of the proposed approach is also verified on the Wall Street Journal (WSJ0) medium-vocabulary continuous speech recognition task in a simulated multi-talker setting.
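For readers who want to check how a relative WER reduction relates to absolute WERs, the short sketch below works through the arithmetic. It assumes the usual definition, relative reduction = (baseline WER − new WER) / baseline WER; the function names are hypothetical, and the baseline value it prints is only what the 3.8% and 65.1% figures imply under that definition, not a number reported in the abstract.

```python
def relative_reduction(baseline_wer: float, new_wer: float) -> float:
    """Relative WER reduction in percent, assuming the usual definition."""
    if baseline_wer <= 0:
        raise ValueError("baseline_wer must be positive")
    return 100.0 * (baseline_wer - new_wer) / baseline_wer


def implied_baseline(new_wer: float, reduction_pct: float) -> float:
    """Baseline WER implied by a new WER and a relative reduction (percent)."""
    if not 0.0 <= reduction_pct < 100.0:
        raise ValueError("reduction_pct must be in [0, 100)")
    return new_wer / (1.0 - reduction_pct / 100.0)


# Figures quoted in the abstract: 3.8% WER after a 65.1% relative reduction.
baseline = implied_baseline(3.8, 65.1)
print(f"implied baseline WER: {baseline:.1f}%")

# Round trip: recomputing the reduction from the implied baseline gives 65.1%.
print(f"relative reduction: {relative_reduction(baseline, 3.8):.1f}%")
```

Under this assumed definition, the clean-condition baseline would sit at roughly 10.9% WER; the exact figure should be taken from the cited work rather than from this back-calculation.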

References

Tu, Y., Du, J., Dai, L., & Lee, C. (2016). In Proc. ISCSLP.

Radfar, M.H., & Dansereau, R.M. (2007). IEEE Transactions on Audio, Speech, and Language Processing, 15(8), 2299.

Cooke, M., Hershey, J.R., & Rennie, S.J. (2010). Computer Speech and Language, 24(1), 1.

Kristjansson, T.T., Hershey, J.R., Olsen, P.A., Rennie, S.J., & Gopinath, R.A. (2006). In Proc. Annual Conference of the International Speech Communication Association (INTERSPEECH).

Virtanen, T. (2006). In Proc. Annual Conference of the International Speech Communication Association (INTERSPEECH).

Weiss, R.J., & Ellis, D.P.W. (2007). In Proc. IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA) (pp. 114–117).

Ghahramani, Z., & Jordan, M.I. (1997). Machine Learning, 29, 245.

Schmidt, M.N., & Olsson, R. (2006). In Proc. Annual Conference of the International Speech Communication Association (INTERSPEECH).

Jackson, E.P. (2006). In Proc. Annual Conference of the International Speech Communication Association (INTERSPEECH).

Wang, D., & Brown, G.J. (2006). Journal of the Acoustical Society of America, 124(1), 13.

Barker, J., Ma, N., Coy, A., & Cooke, M. (2010). Computer Speech and Language, 24(1), 94.

Ming, J., Hazen, T.J., & Glass, J.R. (2010). Computer Speech and Language, 24(1), 67.

Shao, Y., Srinivasan, S., Jin, Z., & Wang, D. (2010). Computer Speech and Language, 24(1), 77.

Reynolds, D.A., & Rose, R.C. (1995). IEEE Transactions on Speech and Audio Processing, 3(1), 72.

Hinton, G.E., & Salakhutdinov, R. (2006). Science, 313(5786), 504.

Hinton, G.E., Osindero, S., & Teh, Y. (2006). Neural Computation, 18(7), 1527.

Dahl, G.E., Yu, D., Deng, L., & Acero, A. (2012). IEEE Transactions on Audio, Speech, and Language Processing.

Mohamed, A., Dahl, G.E., & Hinton, G.E. (2012). IEEE Transactions on Audio, Speech, and Language Processing, 20(1), 14.

Hinton, G.E., Deng, L., Yu, D., Dahl, G.E., Mohamed, A., Jaitly, N., Senior, A.W., Vanhoucke, V., Nguyen, P., Sainath, T.N., et al. (2012). IEEE Signal Processing Magazine, 29(6), 82.

Du, J., Tu, Y., Dai, L., & Lee, C. (2016). IEEE Transactions on Audio, Speech, and Language Processing, 24(8), 1424.

Huang, P., Kim, M., Hasegawa-Johnson, M., & Smaragdis, P. (2015). IEEE Transactions on Audio, Speech, and Language Processing, 23(12), 2136.

Zöhrer, M., Peharz, R., & Pernkopf, F. (2015). IEEE Transactions on Audio, Speech, and Language Processing, 23(12), 2398.

Weng, C., Yu, D., Seltzer, M.L., & Droppo, J. (2015). IEEE Transactions on Audio, Speech, and Language Processing, 23(10), 1670.

Mohri, M., Pereira, F., & Riley, M.P. (2002). Computer Speech and Language, 16(1), 69.

Nadeu, C., Macho, D., & Hernando, J. (2000). Speech Communication, 34(1), 93.

Paul, D.B., & Baker, J.M. (1992). In Proc. 5th DARPA Speech and Natural Language Workshop (pp. 357–362).

Tu, Y., Du, J., Dai, L., & Lee, C. (2015). In Proc. ICASSP (pp. 61–65).

Yu, D., Seltzer, M.L., Li, J., & Seide, F. (2013). In Proc. CoRR, Vol. 1301.

Zhang, Y., & Glass, J.R. (2009). In Proc. IEEE Automatic Speech Recognition and Understanding Workshop (ASRU).

Wilpon, J.G., Lee, C.H., & Rabiner, L.R. (1989). In Proc. ICASSP (pp. 254–257).

Tu, Y., Du, J., Dai, L., & Lee, C. (2015). In Proc. ICSP (pp. 532–536).

Gales, M. (1998). Computer Speech and Language, 12(2), 75.

Hu, Y., & Huo, Q. (2007). In Proc. Annual Conference of the International Speech Communication Association (INTERSPEECH).

Bengio, Y. (2009). Foundations and Trends in Machine Learning, 2(1), 1.

Ephraim, Y., & Malah, D. (1984). IEEE Transactions on Acoustics, Speech, and Signal Processing, 32(6), 1109.

Seide, F., Li, G., Chen, X., & Yu, D. (2011). In Proc. IEEE Automatic Speech Recognition and Understanding Workshop (ASRU).

De Boer, P., Kroese, D.P., Mannor, S., & Rubinstein, R.Y. (2005). Annals of Operations Research, 134(1), 19.

Cooke, M., & Lee, T.W. (2016). http://staffwww.dcs.shef.ac.uk/people/M.Cooke/SpeechSeparationChallenge.htm.

Cooke, M., Barker, J., Cunningham, S., & Shao, X. (2006). Journal of the Acoustical Society of America, 120(5), 2421.

Xu, Y., Du, J., Dai, L., & Lee, C. (2014). IEEE Signal Processing Letters, 21(1), 65.

Hinton, G.E. A practical guide to training restricted Boltzmann machines (University of Toronto, 2010).

Acknowledgements

This work was supported by the National Natural Science Foundation of China under Grant No. 61671422.

Cite this article

Tu, YH., Du, J. & Lee, CH. A Speaker-Dependent Approach to Single-Channel Joint Speech Separation and Acoustic Modeling Based on Deep Neural Networks for Robust Recognition of Multi-Talker Speech. J Sign Process Syst 90, 963–973 (2018). https://doi.org/10.1007/s11265-017-1295-x

DOI: https://doi.org/10.1007/s11265-017-1295-x