Abstract

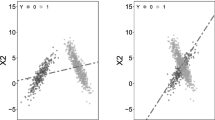

A model for improving the robustness of sparse principal component analysis (PCA) is proposed in this paper. Instead of the L2-norm variance used in the conventional sparse PCA model, the proposed model maximizes the L1-norm variance, which is less sensitive to noise and outliers. To ensure sparsity, an Lp-norm (0 ⩽ p ⩽ 1) constraint, which is more general and effective than the L1-norm constraint, is considered. A simple yet efficient algorithm is developed for the proposed model. Its complexity increases approximately linearly with both the size and the dimensionality of the given data, which is comparable to or better than that of current sparse PCA methods. The proposed algorithm is also proved to converge to a reasonable local optimum of the model. The efficiency and robustness of the algorithm are verified by a series of experiments on both synthetic and digit image data.
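The abstract does not spell out the update rule, so the following is only an illustrative sketch, not the authors' algorithm. It combines the fixed-point PCA-L1 iteration of Kwak (sign the projections, sum the signed samples, renormalize) with hard thresholding, which corresponds to the p = 0 end of the Lp constraint. The function name `sparse_l1_pca_component`, the sparsity level `k` (number of nonzero loadings), the random initialization, and the stopping rule are all assumptions made for this example. Each iteration is dominated by two matrix-vector products, so its cost grows linearly in both the number of samples n and the dimension d.

```python
import numpy as np


def sparse_l1_pca_component(X, k, max_iter=100, seed=0):
    """Hypothetical sketch: find a unit-L2-norm loading vector w with at most k
    nonzeros that locally maximizes the L1-norm variance sum_i |w^T x_i|.

    X is an (n, d) array whose rows are samples, assumed already centered.
    """
    d = X.shape[1]
    rng = np.random.default_rng(seed)

    def project(v):
        # Keep the k largest-magnitude entries of v (hard thresholding, the p = 0
        # case) and rescale to unit L2 norm; this maximizes w^T v over all
        # unit-norm, k-sparse w.
        w = np.zeros_like(v)
        idx = np.argsort(np.abs(v))[-k:]
        w[idx] = v[idx]
        norm = np.linalg.norm(w)
        return w / norm if norm > 0 else w

    w = project(rng.standard_normal(d))
    objective = np.abs(X @ w).sum()
    for _ in range(max_iter):
        # Polarity of each sample's projection; zeros are mapped to +1 here
        # (a simplification).
        signs = np.where(X @ w >= 0, 1.0, -1.0)
        # Signed sum of samples, then sparse projection: O(n d) per iteration.
        w_new = project(X.T @ signs)
        new_objective = np.abs(X @ w_new).sum()
        if new_objective <= objective + 1e-12:
            break  # no further improvement: a local optimum of this sketch
        w, objective = w_new, new_objective
    return w, objective
```

The objective of this sketch never decreases: for the current signs s, the new vector maximizes the sum of s_i w^T x_i over all unit-norm k-sparse w, the current w is one such vector, and the sum of |w^T x_i| is never smaller than the sum of s_i w^T x_i. A typical call is `w, obj = sparse_l1_pca_component(X - np.median(X, axis=0), k=5)`, where median centering is one robust choice, again an assumption rather than something the abstract prescribes.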




About this article

Cite this article

Zhao, Q., Meng, D. & Xu, Z. Robust sparse principal component analysis. Sci. China Inf. Sci. 57, 1–14 (2014). https://doi.org/10.1007/s11432-013-4970-y


DOI: https://doi.org/10.1007/s11432-013-4970-y