Abstract

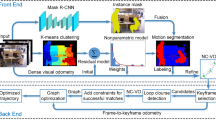

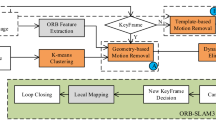

Visual localization is an essential capability in robotics and has attracted increasing interest over the past few years. However, most visual localization systems assume a static environment, an assumption that rarely holds in real-world scenes containing moving objects. In this paper, we present DFR-SLAM, a real-time, accurate RGB-D SLAM system built on ORB-SLAM2 that performs well in a variety of challenging dynamic scenarios. At the core of our system are a motion consensus filtering algorithm that estimates the initial camera pose and a graph-cut optimization framework that combines long-term observations, prior information, and spatial coherence to jointly separate dynamic from static visual features. Whereas other systems for dynamic environments detect dynamic components using information from frames within a short time span, our system uses observations accumulated over a long sequence of keyframes. We evaluate our system on dynamic sequences from the public TUM dataset; the results show that it significantly outperforms the original ORB-SLAM2. In addition, it achieves competitive localization accuracy with satisfactory real-time performance compared with closely related SLAM systems designed for dynamic environments.
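To make the graph-cut idea concrete, the sketch below labels a handful of features as static or dynamic by minimizing a generic binary energy: a per-feature cost for each label plus a penalty whenever two neighbouring features receive different labels. It is only an illustration under stated assumptions. The abstract does not give the actual energy terms, so `cost_static`, `cost_dynamic`, and the edge weights are hypothetical stand-ins for the long-term observation, prior, and spatial-coherence terms, and a plain Edmonds-Karp max-flow is swapped in for whatever solver the system really uses.

```python
from collections import deque

EPS = 1e-12


def min_cut_labels(cost_static, cost_dynamic, edges):
    """Label each feature 'static' or 'dynamic' via an s-t minimum cut.

    cost_static[i] / cost_dynamic[i]: hypothetical non-negative costs of
    assigning feature i to each label. edges: (i, j, w) triples, where w is
    the penalty paid if neighbouring features i and j get different labels.
    """
    n = len(cost_static)
    s, t = n, n + 1                      # source = static side, sink = dynamic side
    cap = [dict() for _ in range(n + 2)]  # residual capacities

    def add(u, v, c):
        cap[u][v] = cap[u].get(v, 0.0) + c
        cap[v].setdefault(u, 0.0)        # make sure the reverse arc exists

    for i in range(n):
        add(s, i, cost_dynamic[i])       # cut when i ends up on the sink side
        add(i, t, cost_static[i])        # cut when i ends up on the source side
    for i, j, w in edges:
        add(i, j, w)
        add(j, i, w)

    def reachable():
        """Breadth-first search from the source over arcs with spare capacity."""
        parent = {s: None}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v, c in cap[u].items():
                if c > EPS and v not in parent:
                    parent[v] = u
                    queue.append(v)
        return parent

    # Edmonds-Karp: push flow along shortest augmenting paths until none is left.
    while True:
        parent = reachable()
        if t not in parent:
            break
        path, v = [], t
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        flow = min(cap[u][v] for u, v in path)
        for u, v in path:
            cap[u][v] -= flow
            cap[v][u] += flow

    # Features still reachable from the source lie on the static side of the cut.
    return ["static" if i in parent else "dynamic" for i in range(n)]


# Four features on a chain; feature 2 is slightly cheaper to call static on its
# own, but both of its neighbours are strongly dynamic.
labels = min_cut_labels(
    cost_static=[0, 5, 2, 5],
    cost_dynamic=[5, 0, 3, 0],
    edges=[(0, 1, 1), (1, 2, 2), (2, 3, 2)],
)
print(labels)
```

In this toy input, the pairwise penalty outweighs the small per-feature preference of feature 2, so it is labelled dynamic together with its neighbours. With the edges removed, the same call would label it static, which is the kind of effect a spatial-coherence term is meant to have.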

Acknowledgements

This work was supported in part by the National Natural Science Foundation of China (Grant Nos. 61922076, 61873252).

Cite this article

Liu, C., Qin, J., Wang, S. et al. Accurate RGB-D SLAM in dynamic environments based on dynamic visual feature removal. Sci. China Inf. Sci. 65, 202206 (2022). https://doi.org/10.1007/s11432-021-3425-8

DOI: https://doi.org/10.1007/s11432-021-3425-8