Abstract

Purpose

We present a fully image-based visual servoing framework for neurosurgical navigation and needle guidance. The proposed servo-control scheme compensates for movements of the target anatomy, thereby maintaining high navigational accuracy over time, and automatically aligns the needle guide for accurate manual insertions.

Method

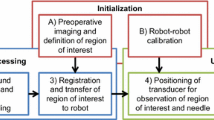

Our system comprises a motorized 3D ultrasound (US) transducer mounted on a robotic arm and equipped with a needle guide. It continuously registers US sweeps in real time with a pre-interventional plan based on CT or MR images and annotations. While a visual control law maintains anatomy visibility and alignment of the needle guide, a force controller is employed for acoustic coupling and tissue pressure. We validate the servoing capabilities of our method on a geometric gel phantom and real human anatomy, and the needle targeting accuracy using CT images on a lumbar spine gel phantom under neurosurgery conditions.

Results

Despite the varying resolution of the acquired 3D sweeps, we achieved direction-independent translational and rotational positioning errors of \(0.35\pm 0.19\) mm and \(0.61^\circ \pm 0.45^\circ \), respectively. Our method is capable of compensating movements of around 25 mm/s and works reliably on human anatomy with errors of \(1.45\pm 0.78\) mm. In all four manual insertions by an expert surgeon, a needle could be successfully inserted into the facet joint, with an estimated targeting accuracy of \(1.33\pm 0.33\) mm, superior to the gold standard.

Conclusion

The experiments demonstrated the feasibility of robotic ultrasound-based navigation and needle guidance for neurosurgical applications such as lumbar spine injections.

Notes

Throughout this article, linear transformations and vectors are expressed in computer vision notation, i.e., using \(4\times 4\) homogeneous matrices and \(4\times 1\) vectors.
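As a minimal illustration of this notation, the following sketch builds a 4×4 homogeneous matrix from a rotation about the z-axis and a translation, and applies it to 4×1 vectors. It is not taken from the presented system; the function names (`make_transform`, `compose`, `apply`, `point`, `direction`) are hypothetical and chosen for this example only, and the restriction to z-axis rotations is an assumption made to keep the sketch short.

```python
import math


def make_transform(angle_z_deg, translation):
    """Build a 4x4 homogeneous matrix: rotation about z plus a translation.

    Hypothetical helper for illustration; units (e.g. mm) are up to the caller.
    """
    c = math.cos(math.radians(angle_z_deg))
    s = math.sin(math.radians(angle_z_deg))
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def compose(a, b):
    """Return the 4x4 product a*b, i.e. apply b first, then a."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
        for i in range(4)
    ]


def apply(t, v):
    """Apply a 4x4 transform t to a 4x1 homogeneous vector v."""
    return [sum(t[i][k] * v[k] for k in range(4)) for i in range(4)]


def point(x, y, z):
    """A position: last component 1, so translations affect it."""
    return [x, y, z, 1.0]


def direction(x, y, z):
    """A direction: last component 0, so only the rotation affects it."""
    return [x, y, z, 0.0]


# Example: rotate by 90 degrees about z, then shift by 10 along x.
T = make_transform(90.0, (10.0, 0.0, 0.0))
moved_point = apply(T, point(1.0, 0.0, 0.0))
moved_direction = apply(T, direction(1.0, 0.0, 0.0))
```

The benefit of this convention is that chained coordinate changes reduce to matrix products via `compose`, while the fourth vector component distinguishes positions from directions.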

Acknowledgements

We thank ImFusion GmbH, Munich, Germany, for providing their image processing framework and their continuous support, and the Department of Nuclear Medicine at Klinikum rechts der Isar for several CT acquisitions. Furthermore, we wish to thank Julia Rackerseder for producing the phantoms used and Rüdiger Göbl for his assistance during the experiments.

Funding

This work was partially funded by the Bayerische Forschungsstiftung, Award Number AZ-1072-13 (project RoBildOR).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Informed consent

Informed consent was obtained from all individual participants included in the volunteer study. All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and national research committees.

Additional information

Oliver Zettinig and Benjamin Frisch have contributed equally to this work. Yu-Mi Ryang and Nassir Navab have contributed equally to this work.

Cite this article

Zettinig, O., Frisch, B., Virga, S. et al. 3D ultrasound registration-based visual servoing for neurosurgical navigation. Int J CARS 12, 1607–1619 (2017). https://doi.org/10.1007/s11548-017-1536-2

DOI: https://doi.org/10.1007/s11548-017-1536-2