Abstract

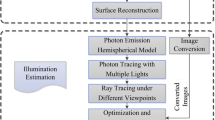

This paper first improves the backbone network for multi-channel light sources so that multi-channel images can be fused for joint training. Second, for high-resolution detection images, the large memory consumption forces a smaller batch size, which in turn affects the model distribution; group regularization is adopted so that the model can still be trained normally with small batches. Combined with the region proposal network method, this improves both the final detection accuracy and the accuracy of candidate-box regression. Third, based on image lighting technology and physically based rendering theory, and after an in-depth analysis of the requirements for lighting effects and the performance limitations, a variety of image enhancement techniques, such as gamma correction and HDR, are combined and implemented in Java to obtain a real-time lighting algorithm that runs efficiently on current mainstream PCs. The algorithm integrates well into the existing rasterization rendering pipeline while balancing better lighting effects against higher operating efficiency. Finally, the lighting effects achieved by the algorithm are tested and compared through experiments. The algorithm not only achieves a very good light and shadow effect when rendering virtual objects against a real scene as the background, but also meets the frame-rate requirements of realistic rendering in more complex scenes. The experimental results show that the virtual light source automatically generated by the algorithm approximates the lighting of the real scene, and that virtual and real objects produce approximately consistent lighting effects in an augmented reality environment with one or more real light sources.

Acknowledgements

This work was supported by the Key Research Base of Humanities and Social Sciences of the Ministry of Education, Major Project of the Bashu Culture Research Center of Sichuan Normal University (No. bszd19-03).

About this article

Cite this article

Ni, T., Chen, Y., Liu, S. et al. Detection of real-time augmented reality scene light sources and construction of photorealistic rendering framework. J Real-Time Image Proc 18, 271–281 (2021). https://doi.org/10.1007/s11554-020-01022-6

DOI: https://doi.org/10.1007/s11554-020-01022-6