Abstract

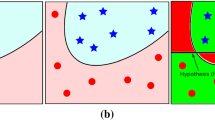

Smoothing methods, extensively used for solving important mathematical programming problems and applications, are applied here to generate and solve an unconstrained smooth reformulation of the support vector machine for pattern classification using a completely arbitrary kernel. We term such a reformulation a smooth support vector machine (SSVM). A fast Newton–Armijo algorithm for solving the SSVM converges globally and quadratically. Numerical results and comparisons are given to demonstrate the effectiveness and speed of the algorithm. On six publicly available datasets, SSVM had the highest tenfold cross-validation correctness compared with four other methods and was also the fastest. On larger problems, SSVM was comparable to or faster than SVMlight (T. Joachims, in Advances in Kernel Methods—Support Vector Learning, MIT Press: Cambridge, MA, 1999), SOR (O.L. Mangasarian and David R. Musicant, IEEE Transactions on Neural Networks, vol. 10, pp. 1032–1037, 1999) and SMO (J. Platt, in Advances in Kernel Methods—Support Vector Learning, MIT Press: Cambridge, MA, 1999). SSVM can also generate a highly nonlinear separating surface such as a checkerboard.
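The abstract names the ingredients of the method (a smooth unconstrained reformulation solved by a Newton–Armijo iteration) but gives no formulas. The following is a minimal sketch of how those pieces could fit together, assuming the linear-kernel case only, the smooth plus-function approximation p(x, α) = x + (1/α) log(1 + exp(−αx)), and an objective of the form (ν/2)‖p(e − D(Aw − eγ), α)‖² + ½(w'w + γ²). The names `smooth_plus` and `ssvm_linear`, the defaults for `nu` and `alpha`, and the Armijo constants are illustrative assumptions, not the authors' implementation.

```python
import numpy as np

def smooth_plus(x, alpha):
    """Smooth approximation of max(x, 0): x + log(1 + exp(-alpha*x)) / alpha."""
    # logaddexp(0, -alpha*x) evaluates log(1 + exp(-alpha*x)) without overflow.
    return x + np.logaddexp(0.0, -alpha * x) / alpha

def sigmoid(z):
    """Numerically stable logistic function 1 / (1 + exp(-z))."""
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def ssvm_linear(A, d, nu=1.0, alpha=5.0, tol=1e-8, max_iter=50):
    """Sketch of a Newton-Armijo solve for a linear smooth SVM (assumed form).

    A: (m, n) data matrix; d: (m,) labels in {+1, -1}.
    Returns (w, gamma) for the classifier sign(x @ w - gamma).
    """
    m, n = A.shape
    # E maps z = (w, gamma) to D(Aw - e*gamma), with D = diag(d).
    E = d[:, None] * np.hstack([A, -np.ones((m, 1))])
    z = np.zeros(n + 1)

    def objective(v):
        p = smooth_plus(1.0 - E @ v, alpha)
        return 0.5 * nu * (p @ p) + 0.5 * (v @ v)

    for _ in range(max_iter):
        r = 1.0 - E @ z
        p = smooth_plus(r, alpha)
        s = sigmoid(alpha * r)                 # dp/dr
        grad = z - nu * E.T @ (p * s)
        if np.linalg.norm(grad) < tol:
            break
        # Diagonal weights of the Hessian: (p')^2 + p * p'', with p'' = alpha*s*(1-s).
        h = s * s + p * alpha * s * (1.0 - s)
        H = np.eye(n + 1) + nu * (E.T * h) @ E
        step = np.linalg.solve(H, -grad)       # Newton direction
        # Armijo backtracking: halve the step until sufficient decrease holds.
        t, f0, slope = 1.0, objective(z), grad @ step
        while objective(z + t * step) > f0 + 0.25 * t * slope and t > 1e-10:
            t *= 0.5
        z = z + t * step
    return z[:n], z[n]
```

A point `x` would then be classified by `np.sign(x @ w - gamma)`. Because the assumed objective is strongly convex and twice differentiable, each Newton system has a positive definite matrix, which is what makes the plain `np.linalg.solve` call adequate in this sketch; a nonlinear kernel would require replacing `A` with a kernel matrix, which is not shown here.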

References

L. Armijo, “Minimization of functions having Lipschitz-continuous first partial derivatives,” Pacific Journal of Mathematics, vol. 16, pp. 1–3, 1966.

K.P. Bennett and O.L. Mangasarian, “Robust linear programming discrimination of two linearly inseparable sets,” Optimization Methods and Software, vol. 1, pp. 23–34, 1992.

D.P. Bertsekas, Nonlinear Programming, 2nd ed., Athena Scientific: Belmont, MA, 1999.

P.S. Bradley and O.L. Mangasarian, “Feature selection via concave minimization and support vector machines,” in Machine Learning Proceedings of the Fifteenth International Conference (ICML '98), J. Shavlik (Ed.), Morgan Kaufmann: San Francisco, CA, 1998, pp. 82–90. ftp://ftp.cs.wisc.edu/mathprog/tech-reports/98-03.ps.

P.S. Bradley and O.L. Mangasarian, “Massive data discrimination via linear support vector machines,” Optimization Methods and Software, vol. 13, pp. 1–10, 2000. ftp://ftp.cs.wisc.edu/math-prog/tech-reports/98-03.ps.

C.J.C. Burges, “A tutorial on support vector machines for pattern recognition,” Data Mining and Knowledge Discovery, vol. 2, pp. 121–167, 1998.

B. Chen and P.T. Harker, “Smooth approximations to nonlinear complementarity problems,” SIAM Journal on Optimization, vol. 7, pp. 403–420, 1997.

Chunhui Chen and O.L. Mangasarian, “Smoothing methods for convex inequalities and linear complementarity problems,” Mathematical Programming, vol. 71, pp. 51–69, 1995.

Chunhui Chen and O.L. Mangasarian, “A class of smoothing functions for nonlinear and mixed complementarity problems,” Computational Optimization and Applications, vol. 5, pp. 97–138, 1996.

X. Chen, L. Qi, and D. Sun, “Global and superlinear convergence of the smoothing Newton method and its application to general box constrained variational inequalities,” Mathematics of Computation, vol. 67, pp. 519–540, 1998.

X. Chen and Y. Ye, “On homotopy-smoothing methods for variational inequalities,” SIAM Journal on Control and Optimization, vol. 37, pp. 589–616, 1999.

V. Cherkassky and F. Mulier, Learning from Data—Concepts, Theory and Methods, John Wiley & Sons: New York, 1998.

P.W. Christensen and J.-S. Pang, “Frictional contact algorithms based on semismooth Newton methods,” in Reformulation: Nonsmooth, Piecewise Smooth, Semismooth and Smoothing Methods, M. Fukushima and L. Qi (Eds.), Kluwer Academic Publishers: Dordrecht, the Netherlands, 1999, pp. 81–116.

CPLEX Optimization Inc., Incline Village, Nevada. Using the CPLEX(TM) Linear Optimizer and CPLEX(TM) Mixed Integer Optimizer (Version 2.0), 1992.

J.E. Dennis and R.B. Schnabel, Numerical Methods for Unconstrained Optimization and Nonlinear Equations. Prentice-Hall: Englewood Cliffs, NJ, 1983.

M. Fukushima and L. Qi, Reformulation: Nonsmooth, Piecewise Smooth, Semismooth and Smoothing Methods. Kluwer Academic Publishers: Dordrecht, the Netherlands, 1999.

T. Joachims, “Making large-scale support vector machine learning practical,” in Advances in Kernel Methods—Support Vector Learning, Bernhard Schölkopf, Christopher J.C. Burges, and Alexander J. Smola (Eds.), MIT Press: Cambridge, MA, 1999, pp. 169–184.

L. Kaufman, “Solving the quadratic programming problem arising in support vector classification,” in Advances in Kernel Methods—Support Vector Learning, Bernhard Schölkopf, Christopher J.C. Burges, and Alexander J. Smola (Eds.), MIT Press: Cambridge, MA, 1999, pp. 147–167.

O.L. Mangasarian, “Mathematical programming in neural networks,” ORSA Journal on Computing, vol. 5, pp. 349–360, 1993.

O.L. Mangasarian, “Parallel gradient distribution in unconstrained optimization,” SIAM Journal on Control and Optimization, vol. 33, pp. 1916–1925, 1995. ftp://ftp.cs.wisc.edu/tech-reports/reports/93/tr1145.ps.

O.L. Mangasarian, “Generalized support vector machines,” in Advances in Large Margin Classifiers, A. Smola, P. Bartlett, B. Schölkopf, and D. Schuurmans (Eds.), MIT Press: Cambridge, MA, 2000, pp. 135–146. ftp://ftp.cs.wisc.edu/math-prog/tech-reports/98-14.ps.

O.L. Mangasarian and David R. Musicant, “Massive support vector regression,” Technical Report 99-02, Data Mining Institute, Computer Sciences Department, University of Wisconsin: Madison, Wisconsin, July 1999. In “Applications and algorithms of complementarity,” M.C. Ferris, O.L. Mangasarian, and J.-S. Pang (Eds.), Kluwer Academic Publishers: Boston, 2001, pp. 233–251. ftp://ftp.cs.wisc.edu/pub/dmi/tech-reports/99-02.ps.

O.L. Mangasarian and David R. Musicant, “Successive overrelaxation for support vector machines,” IEEE Transactions on Neural Networks, vol. 10, pp. 1032–1037, 1999. ftp://ftp.cs.wisc.edu/math-prog/techreports/98-18.ps.

O.L. Mangasarian, W.N. Street, and W.H. Wolberg, “Breast cancer diagnosis and prognosis via linear programming,” Operations Research, vol. 43, pp. 570–577, 1995.

MATLAB, User's Guide, The MathWorks, Inc.: Natick, MA 01760, 1992.

P.M. Murphy and D.W. Aha, “UCI repository of machine learning databases,” 1992. www.ics.uci.edu/~mlearn/MLRepository.html.

J. Platt, “Sequential minimal optimization: A fast algorithm for training support vector machines,” in Advances in Kernel Methods—Support Vector Learning, Bernhard Schölkopf, Christopher J.C. Burges, and Alexander J. Smola (Eds.), MIT Press: Cambridge, MA, 1999, pp. 185–208. http://www.research.microsoft.com/~jplatt/smo.html.

M. Stone, “Cross-validatory choice and assessment of statistical predictions,” Journal of the Royal Statistical Society, vol. 36, pp. 111–147, 1974.

P. Tseng, “Analysis of a non-interior continuation method based on Chen-Mangasarian smoothing functions for complementarity problems,” in Reformulation: Nonsmooth, Piecewise Smooth, Semismooth and Smoothing Methods, M. Fukushima and L. Qi (Eds.), Kluwer Academic Publishers: Dordrecht, the Netherlands, 1999, pp. 381–404.

V.N. Vapnik, The Nature of Statistical Learning Theory. Springer: New York, 1995.

Cite this article

Lee, YJ., Mangasarian, O. SSVM: A Smooth Support Vector Machine for Classification. Computational Optimization and Applications 20, 5–22 (2001). https://doi.org/10.1023/A:1011215321374

DOI: https://doi.org/10.1023/A:1011215321374