Abstract

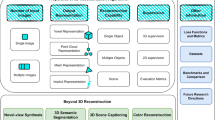

Three-dimensional (3D) reconstruction of shapes is an important research topic in computer vision, computer graphics, pattern recognition, and virtual reality. Existing 3D reconstruction methods usually suffer from two bottlenecks: (1) they involve multiple manually designed stages, which can lead to cumulative errors, and they can hardly learn semantic features of 3D shapes automatically; (2) they depend heavily on the content and quality of images, as well as on precisely calibrated cameras. As a result, it is difficult to improve the reconstruction accuracy of those methods. 3D reconstruction methods based on deep learning overcome both bottlenecks by using deep networks to learn semantic features of 3D shapes automatically from low-quality images. However, although these methods have various architectures, no in-depth analysis and comparison of them has been available so far. We present a comprehensive survey of 3D reconstruction methods based on deep learning. First, based on the deep learning model architecture, we divide these methods into four types: recurrent neural network, deep autoencoder, generative adversarial network, and convolutional neural network based methods, and we analyze the corresponding methodologies carefully. Second, we investigate in detail four representative databases that are commonly used by the above methods. Third, we give a comprehensive comparison of 3D reconstruction methods based on deep learning, covering the results of different methods on the same database, the results of each method on different databases, and the robustness of each method with respect to the number of input views. Finally, we discuss the future development of 3D reconstruction methods based on deep learning.

Author information

Contributions

Caixia LIU designed the research outline and collected the data. Caixia LIU and Shaofan WANG drafted the manuscript. Dehui KONG and Zhiyong WANG helped organize the manuscript. Caixia LIU, Shaofan WANG, Jinghua LI, and Baocai YIN revised and finalized the paper.

Ethics declarations

Caixia LIU, Dehui KONG, Shaofan WANG, Zhiyong WANG, Jinghua LI, and Baocai YIN declare that they have no conflict of interest.

Additional information

Project supported by the National Natural Science Foundation of China (Nos. 61772049, 61632006, 61876012, U19B2039, and 61906011) and the Beijing Natural Science Foundation of China (No. 4202003).

About this article

Cite this article

Liu, C., Kong, D., Wang, S. et al. Deep3D reconstruction: methods, data, and challenges. Front Inform Technol Electron Eng 22, 652–672 (2021). https://doi.org/10.1631/FITEE.2000068

DOI: https://doi.org/10.1631/FITEE.2000068

Key words

- Deep learning models

- Three-dimensional reconstruction

- Recurrent neural network

- Deep autoencoder

- Generative adversarial network

- Convolutional neural network