Abstract

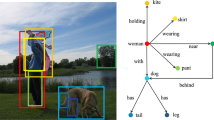

Object detection is one of the hottest research directions in computer vision. It has already made impressive progress in academia and has many valuable applications in industry. However, mainstream detection methods still have two shortcomings: (1) even a model trained on large amounts of data generally cannot be used across different kinds of scenes; (2) once a model is deployed, it cannot evolve autonomously as unlabeled scene data accumulate. To address these problems, and inspired by visual knowledge theory, we propose a novel scene-adaptive evolution algorithm for unsupervised video object detection that reduces the impact of scene changes through the concept of object groups. First, we extract a large number of object proposals from unlabeled data using a pre-trained detection model. Second, we build a visual knowledge dictionary of object concepts by clustering the proposals, in which each cluster center represents an object prototype. Third, we investigate the relations between different clusters and the object information of different groups, and propose a graph-based group information propagation strategy to determine the category of each object concept, which effectively distinguishes positive from negative proposals. With these pseudo labels, the pre-trained model can easily be fine-tuned. The effectiveness of the proposed method is verified by various experiments, which show significant improvements.
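
The second step of the abstract (clustering proposals into a dictionary of object prototypes) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes each proposal produced by the pre-trained detector is already represented by a fixed-length feature vector, and it uses plain k-means (Lloyd's algorithm) with deterministic farthest-first seeding, because the abstract does not specify the clustering algorithm, the features, or the number of clusters. The names `build_concept_dictionary` and `farthest_first_init` are hypothetical.

```python
# Hypothetical sketch: plain k-means stands in for the paper's unspecified
# clustering step; each cluster center plays the role of an object prototype.
from typing import List, Sequence, Tuple

Vector = List[float]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two proposal feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def farthest_first_init(features: Sequence[Sequence[float]], k: int) -> List[Vector]:
    """Deterministic seeding: start from the first proposal, then repeatedly
    add the proposal farthest from its nearest already-chosen center."""
    centers = [list(features[0])]
    while len(centers) < k:
        farthest = max(
            features, key=lambda f: min(squared_distance(f, c) for c in centers)
        )
        centers.append(list(farthest))
    return centers


def build_concept_dictionary(
    features: Sequence[Sequence[float]], k: int, iterations: int = 20
) -> Tuple[List[Vector], List[int]]:
    """Cluster proposal features with Lloyd's k-means.

    Returns (prototypes, assignments): prototypes[c] is the center of cluster c
    (one hypothetical dictionary entry) and assignments[i] is the cluster of
    proposal i.
    """
    if not 1 <= k <= len(features):
        raise ValueError("k must be between 1 and the number of proposals")
    prototypes = farthest_first_init(features, k)
    assignments: List[int] = []
    for _ in range(iterations):
        # Assignment step: attach every proposal to its nearest prototype.
        new_assignments = [
            min(range(k), key=lambda c: squared_distance(f, prototypes[c]))
            for f in features
        ]
        if new_assignments == assignments:
            break  # converged: memberships no longer change
        assignments = new_assignments
        # Update step: move each prototype to the mean of its members.
        for c in range(k):
            members = [f for f, a in zip(features, assignments) if a == c]
            if members:  # an empty cluster keeps its previous center
                prototypes[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return prototypes, assignments
```

For example, clustering the toy features `[[0.0, 0.1], [0.1, 0.0], [5.0, 5.1], [5.1, 4.9]]` with `k=2` groups the first two and the last two proposals, giving assignments `[0, 0, 1, 1]`. Farthest-first seeding is used here only to make the result deterministic; a real pipeline would more likely rely on an established k-means implementation over detector region features.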

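Abstract (Chinese version)

Object detection is one of the hottest research directions in machine vision. It has achieved remarkable results in academia and has many valuable applications in industry. However, mainstream detection methods still have two shortcomings: (1) even a model effectively trained on large amounts of data still cannot generalize well to new scenes; (2) once a model is deployed, it cannot evolve autonomously with the continuously accumulating unlabeled data. To overcome these problems, and inspired by visual knowledge theory, we propose a scene-adaptive evolving unsupervised video object detection algorithm, which uses the concept of object groups to reduce the adverse effects of scene changes. First, a large number of candidate objects are extracted from unlabeled data with a pre-trained detection model, and the candidates are then clustered to build a visual knowledge dictionary of object concepts, in which each cluster center represents an object prototype. Next, by studying the relations between different object clusters and the object information of different groups, we propose a graph-based group information propagation strategy to determine which concept each object belongs to, which effectively distinguishes the candidate objects. Finally, the collected pseudo labels are used to fine-tune the pre-trained model, allowing the algorithm to adapt to new scenes. The effectiveness of the algorithm is verified by several different experiments, and the performance improvement is significant.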
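
The third and fourth steps of the abstract (deciding, via propagation over a graph of clusters, which object concepts are real objects, then keeping the corresponding proposals as pseudo labels) can be illustrated with the sketch below. The paper's exact group information propagation rule is not given in the abstract, so this substitutes standard iterative label propagation (the "local and global consistency" update F ← αSF + (1 − α)Y) over a Gaussian-similarity graph of cluster prototypes. The seed labels (+1 for clusters the pre-trained detector already scores confidently, −1 for clusters it rejects, 0 otherwise), the bandwidth `sigma`, the weight `alpha`, and all function names are hypothetical.

```python
# Hypothetical sketch: standard label propagation stands in for the paper's
# graph-based group information propagation strategy.
import math
from typing import List, Sequence


def similarity_graph(prototypes: Sequence[Sequence[float]], sigma: float) -> List[List[float]]:
    """Gaussian edge weights between cluster prototypes (no self-loops)."""
    n = len(prototypes)
    weights = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                d2 = sum((a - b) ** 2 for a, b in zip(prototypes[i], prototypes[j]))
                weights[i][j] = math.exp(-d2 / (2.0 * sigma ** 2))
    return weights


def propagate_labels(
    prototypes: Sequence[Sequence[float]],
    seeds: Sequence[int],
    alpha: float = 0.8,
    sigma: float = 1.0,
    iterations: int = 50,
) -> List[float]:
    """Iterate F <- alpha * S F + (1 - alpha) * Y on the prototype graph.

    seeds[i] is +1 (confident object), -1 (confident background) or 0
    (undecided) for cluster i; S is the row-normalized weight matrix.
    Returns one real-valued score per cluster; its sign is the decision.
    """
    n = len(prototypes)
    weights = similarity_graph(prototypes, sigma)
    rows = []
    for row in weights:
        total = sum(row)
        rows.append([w / total if total > 0 else 0.0 for w in row])
    scores = [float(y) for y in seeds]
    for _ in range(iterations):
        # Each cluster blends its neighbors' current scores with its own seed.
        scores = [
            alpha * sum(rows[i][j] * scores[j] for j in range(n))
            + (1.0 - alpha) * seeds[i]
            for i in range(n)
        ]
    return scores


def select_pseudo_labels(assignments: Sequence[int], positive: Sequence[bool]) -> List[int]:
    """Indices of proposals whose cluster was judged to be a real object."""
    return [i for i, c in enumerate(assignments) if positive[c]]
```

For instance, with prototypes `[[0.0, 0.0], [0.5, 0.0], [5.0, 5.0], [5.5, 5.0]]` and seeds `[1, 0, -1, 0]`, the undecided clusters 1 and 3 inherit the sign of their nearby seeded neighbors, so `[s > 0 for s in scores]` is `[True, True, False, False]`. Proposals whose clusters come out positive would then serve as pseudo labels for fine-tuning the detector.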

Author information

Contributions

Shiliang PU, Di XIE, and Yunhe PAN designed the research. Wei ZHAO and Weijie CHEN conducted the experiments. Wei ZHAO drafted the manuscript. Shicai YANG and Di XIE helped organize the manuscript. Wei ZHAO and Shicai YANG revised and finalized the paper.

Ethics declarations

Shiliang PU, Wei ZHAO, Weijie CHEN, Shicai YANG, Di XIE, and Yunhe PAN declare that they have no conflict of interest.

Additional information

Project supported by the National Key R&D Program of China (No. 2020AAA010400X) and the Hikvision Open Fund, China.

About this article

Cite this article

Pu, S., Zhao, W., Chen, W. et al. Unsupervised object detection with scene-adaptive concept learning. Front Inform Technol Electron Eng 22, 638–651 (2021). https://doi.org/10.1631/FITEE.2000567

DOI: https://doi.org/10.1631/FITEE.2000567